Computer Components That Bottleneck a Fast Build

TerraVista Metrics (TVM) Industry News

May 04, 2026

Even a premium system can underperform when overlooked computer components create hidden constraints. For technical evaluators, identifying the parts that bottleneck a fast build is essential to balancing throughput, thermal stability, upgrade flexibility, and long-term reliability. This article breaks down the key computer components that most often limit real-world performance and shows how to assess them with precision rather than marketing claims.

Why bottlenecks depend on the workload, not just the spec sheet

A common purchasing mistake is to evaluate computer components in isolation. In real deployment, a system bottleneck appears when one part cannot feed, cool, store, or transfer data fast enough for the rest of the build. That means the limiting component in a gaming desktop may be very different from the limiting component in a hotel analytics workstation, a tourism design office rendering node, or a front-desk AI kiosk handling edge inference and multiple peripherals.

For technical assessment teams, the key question is not “Which component is fastest?” but “Which computer components are most likely to cap performance in this operating scenario?” This matters in tourism and hospitality environments, where systems may support surveillance review, property management software, digital signage, BIM visualization, IoT dashboards, or cloud-synced guest experience tools. In these contexts, performance consistency, thermals, serviceability, and interface compatibility often matter more than peak benchmark headlines.

A scenario-based view of the computer components that usually bottleneck performance

Different workloads stress different layers of the system. The table below helps technical evaluators quickly map common use cases to the computer components most likely to become constraints.

| Application scenario | Likely bottleneck computer components | What evaluators should verify |
| --- | --- | --- |
| 4K gaming or visualization | GPU, VRAM, cooling, power delivery | Frame stability, thermal throttling, PSU headroom, case airflow |
| Office multitasking and browser-heavy workflows | RAM, SSD, low-core CPU under burst load | Memory capacity, storage latency, sustained responsiveness |
| Video editing and content production | CPU, GPU, RAM, SSD throughput | Timeline scrubbing, codec acceleration, cache drive speed |
| Data dashboards, analytics, and BI workstations | CPU single-core behavior, RAM, storage IOPS | Query handling, data load times, memory pressure |
| Edge AI terminals and smart hospitality control nodes | GPU or NPU, networking, thermals, motherboard I/O | Inference consistency, port availability, uptime under heat |

Scenario 1: When the CPU is the real limiter

Among all computer components, the CPU becomes the bottleneck most often in mixed productivity systems. Evaluators tend to focus on core count, but many real workloads depend on a blend of single-thread speed, cache behavior, sustained boost performance, and thermal power limits. A processor that looks strong in short tests may throttle during long render jobs, large spreadsheet calculations, dashboard refreshes, or simulation tasks.

This is especially relevant in tourism infrastructure planning offices and operations centers where one machine may switch between CAD review, procurement spreadsheets, browser tabs, conferencing, and analytics panels. In such environments, underpowered CPUs create lag that users incorrectly blame on software. Technical evaluators should examine sustained clocks under realistic cooling conditions, not just advertised maximum frequency.

A CPU bottleneck is likely when GPU utilization stays low during a heavy task, when export times scale poorly despite fast storage, or when responsiveness drops as background applications accumulate. If the deployment involves virtual machines, database-heavy tools, or local automation scripts, prioritizing a balanced processor often delivers better value than overbuying a graphics card.
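The first symptom above, low GPU utilization during a heavy task, can be checked against logged telemetry. What follows is a minimal Python sketch rather than a vendor tool. The `Sample` type, the `likely_cpu_bound` function, and the 90% and 60% thresholds are illustrative assumptions that would need tuning for each workload.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Sample:
    """One telemetry reading taken while the heavy task is running."""
    cpu_util: float  # busiest-core CPU utilization, percent (0-100)
    gpu_util: float  # GPU utilization, percent (0-100)


def likely_cpu_bound(samples, cpu_high=90.0, gpu_low=60.0):
    """Flag a probable CPU bottleneck: CPU near saturation while the GPU waits.

    The thresholds are assumed starting points, not established limits.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    avg_cpu = mean(s.cpu_util for s in samples)
    avg_gpu = mean(s.gpu_util for s in samples)
    return avg_cpu >= cpu_high and avg_gpu <= gpu_low


# Hypothetical readings captured during an export job.
export_job = [Sample(96.0, 38.0), Sample(98.0, 41.0), Sample(94.0, 35.0)]
print(likely_cpu_bound(export_job))
```

Averaging over the whole task, rather than reading a single peak, keeps short spikes from triggering the flag.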

Scenario 2: When the GPU holds back a “fast” build

In graphics-intensive scenarios, the GPU is one of the most critical computer components. Yet the bottleneck is not always raw shader performance. VRAM capacity, memory bandwidth, driver stability, and thermal behavior can all become limiting factors. A system may score well in a benchmark but still struggle with 4K assets, real-time visualization, multi-display digital signage, or AI-assisted media generation.

For evaluators supporting hotel showrooms, immersive destination displays, architectural walkthroughs, or surveillance review stations, GPU suitability must be tested against output resolution, codec support, and continuous-duty operation. A graphics card that performs well for short gaming sessions may be a poor choice for 12-hour commercial runtime if cooling is weak or if aggressive fan curves make it too loud and accelerate fan wear.

Another frequent issue is pairing a high-end GPU with an entry-level CPU or inadequate power supply. In that case, premium computer components never reach their intended output. The lesson is simple: graphics performance must be evaluated as part of the platform, not as a standalone purchase line.

Scenario 3: RAM bottlenecks in multitasking, analytics, and virtualized workloads

Memory constraints are less visible than CPU or GPU limitations, but they are among the most disruptive in daily use. Systems with insufficient RAM often appear “randomly slow” because the operating system begins swapping active data to storage. This affects browser-heavy teams, property management users with many open windows, content reviewers, and analysts working with large datasets.

Technical evaluators should separate memory capacity from memory speed. Capacity usually matters first. A workstation with a fast processor and premium SSD can still feel sluggish if RAM fills up during video calls, spreadsheet work, mapping software, and cloud sync tasks running together. In hospitality back-office environments, this is common because users rarely run one clean application at a time.

Signs of RAM bottlenecks include frequent disk activity during ordinary multitasking, stuttering after opening many tabs, and declining performance over the workday. If the system will host virtual machines, AI toolchains, large media libraries, or engineering applications, memory expansion headroom should be a mandatory procurement checkpoint.
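One way to turn these signs into a procurement check is to log memory use across a normal workday and compare it with installed capacity. The sketch below assumes the samples are already collected in gigabytes. The function name `ram_headroom_report` and the 85% warning ratio are hypothetical choices, not an established standard.

```python
def ram_headroom_report(used_gb_samples, installed_gb, warn_ratio=0.85):
    """Summarize how close logged memory use came to installed capacity.

    warn_ratio is an assumed threshold; adjust it to the site's tolerance.
    """
    if not used_gb_samples or installed_gb <= 0:
        raise ValueError("need samples and a positive installed capacity")
    ratios = [used / installed_gb for used in used_gb_samples]
    over = sum(1 for r in ratios if r >= warn_ratio)
    return {
        "peak_ratio": max(ratios),
        "share_over_threshold": over / len(ratios),
        "constrained": max(ratios) >= warn_ratio,
    }


# Hypothetical workday log for a 16 GB back-office workstation.
report = ram_headroom_report([8.0, 12.0, 14.4, 15.2], installed_gb=16)
print(report)
```

A high `share_over_threshold` suggests the machine spends much of the day near its limit, which is a stronger signal than a single peak.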

Scenario 4: Storage bottlenecks are no longer just about capacity

Many buyers still treat storage as a simple capacity decision, but modern drives must also be assessed for latency, sustained write performance, controller quality, and thermal behavior. A low-cost SSD can make a system boot quickly while still underperforming during file-heavy operations, software updates, cache-intensive media work, or database extraction tasks.

In practical business settings, storage bottlenecks show up in project file loading, CCTV archive retrieval, local backup jobs, and large asset transfers between design, operations, and marketing teams. Technical evaluators should distinguish between peak sequential speed and real mixed workloads. DRAM-less drives, limited endurance ratings, and overheated M.2 devices can produce sharp drops after the initial burst phase.
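The drop after the initial burst phase can be quantified from a long write test by comparing early throughput with late throughput. This is a rough sketch under stated assumptions: throughput is sampled at fixed intervals in MB/s, and the `sustained_write_ratio` name and the three-sample window are illustrative choices.

```python
from statistics import mean


def sustained_write_ratio(throughput_mb_s, window=3):
    """Return late-phase throughput as a fraction of burst-phase throughput.

    A value near 1.0 means the drive held its speed; a low value means it
    slowed sharply once the burst phase ended.
    """
    if window <= 0 or len(throughput_mb_s) < 2 * window:
        raise ValueError("need at least 2 * window samples")
    burst = mean(throughput_mb_s[:window])
    sustained = mean(throughput_mb_s[-window:])
    if burst <= 0:
        raise ValueError("burst throughput must be positive")
    return sustained / burst


# Hypothetical log from a large file copy onto a low-cost SSD.
copy_log = [3000, 3000, 3000, 1200, 600, 600, 600]
print(round(sustained_write_ratio(copy_log), 2))
```

Comparing this ratio across candidate drives under the same transfer size gives a more realistic picture than peak sequential figures alone.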

For systems in remote tourism sites or high-uptime service environments, storage reliability matters as much as speed. Endurance, power-loss behavior, and easy replacement access often deserve more weight than top-line benchmark numbers.

Scenario 5: Motherboard, power supply, and cooling bottlenecks that buyers overlook

Some of the most expensive performance mistakes come from computer components that are not usually marketed as “fast.” The motherboard can limit expansion, PCIe lane allocation, memory stability, USB bandwidth, and storage options. The power supply can limit sustained performance if voltage regulation is poor or if headroom is too tight. Cooling can quietly force both CPU and GPU to reduce clock speed long before users realize what is happening.

These issues are crucial in deployment scenarios that demand reliability over appearance. A compact reception desk PC, a control-room workstation, or an edge system inside a warm equipment cabinet may all experience thermal saturation. If the case airflow is restrictive, premium computer components become trapped in a low-performance operating state. That is why technical evaluators should inspect VRM quality, fan layout, dust management, and service access, not just chipset branding.
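Power supply headroom, mentioned above as a limit on sustained performance, reduces to simple arithmetic once peak draw per component is estimated. The sketch below is illustrative only. The wattage figures are hypothetical, and `psu_headroom` is an assumed helper name.

```python
def psu_headroom(component_peak_watts, psu_rated_watts):
    """Return spare PSU capacity as a fraction of its rating.

    A negative result means estimated peak draw exceeds the rating.
    """
    if psu_rated_watts <= 0:
        raise ValueError("PSU rating must be positive")
    total_draw = sum(component_peak_watts.values())
    return (psu_rated_watts - total_draw) / psu_rated_watts


# Hypothetical peak-draw estimates in watts for a visualization workstation.
build = {"cpu": 125, "gpu": 320, "board_drives_fans": 75}
headroom = psu_headroom(build, psu_rated_watts=750)
print(f"{headroom:.0%}")
```

How much headroom is acceptable depends on duty cycle and ambient temperature, so the result is best read alongside thermal measurements taken in the actual enclosure.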

How different evaluators should prioritize computer components

Not every decision-maker should apply the same checklist. The right weighting depends on operational responsibility, failure tolerance, and upgrade policy.

| Evaluator type | Primary concern | Computer components to prioritize |
| --- | --- | --- |
| IT procurement lead | Lifecycle cost and supportability | Motherboard I/O, PSU quality, RAM expandability, SSD reliability |
| Design or media manager | Render speed and visual smoothness | GPU, CPU, RAM, high-performance storage |
| Operations director | Stability under continuous use | Cooling, PSU, SSD endurance, network interfaces |
| Technical assessment specialist | Evidence-based fit for workload | System balance, telemetry, thermals, real scenario benchmarks |

Common misjudgments when identifying bottleneck computer components

Several errors appear repeatedly in fast-build evaluations. First, buyers confuse peak benchmark results with sustained commercial performance. Second, they overspend on one flagship component while underfunding power, cooling, or memory. Third, they ignore environmental conditions such as dust, ambient heat, or restricted airflow in furniture or wall-mounted enclosures. Fourth, they fail to match computer components to software behavior, especially where licensing, codec support, or acceleration paths change actual hardware utilization.

Another common oversight is upgrade path blindness. A build that performs well today may become boxed in by limited DIMM slots, weak VRM design, too few M.2 sockets, or insufficient PCIe bandwidth. For organizations planning phased digital transformation, these hidden constraints can raise total cost of ownership more than the initial component price difference.

Practical assessment framework for selecting the right computer components

A strong review process starts with a workload matrix. List the actual applications, file sizes, display requirements, duty cycle, and expected concurrency. Then map each requirement to the computer components most likely to saturate. Use real test cases: open the same dashboards, render the same scenes, move the same data volumes, and monitor CPU package power, GPU utilization, memory pressure, drive temperature, and noise.
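The mapping step in this process can be sketched as a small lookup from monitored metrics to the components they implicate. Everything named here is an assumption for illustration: the metric keys, the normalization of each peak to a 0.0-1.0 scale, and the 0.9 saturation threshold.

```python
# Assumed mapping from a monitored metric to the component it implicates.
METRIC_TO_COMPONENT = {
    "cpu_util": "CPU",
    "gpu_util": "GPU",
    "memory_used_ratio": "RAM",
    "drive_busy_ratio": "Storage",
}


def saturated_components(peaks, threshold=0.9):
    """List components whose peak normalized load meets the threshold, worst first.

    `peaks` maps metric names to peak values on a 0.0-1.0 scale.
    Metrics missing from METRIC_TO_COMPONENT are ignored.
    """
    hits = [
        (value, METRIC_TO_COMPONENT[name])
        for name, value in peaks.items()
        if name in METRIC_TO_COMPONENT and value >= threshold
    ]
    hits.sort(key=lambda hit: hit[0], reverse=True)
    return [component for _, component in hits]


# Hypothetical peaks recorded while refreshing a large analytics dashboard.
dashboard_run = {
    "cpu_util": 0.97,
    "gpu_util": 0.22,
    "memory_used_ratio": 0.93,
    "drive_busy_ratio": 0.41,
}
print(saturated_components(dashboard_run))
```

Running the same function over each test case in the workload matrix shows which components saturate repeatedly, which is usually where budget should go first.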

For teams influenced by TVM-style evidence-based evaluation, the goal should be measurable fitness, not aesthetic branding. Ask whether the system sustains target performance for extended periods, whether thermals remain controlled in installation conditions, whether replacement parts are standardized, and whether integration ports support future peripherals and smart infrastructure needs. These criteria are especially relevant when builds serve broader hospitality ecosystems rather than isolated individual users.

FAQ: evaluating bottleneck risk in computer components

Is the CPU or GPU more important in a fast build?

It depends on the scenario. Gaming, 3D visualization, and AI image workloads often lean on the GPU, while analytics, office multitasking, scripting, and many management tools depend more on the CPU. The right answer comes from workload analysis, not generic rankings.

Can slow storage really bottleneck premium computer components?

Yes. Poor SSD behavior can delay asset loading, exports, cache access, and system responsiveness. Fast processors and GPUs cannot compensate for storage that collapses under sustained writes or high queue depth.

Why do some high-end systems still feel inconsistent?

Inconsistency often comes from thermal throttling, RAM limits, weak motherboard design, low PSU quality, or software mismatch. The components that bottleneck a system are not always the most obvious ones.

Final take: match computer components to operational reality

The most effective way to prevent a fast build from underperforming is to judge computer components in context. Every scenario has a different limiting factor: CPU for mixed productivity, GPU for visual workloads, RAM for multitasking, storage for data-heavy operations, and supporting hardware for uptime and thermal integrity. For technical evaluators, the best decision is rarely the most expensive configuration. It is the one with the fewest hidden constraints across the exact environment where it must operate.

If your team is comparing options, build your shortlist around measured workload fit, sustained behavior, and upgrade flexibility. That approach reduces procurement risk, supports long-term reliability, and ensures your computer components deliver real performance where it actually counts.

Recommended News

![Home Security Systems Miss Risks That Basic Checklists Ignore Home Security Systems Miss Risks That Basic Checklists Ignore]() May 04, 2026Home Security Systems Miss Risks That Basic Checklists IgnoreHome security systems often pass basic checklists while hidden risks remain. Discover scenario-based testing insights to improve reliability, safety, and smarter security decisions.

May 04, 2026Home Security Systems Miss Risks That Basic Checklists IgnoreHome security systems often pass basic checklists while hidden risks remain. Discover scenario-based testing insights to improve reliability, safety, and smarter security decisions.![Monitor Stands Can Fix Neck Strain, but Only if They Fit Monitor Stands Can Fix Neck Strain, but Only if They Fit]() May 04, 2026Monitor Stands Can Fix Neck Strain, but Only if They FitMonitor stands can reduce neck strain only when height, distance, and stability truly fit the user. Learn how to choose the right setup for better posture, comfort, and work performance.

May 04, 2026Monitor Stands Can Fix Neck Strain, but Only if They FitMonitor stands can reduce neck strain only when height, distance, and stability truly fit the user. Learn how to choose the right setup for better posture, comfort, and work performance.![Tablet PCs Still Make Sense in Workflows That Need Mobility Tablet PCs Still Make Sense in Workflows That Need Mobility]() May 04, 2026Tablet PCs Still Make Sense in Workflows That Need MobilityTablet PCs still matter in mobile workflows across tourism and hospitality projects, helping teams inspect faster, capture real-time data, and improve smart system coordination.

May 04, 2026Tablet PCs Still Make Sense in Workflows That Need MobilityTablet PCs still matter in mobile workflows across tourism and hospitality projects, helping teams inspect faster, capture real-time data, and improve smart system coordination.![Which Electronic Gadgets Actually Save Time at Home? Which Electronic Gadgets Actually Save Time at Home?]() May 04, 2026Which Electronic Gadgets Actually Save Time at Home?Electronic gadgets that truly save time at home: discover the best picks for cleaning, cooking, lighting, and daily routines, plus smart tips to avoid gadgets that add more work.

May 04, 2026Which Electronic Gadgets Actually Save Time at Home?Electronic gadgets that truly save time at home: discover the best picks for cleaning, cooking, lighting, and daily routines, plus smart tips to avoid gadgets that add more work.![High-End Audio: What Changes After the First Big Upgrade High-End Audio: What Changes After the First Big Upgrade]() May 04, 2026High-End Audio: What Changes After the First Big UpgradeHigh-end audio becomes real after the first big upgrade. Learn what truly changes—detail, bass control, soundstage, and listening comfort—so you can buy smarter and enjoy every track more.

May 04, 2026High-End Audio: What Changes After the First Big UpgradeHigh-end audio becomes real after the first big upgrade. Learn what truly changes—detail, bass control, soundstage, and listening comfort—so you can buy smarter and enjoy every track more.![Robot Vacuum Cleaners: When Mapping Accuracy Matters Most Robot Vacuum Cleaners: When Mapping Accuracy Matters Most]() May 04, 2026Robot Vacuum Cleaners: When Mapping Accuracy Matters MostRobot vacuum cleaners perform best when mapping accuracy is precise. Learn why reliable navigation, zone control, and consistent coverage matter most in busy hospitality spaces.

May 04, 2026Robot Vacuum Cleaners: When Mapping Accuracy Matters MostRobot vacuum cleaners perform best when mapping accuracy is precise. Learn why reliable navigation, zone control, and consistent coverage matter most in busy hospitality spaces.![Computer Components That Bottleneck a Fast Build Computer Components That Bottleneck a Fast Build]() May 04, 2026Computer Components That Bottleneck a Fast BuildComputer components can quietly bottleneck even a high-end PC. Learn how CPU, GPU, RAM, storage, cooling, and power limits affect real performance and smarter buying decisions.

May 04, 2026Computer Components That Bottleneck a Fast BuildComputer components can quietly bottleneck even a high-end PC. Learn how CPU, GPU, RAM, storage, cooling, and power limits affect real performance and smarter buying decisions.![How an ISO Certified Factory Can Still Miss Delivery Dates How an ISO Certified Factory Can Still Miss Delivery Dates]() May 03, 2026How an ISO Certified Factory Can Still Miss Delivery DatesISO certified factory does not always mean on-time delivery. Learn the hidden causes of delays and how buyers can assess real supplier reliability before critical hospitality projects slip.

May 03, 2026How an ISO Certified Factory Can Still Miss Delivery DatesISO certified factory does not always mean on-time delivery. Learn the hidden causes of delays and how buyers can assess real supplier reliability before critical hospitality projects slip.![Mobile Accessories Trends That Can Shift Margins Fast Mobile Accessories Trends That Can Shift Margins Fast]() May 03, 2026Mobile Accessories Trends That Can Shift Margins FastMobile accessories trends can shift margins fast. Discover which categories offer stronger pricing power, lower procurement risk, and better profit potential in 2025.

May 03, 2026Mobile Accessories Trends That Can Shift Margins FastMobile accessories trends can shift margins fast. Discover which categories offer stronger pricing power, lower procurement risk, and better profit potential in 2025.![Smart Watches With Strong Features but Weak Everyday Use Smart Watches With Strong Features but Weak Everyday Use]() May 03, 2026Smart Watches With Strong Features but Weak Everyday UseSmart watches can look impressive yet fail in daily life. Discover what really matters—battery, comfort, usability, and compatibility—before you buy.

May 03, 2026Smart Watches With Strong Features but Weak Everyday UseSmart watches can look impressive yet fail in daily life. Discover what really matters—battery, comfort, usability, and compatibility—before you buy.![Are IoT Gadgets Getting Smarter or Harder to Maintain? Are IoT Gadgets Getting Smarter or Harder to Maintain?]() May 03, 2026Are IoT Gadgets Getting Smarter or Harder to Maintain?IoT gadgets are smarter than ever—but are they harder to maintain? Explore the real trade-off between convenience, updates, security, and long-term value before you buy.

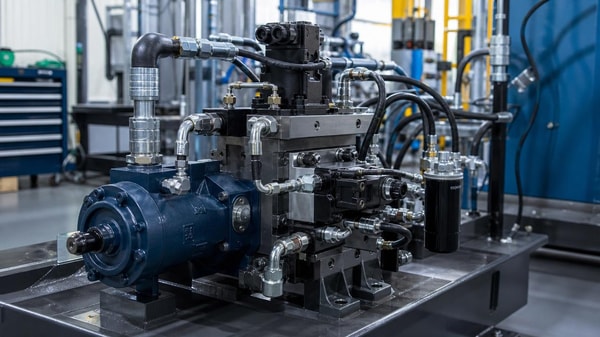

May 03, 2026Are IoT Gadgets Getting Smarter or Harder to Maintain?IoT gadgets are smarter than ever—but are they harder to maintain? Explore the real trade-off between convenience, updates, security, and long-term value before you buy.![Hydraulic Parts Failures That Usually Start Small Hydraulic Parts Failures That Usually Start Small]() May 03, 2026Hydraulic Parts Failures That Usually Start SmallHydraulic parts failures often begin with small leaks, heat, or pressure loss. Learn the early warning signs, smart maintenance checks, and sourcing tips to prevent costly downtime.

May 03, 2026Hydraulic Parts Failures That Usually Start SmallHydraulic parts failures often begin with small leaks, heat, or pressure loss. Learn the early warning signs, smart maintenance checks, and sourcing tips to prevent costly downtime.![Milling Process Problems That Often Show Up Too Late Milling Process Problems That Often Show Up Too Late]() May 03, 2026Milling Process Problems That Often Show Up Too LateMilling process issues often appear only after defects, cost increases, or tool life loss. Learn the early warning signs and practical checks to protect quality, uptime, and output.

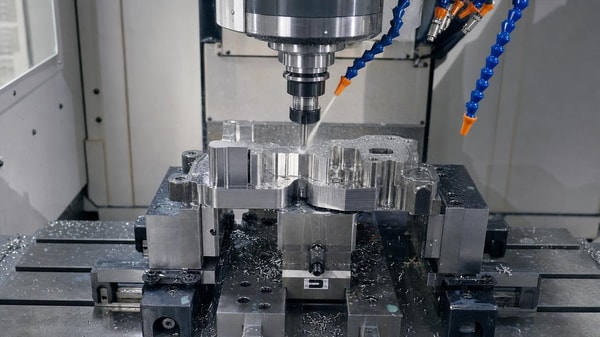

May 03, 2026Milling Process Problems That Often Show Up Too LateMilling process issues often appear only after defects, cost increases, or tool life loss. Learn the early warning signs and practical checks to protect quality, uptime, and output.![Last-mile delivery drones still face one problem roads do not Last-mile delivery drones still face one problem roads do not]() May 02, 2026Last-mile delivery drones still face one problem roads do notLast-mile delivery drones promise faster logistics, but can they match road reliability? Explore the key engineering, battery, wind, and compliance checks before deployment.

May 02, 2026Last-mile delivery drones still face one problem roads do notLast-mile delivery drones promise faster logistics, but can they match road reliability? Explore the key engineering, battery, wind, and compliance checks before deployment.![Interactive whiteboards: when does the upgrade improve teaching flow? Interactive whiteboards: when does the upgrade improve teaching flow?]() May 02, 2026Interactive whiteboards: when does the upgrade improve teaching flow?Interactive whiteboards improve teaching flow when they cut setup time, simplify collaboration, and fit hospitality workflows. Learn when the upgrade truly delivers ROI.

May 02, 2026Interactive whiteboards: when does the upgrade improve teaching flow?Interactive whiteboards improve teaching flow when they cut setup time, simplify collaboration, and fit hospitality workflows. Learn when the upgrade truly delivers ROI.![Educational robots in real classrooms: engagement gains vs setup burden Educational robots in real classrooms: engagement gains vs setup burden]() May 02, 2026Educational robots in real classrooms: engagement gains vs setup burdenEducational robots can boost classroom engagement, but do they justify setup, training, and maintenance demands? Explore a practical guide to compare value, usability, and long-term ROI before adoption.

May 02, 2026Educational robots in real classrooms: engagement gains vs setup burdenEducational robots can boost classroom engagement, but do they justify setup, training, and maintenance demands? Explore a practical guide to compare value, usability, and long-term ROI before adoption.![Hybrid office setups: what actually saves space and cost? Hybrid office setups: what actually saves space and cost?]() May 02, 2026Hybrid office setups: what actually saves space and cost?Hybrid office setups only save space and cost when utilization, leases, and tech align. Discover the checklist decision-makers need to compare models and avoid hidden expenses.

May 02, 2026Hybrid office setups: what actually saves space and cost?Hybrid office setups only save space and cost when utilization, leases, and tech align. Discover the checklist decision-makers need to compare models and avoid hidden expenses.![Organic Fertilizers: Why Field Results Vary So Much Organic Fertilizers: Why Field Results Vary So Much]() May 01, 2026Organic Fertilizers: Why Field Results Vary So MuchOrganic fertilizers can deliver strong soil and crop benefits, but field results vary with moisture, soil biology, timing, and source quality. Discover what drives performance and how to choose smarter.

May 01, 2026Organic Fertilizers: Why Field Results Vary So MuchOrganic fertilizers can deliver strong soil and crop benefits, but field results vary with moisture, soil biology, timing, and source quality. Discover what drives performance and how to choose smarter.![3D Fashion Design: Where It Saves Time and Where It Doesn't 3D Fashion Design: Where It Saves Time and Where It Doesn't]() May 01, 20263D Fashion Design: Where It Saves Time and Where It Doesn't3D fashion design saves time in approvals, sampling, and collaboration—but not every workflow speeds up. Discover where it delivers real ROI and where limits remain.

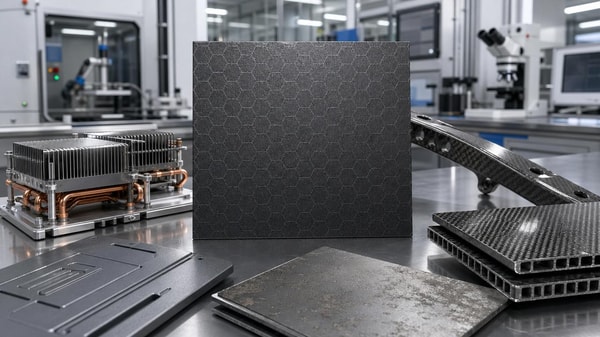

May 01, 20263D Fashion Design: Where It Saves Time and Where It Doesn't3D fashion design saves time in approvals, sampling, and collaboration—but not every workflow speeds up. Discover where it delivers real ROI and where limits remain.![Graphene Applications in Industry That Are Becoming Practical Graphene Applications in Industry That Are Becoming Practical]() May 01, 2026Graphene Applications in Industry That Are Becoming PracticalGraphene applications in industry are becoming practical in thermal management, coatings, composites, and sensors. Explore proven use cases, market signals, and why buyers are paying attention.

May 01, 2026Graphene Applications in Industry That Are Becoming PracticalGraphene applications in industry are becoming practical in thermal management, coatings, composites, and sensors. Explore proven use cases, market signals, and why buyers are paying attention.![Recycled Polyester Fabrics and the Quality Claims to Verify Recycled Polyester Fabrics and the Quality Claims to Verify]() May 01, 2026Recycled Polyester Fabrics and the Quality Claims to VerifyRecycled polyester fabrics: learn which quality claims to verify before approval, from traceability and compliance to durability, fire safety, and lot consistency.

May 01, 2026Recycled Polyester Fabrics and the Quality Claims to VerifyRecycled polyester fabrics: learn which quality claims to verify before approval, from traceability and compliance to durability, fire safety, and lot consistency.![Portable Oxygen Concentrators: What Affects Daily Use Time? Portable Oxygen Concentrators: What Affects Daily Use Time?]() May 01, 2026Portable Oxygen Concentrators: What Affects Daily Use Time?Portable oxygen concentrators: learn what really affects daily use time, from flow settings and battery age to temperature and charging habits, for smarter planning and reliable mobility.

May 01, 2026Portable Oxygen Concentrators: What Affects Daily Use Time?Portable oxygen concentrators: learn what really affects daily use time, from flow settings and battery age to temperature and charging habits, for smarter planning and reliable mobility.![Sustainable Home Decor Claims That Are Easy to Misread Sustainable Home Decor Claims That Are Easy to Misread]() Apr 30, 2026Sustainable Home Decor Claims That Are Easy to MisreadSustainable home decor claims can be misleading. Learn how to verify labels, compare materials and certifications, spot red flags, and make smarter low-impact decor choices.

Apr 30, 2026Sustainable Home Decor Claims That Are Easy to MisreadSustainable home decor claims can be misleading. Learn how to verify labels, compare materials and certifications, spot red flags, and make smarter low-impact decor choices.![Agri-PV Systems Can Raise Land Value, but Site Design Matters Agri-PV Systems Can Raise Land Value, but Site Design Matters]() Apr 30, 2026Agri-PV Systems Can Raise Land Value, but Site Design MattersAgri-PV systems can raise land value when site design supports farming, energy output, and future development. Learn the key factors that drive resilient returns.

Apr 30, 2026Agri-PV Systems Can Raise Land Value, but Site Design MattersAgri-PV systems can raise land value when site design supports farming, energy output, and future development. Learn the key factors that drive resilient returns.

Quarterly Executive Summaries Delivered Directly.

Join 50,000+ industry leaders who receive our proprietary market analysis and policy outlooks before they hit the public library.