
TerraVista Metrics (TVM) Industry News

Which solar farm equipment causes the most downtime?

Dr. Hideo Tanaka (Outdoor Gear Engineering Lead)

May 07, 2026

When uptime defines profitability, understanding which solar farm equipment fails most often is critical for after-sales maintenance teams. From inverters and trackers to combiner boxes and monitoring systems, each component can trigger costly disruptions if overlooked. This article examines the most common downtime sources, why they occur, and how maintenance professionals can reduce failures through smarter diagnostics, preventive service, and data-driven equipment evaluation.

For most utility-scale sites, the short answer is this: inverters usually cause the most impactful downtime, while tracker systems often create the highest volume of repeat field issues. Combiner boxes, DC connectors, SCADA networks, weather sensors, transformers, and protection devices also contribute, but not all failures have the same operational effect. After-sales teams need to distinguish between components that fail often and components that shut down large blocks of generation when they fail.

That distinction matters in real maintenance planning. A loose connector may affect a string or a small section of the array, while an inverter trip can remove an entire block from production. A tracker communication fault may not stop energy output completely, but it can reduce yield for days if misalignment goes unnoticed. The most useful way to assess solar farm equipment is therefore by combining failure frequency, mean time to repair, safety risk, spare-parts availability, and lost megawatt-hours.

What after-sales maintenance teams really need to know first

When maintenance personnel search for which solar farm equipment causes the most downtime, they are usually not looking for a generic parts list. They want to know where to focus inspections, what to keep in stock, which alarms deserve immediate escalation, and which equipment choices will create fewer service calls over the life of the plant.

In practice, the most important questions are straightforward. Which equipment most often causes production loss? Which failures are hardest to diagnose remotely? Which components create repeat truck rolls? Which vendors have the most stable designs and serviceable layouts? And which small issues are being ignored until they become major outages?

Useful guidance for this audience must therefore go beyond theory. It should rank downtime sources by real operational impact, explain typical failure modes, and give field-ready advice on prevention. That matters most for after-sales teams, who are responsible both for restoring performance quickly and for feeding reliability insights back into procurement, commissioning, and warranty management.

Inverters: usually the single biggest source of high-impact downtime

If the question is which solar farm equipment causes the most severe downtime, inverters are usually at the top of the list. Whether central or string-based, they sit at a critical conversion point in the system. When an inverter trips, derates, or goes offline entirely, a large portion of generation can disappear immediately.

Inverters fail for several reasons. Thermal stress is one of the most common. Power electronics operate under heavy load, often in harsh environments with high ambient temperatures, dust, humidity, or insufficient airflow. Fans clog, heat sinks lose efficiency, and internal components such as IGBTs, capacitors, control boards, or power modules degrade over time.

Grid disturbances also create frequent inverter events. Voltage excursions, frequency instability, harmonics, and protection miscoordination can trigger nuisance trips. In some plants, the inverter itself is healthy, but poor settings, unstable reactive power control, or transformer-side issues make it appear to be the problem. This is why alarm review must always be tied to system-level analysis.

Firmware and communications problems are another source of avoidable downtime. After software updates, mismatched configurations, sensor input errors, or networking faults can cause unexplained resets or control issues. Maintenance teams that rely only on alarm codes without checking sequence-of-events data often replace hardware unnecessarily.

From a downtime perspective, inverters are especially costly because failures may require specialist intervention, lockout procedures, OEM support, or replacement of high-value components that are not always stocked locally. Mean time to repair can stretch from hours to weeks if spares or technical approvals are delayed.

For after-sales teams, the best inverter strategy includes thermal inspections, air filter and fan maintenance, torque verification, event-log analysis, infrared scanning of terminations, DC insulation trend monitoring, and pre-positioned critical spare parts. It also helps to classify inverter issues into three groups: grid-driven trips, environmental degradation, and internal component failures. That simple structure speeds diagnosis and reduces repeat visits.
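The three-group classification above can be sketched as a simple keyword lookup. This is only an illustration: the keyword lists and event descriptions are hypothetical, real alarm texts and codes differ by OEM, and a keyword match is no substitute for sequence-of-events review.

```python
# Hypothetical keyword lists; real alarm wording varies by inverter OEM.
GROUP_KEYWORDS = {
    "grid-driven trip": ("grid", "frequency", "anti-islanding"),
    "environmental degradation": ("overtemp", "fan", "filter", "humidity"),
    "internal component failure": ("igbt", "capacitor", "control board", "power module"),
}

def classify_inverter_event(description):
    """Assign an event description to the first group with a matching keyword."""
    text = description.lower()
    for group, keywords in GROUP_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return group
    return "unclassified"

for event in ("Grid overvoltage trip",
              "Cooling fan blocked, overtemp derate",
              "IGBT desaturation fault",
              "Unexpected reset after firmware update"):
    print(f"{event} -> {classify_inverter_event(event)}")
```

Events that land in "unclassified" are a useful prompt to extend the lists or to escalate for manual review.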

Tracker systems: the most common source of recurring field faults

Trackers may not always produce the biggest single outage, but they are often responsible for the largest volume of maintenance interventions across a solar farm. Their failure pattern is different from inverters. Instead of one major electrical conversion unit failing, tracker systems generate distributed mechanical, control, and communication issues across many rows.

Typical tracker problems include motor failures, actuator wear, gearbox damage, controller faults, sensor misalignment, stow failures, communication drops, and wiring degradation. Wind events can also expose weaknesses in structural tolerances, calibration, damping behavior, or row-to-row synchronization.

One reason trackers create so much downtime complexity is that the energy loss is often less visible than a full inverter outage. A row stuck at the wrong angle may continue producing power, just not optimally. If monitoring granularity is poor, the issue may persist for days before anyone notices. That makes trackers a major source of hidden underperformance, which can be even more damaging over time than obvious outages.

Environmental conditions matter greatly. Dust ingress, temperature cycling, corrosion, and water intrusion degrade motors, connectors, and local control enclosures. Sites with uneven terrain or poor drainage may see repeated tracker binding or mechanical stress. Inadequate commissioning also plays a major role, especially when travel limits, stow settings, or communications are not properly validated.

To reduce tracker-related downtime, maintenance teams should focus on row-level monitoring, scheduled mechanical inspections, lubrication where applicable, storm-season prechecks, and root-cause coding that separates communication failures from true mechanical faults. It is also worth identifying whether certain rows, zones, or foundation conditions produce a disproportionate share of issues. Patterns usually exist.
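As a minimal sketch of row-level monitoring, the check below flags rows whose reported angle differs from the commanded angle by more than a tolerance. The field names, the 3-degree tolerance, and the sample readings are assumptions for illustration, not values from any tracker vendor.

```python
from dataclasses import dataclass

@dataclass
class RowReading:
    row_id: str
    target_deg: float  # angle commanded by the tracker controller
    actual_deg: float  # angle reported by the row's position sensor

def misaligned_rows(readings, tolerance_deg=3.0):
    """Return IDs of rows whose actual angle deviates from target beyond the tolerance."""
    return [r.row_id for r in readings
            if abs(r.actual_deg - r.target_deg) > tolerance_deg]

readings = [
    RowReading("R01", 30.0, 29.2),
    RowReading("R02", 30.0, 12.5),  # row stuck at the wrong angle
    RowReading("R03", 30.0, 31.1),
]
print(misaligned_rows(readings))
```

Running a check like this on every polling cycle is one way to surface a stuck row within minutes rather than days.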

Combiner boxes and DC balance-of-system components: small devices, frequent headaches

Combiner boxes rarely attract as much attention as inverters, but they are a common source of downtime and a major source of troubleshooting labor. Fuses blow, surge protection devices degrade, terminals loosen, enclosures leak, and thermal cycling stresses internal connections. In many cases, the problem is not design complexity but cumulative exposure and inconsistent installation quality.

DC connectors, junctions, and cable terminations are particularly important because minor defects can escalate into major faults. Poor crimping, incompatible connector pairings, insulation damage, UV degradation, and moisture ingress may lead to high resistance, arcing, intermittent faults, or ground insulation alarms. These problems are often hard to find because they may not present as a clean failure until operating conditions change.

For after-sales personnel, this category matters because it produces a high number of site hours per megawatt affected. A single fault may only impact one string or one section, but locating it can take time. Without string-level data, technicians may have to isolate circuits manually, inspect multiple enclosures, and verify terminations one by one.

The most effective response is disciplined preventive work. Thermal imaging, fuse health checks, torque audits, enclosure seal inspection, and DC current comparison across strings can catch many issues early. Procurement feedback is equally important. Some solar farm equipment categories look equivalent on paper but differ significantly in enclosure durability, terminal design, and serviceability under field conditions.
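The DC current comparison across strings can be sketched as follows, assuming string-level current readings are available for one combiner box at a single timestamp. The 10% shortfall threshold and the sample values are illustrative assumptions, and the strings are assumed to share orientation and shading conditions.

```python
from statistics import median

def underperforming_strings(currents_a, max_shortfall=0.10):
    """Flag strings whose current is more than max_shortfall below the combiner median."""
    reference = median(currents_a.values())
    if reference <= 0:
        return []  # no meaningful reference, e.g. at night
    return sorted(string_id for string_id, amps in currents_a.items()
                  if (reference - amps) / reference > max_shortfall)

# Simultaneous string currents (amps) for one combiner box; values are made up.
currents = {"S1": 9.1, "S2": 9.0, "S3": 7.4, "S4": 9.2, "S5": 0.0}
print(underperforming_strings(currents))
```

Using the median rather than the mean keeps a single dead string from dragging the reference down and hiding weaker faults.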

Transformers, switchgear, and protection devices: lower frequency, higher consequence

Medium-voltage transformers, switchgear, breakers, relays, and protection systems usually fail less often than trackers or DC components, but when they do fail, the impact can be severe. These assets can take entire blocks offline, require strict safety controls, and involve long replacement lead times.

Common causes include insulation breakdown, overheating, relay misconfiguration, breaker mechanism wear, contamination, and moisture intrusion. In some cases, the root issue comes from poor coordination rather than failed hardware. A protection setting that is too sensitive may cause repeated trips, while a setting that is too permissive may allow damage to develop before intervention.

Because these systems are safety critical, after-sales teams need strong documentation discipline. Event records, relay files, oil analysis data, thermography, and breaker operation counts should be reviewed routinely rather than only after an outage. The cost of delayed detection is high, especially where outages affect contractual delivery obligations or grid compliance.

For field teams, the main lesson is not to ignore “rare” equipment simply because service calls are less frequent. Low-frequency, high-impact failures can dominate annual lost production if they are not managed with predictive maintenance and clear escalation paths.

Monitoring, SCADA, sensors, and communications: not always the root cause, but often the reason downtime lasts longer

Monitoring systems do not usually create the original physical fault, but they often determine how long that fault remains unresolved. Poor SCADA visibility, missing data, bad irradiance sensors, unreliable gateways, or unstable communication networks can turn a short issue into a prolonged outage because the maintenance team cannot see what is really happening.

This category is underestimated. A failed pyranometer may distort performance calculations. A frozen data logger may hide inverter curtailment. A communications drop may prevent tracker alarms from reaching the control center. If the operations team loses confidence in the monitoring data, response time slows and troubleshooting becomes reactive instead of targeted.

Sensor quality and calibration also matter more than many sites admit. Dirty reference cells, poorly mounted weather instruments, and time-sync problems can all produce misleading analysis. That leads to the wrong corrective actions, unnecessary parts replacement, or delayed recognition of genuine equipment degradation.

After-sales teams should treat digital infrastructure as operational equipment, not administrative overhead. Maintain network devices, validate timestamps, test alarm paths, audit data completeness, and compare SCADA values against field measurements regularly. In a modern solar farm, diagnostic quality directly affects downtime duration.
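A data-completeness audit can be as simple as counting how many expected logging slots actually contain a record. The sketch below assumes a fixed logging interval; the function name, the 5-minute interval, and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta

def completeness(timestamps, start, end, interval):
    """Fraction of expected logging slots in [start, end) holding at least one record."""
    expected = (end - start) // interval
    if expected <= 0:
        return 0.0
    filled = {(t - start) // interval for t in timestamps if start <= t < end}
    return len(filled) / expected

start = datetime(2026, 5, 7, 10, 0)
end = start + timedelta(hours=1)
# Records logged at these minute offsets; 20 to 35 are missing and 15 is duplicated.
minutes = [0, 5, 10, 15, 15, 40, 45, 50, 55]
stamps = [start + timedelta(minutes=m) for m in minutes]
print(f"{completeness(stamps, start, end, timedelta(minutes=5)):.0%}")
```

Tracking this ratio per device and per day makes frozen loggers and flaky gateways visible before they hide a real equipment fault.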

How to rank downtime correctly: frequency is not the same as impact

One of the biggest mistakes in reliability discussions is assuming that the most commonly failing equipment is automatically the most important. For maintenance planning, you need at least five dimensions: failure frequency, production impact, repair duration, safety risk, and recurrence probability.

For example, tracker faults may generate many tickets, but inverter failures may create larger instantaneous power loss. Combiner box issues may be frequent and labor intensive, while transformer failures may be rare but operationally catastrophic. A strong after-sales program ranks solar farm equipment by weighted downtime cost, not by anecdotal frustration.

A practical field method is to create a simple reliability matrix. List each equipment category, count failures per quarter, calculate average megawatt-hours lost per event, track mean time to diagnose, track mean time to repair, and note whether the site needed OEM intervention. The result will quickly show which assets are truly driving downtime at your plant.
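The reliability matrix described above can be sketched in a few lines. The event fields mirror the quantities listed in the paragraph; the sample events are invented, and ranking by total megawatt-hours lost is just one possible weighting, not a prescribed method.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Event:
    category: str
    mwh_lost: float
    hours_to_diagnose: float
    hours_to_repair: float
    oem_needed: bool

def reliability_matrix(events):
    """Summarize one quarter of events per equipment category, worst total loss first."""
    grouped = defaultdict(list)
    for event in events:
        grouped[event.category].append(event)
    rows = []
    for category, evs in grouped.items():
        n = len(evs)
        total = sum(e.mwh_lost for e in evs)
        rows.append({
            "category": category,
            "failures": n,
            "avg_mwh_lost": total / n,
            "mean_hours_to_diagnose": sum(e.hours_to_diagnose for e in evs) / n,
            "mean_hours_to_repair": sum(e.hours_to_repair for e in evs) / n,
            "oem_interventions": sum(1 for e in evs if e.oem_needed),
            "total_mwh_lost": total,
        })
    return sorted(rows, key=lambda r: r["total_mwh_lost"], reverse=True)

# Illustrative quarter of events; all numbers are made up.
events = [
    Event("inverter", 120.0, 4.0, 48.0, True),
    Event("inverter", 80.0, 2.0, 24.0, False),
    Event("tracker", 5.0, 6.0, 3.0, False),
    Event("tracker", 5.0, 8.0, 2.0, False),
    Event("tracker", 5.0, 5.0, 4.0, False),
    Event("tracker", 5.0, 7.0, 3.0, False),
    Event("combiner box", 2.0, 10.0, 1.0, False),
    Event("combiner box", 3.0, 12.0, 1.5, False),
    Event("combiner box", 4.0, 9.0, 1.0, False),
]
for row in reliability_matrix(events):
    print(row["category"], row["failures"], row["total_mwh_lost"])
```

In this made-up data the trackers generate the most tickets, yet the inverters dominate lost energy, which is exactly the frequency-versus-impact distinction the matrix is meant to expose.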

This also supports better vendor evaluation. Some products fail infrequently but are difficult to service because of poor access, unclear diagnostics, or weak spare-part support. Others may have minor faults but excellent recoverability. For after-sales organizations, serviceability is part of reliability.

What usually causes repeat downtime: design weakness, environment, or maintenance process gaps?

Most chronic downtime is not random. It usually comes from one of three sources: equipment design limitations, site-specific environmental stress, or maintenance process gaps. The fastest way to improve uptime is to identify which of those three is dominant for each recurring fault type.

If a certain inverter model repeatedly overheats across multiple locations, design or component selection may be the issue. If tracker motor failures cluster in a corrosive coastal environment, environmental protection may be inadequate. If combiner box hotspots keep reappearing after service, the maintenance procedure or installation standard may need revision.

After-sales teams are in a strong position because they see patterns that procurement or EPC teams may miss. The key is to document failures in a structured way. Record exact component, fault mode, weather conditions, operating state, prior repair history, and time to recurrence. Without that level of detail, organizations keep treating symptoms instead of solving root causes.
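One way to enforce that level of detail is a fixed record structure that every work order must fill in. The sketch below is an assumption about how such a record might look; the field names and the example entry are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class FailureRecord:
    component_id: str        # exact component, e.g. a tag or serial number
    fault_mode: str
    weather: str
    operating_state: str
    occurred_on: date
    previous_repair_on: Optional[date] = None  # None if this is the first failure

    def days_to_recurrence(self):
        """Days between the last repair and this failure, or None for a first failure."""
        if self.previous_repair_on is None:
            return None
        return (self.occurred_on - self.previous_repair_on).days

record = FailureRecord(
    component_id="CB-14/terminal-3",
    fault_mode="terminal hotspot",
    weather="clear, 38 C ambient",
    operating_state="full load",
    occurred_on=date(2026, 4, 20),
    previous_repair_on=date(2026, 3, 1),
)
print(record.days_to_recurrence())
```

A short time to recurrence on the same component is a strong hint that the previous repair treated a symptom rather than the cause.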

This is where data-driven evaluation becomes valuable. Benchmarking field performance across sites, climates, and equipment types helps teams move from “this part keeps failing” to “this design underperforms under these conditions.” That kind of evidence supports stronger warranty claims, smarter spare strategies, and better future equipment selection.

Practical ways after-sales maintenance teams can reduce equipment downtime

Start with criticality-based maintenance rather than equal attention for all assets. Inverters, protection systems, and major communications nodes should have tighter inspection intervals because their downtime impact is high. Trackers and DC components need broad, repeatable condition checks because their failure frequency is often higher.

Improve diagnostics before increasing labor. Many sites do not need more technicians as much as they need better fault isolation. String-level monitoring, row-level tracker analytics, thermal imaging, power quality data, and clean alarm logic can reduce troubleshooting time dramatically.

Standardize field response. Use fault trees for top failure categories, define escalation thresholds, and require technicians to capture the same evidence every time. That includes photos, thermograms, event logs, weather conditions, and measurements before and after repair. Standardization shortens repair cycles and improves root-cause analysis.

Strengthen spare-parts strategy. The right inventory is not simply the most expensive components. It should reflect failure probability, replacement lead time, and outage impact. For many plants, this means carrying key inverter boards, fans, fuses, surge protection devices, connectors, communications hardware, and selected tracker components based on actual field history.
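A rough way to combine failure probability, lead time, and outage impact is to estimate the energy at risk while waiting for a replacement. The scoring formula, part list, and numbers below are illustrative assumptions; a real plant would substitute its own field history.

```python
def spare_priority(parts):
    """Rank parts by expected MWh at risk per year if no spare is held on site."""
    scored = [
        (p["name"],
         p["annual_failure_prob"] * p["lead_time_days"] * p["mwh_lost_per_day"])
        for p in parts
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)

# Hypothetical inputs; none of these figures come from field data.
parts = [
    {"name": "combiner fuse", "annual_failure_prob": 0.30,
     "lead_time_days": 5, "mwh_lost_per_day": 0.5},
    {"name": "inverter control board", "annual_failure_prob": 0.05,
     "lead_time_days": 40, "mwh_lost_per_day": 20.0},
    {"name": "tracker motor", "annual_failure_prob": 0.10,
     "lead_time_days": 20, "mwh_lost_per_day": 1.0},
]
for name, score in spare_priority(parts):
    print(f"{name}: {score:.2f} MWh at risk")
```

The score deliberately ignores part cost, so cheap consumables such as fuses would normally be stocked regardless of where they rank.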

Finally, create a feedback loop with procurement and engineering. The purpose of after-sales maintenance is not only to restore operation but also to improve future reliability. When teams share structured failure data, organizations can choose solar farm equipment with better durability, easier maintenance access, stronger enclosure protection, and more dependable OEM support.

Final answer: which solar farm equipment causes the most downtime?

If you need one clear conclusion, it is this: inverters usually cause the most significant production downtime, while tracker systems and DC balance-of-system components often create the most frequent maintenance events. Transformers and protection equipment cause fewer failures, but their consequences can be severe. Monitoring and communication systems may not fail first, yet they often determine how long an outage lasts.

For after-sales maintenance teams, the goal is not simply to know which component fails most often. The real objective is to understand which solar farm equipment creates the greatest combined burden of lost generation, diagnosis time, safety exposure, and repeat intervention. Once you rank equipment that way, maintenance priorities become much clearer.

The most effective teams reduce downtime by combining preventive inspections, better diagnostics, structured failure coding, and evidence-based equipment evaluation. In a market where uptime defines profitability, that approach turns maintenance from reactive repair into a measurable reliability advantage.

Recommended News

![Medical Grade Materials Can Cut Risk Before Assembly Begins Medical Grade Materials Can Cut Risk Before Assembly Begins]() May 07, 2026Medical Grade Materials Can Cut Risk Before Assembly BeginsMedical grade materials help cut risk before assembly by improving durability, traceability, and contamination control. Learn how smarter material choices protect hospitality hardware.

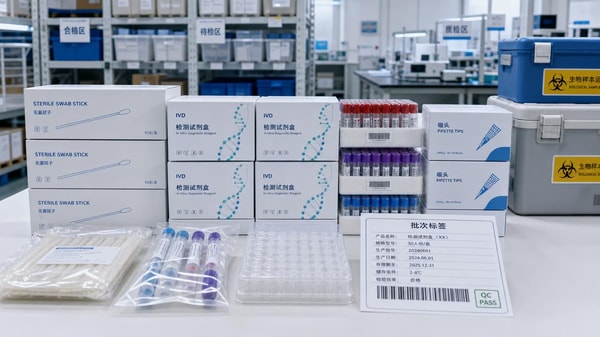

May 07, 2026Medical Grade Materials Can Cut Risk Before Assembly BeginsMedical grade materials help cut risk before assembly by improving durability, traceability, and contamination control. Learn how smarter material choices protect hospitality hardware.![Medical Lab Supplies Shortages Usually Start With One Weak Link Medical Lab Supplies Shortages Usually Start With One Weak Link]() May 07, 2026Medical Lab Supplies Shortages Usually Start With One Weak LinkMedical lab supplies shortages often start with one weak link. Learn how to spot sourcing, quality, and logistics risks early to protect continuity and build a more resilient procurement strategy.

May 07, 2026Medical Lab Supplies Shortages Usually Start With One Weak LinkMedical lab supplies shortages often start with one weak link. Learn how to spot sourcing, quality, and logistics risks early to protect continuity and build a more resilient procurement strategy.![Dining furniture materials that lower return rates over time Dining furniture materials that lower return rates over time]() May 06, 2026Dining furniture materials that lower return rates over timeDining furniture buyers can reduce returns by choosing durable materials with better moisture, stain, and wear resistance. Discover data-driven tips for smarter sourcing.

May 06, 2026Dining furniture materials that lower return rates over timeDining furniture buyers can reduce returns by choosing durable materials with better moisture, stain, and wear resistance. Discover data-driven tips for smarter sourcing.![Which solar farm equipment causes the most downtime? Which solar farm equipment causes the most downtime?]() May 06, 2026Which solar farm equipment causes the most downtime?Solar farm equipment downtime often starts with inverters, trackers, and DC faults. Learn which failures hurt output most and how smarter maintenance cuts outages fast.

May 06, 2026Which solar farm equipment causes the most downtime?Solar farm equipment downtime often starts with inverters, trackers, and DC faults. Learn which failures hurt output most and how smarter maintenance cuts outages fast.![Green electronics suppliers are changing material claims fast Green electronics suppliers are changing material claims fast]() May 05, 2026Green electronics suppliers are changing material claims fastGreen electronics suppliers are shifting claims fast. Learn how to verify durability, compliance, and real project fit in hospitality sourcing with scenario-based benchmarks.

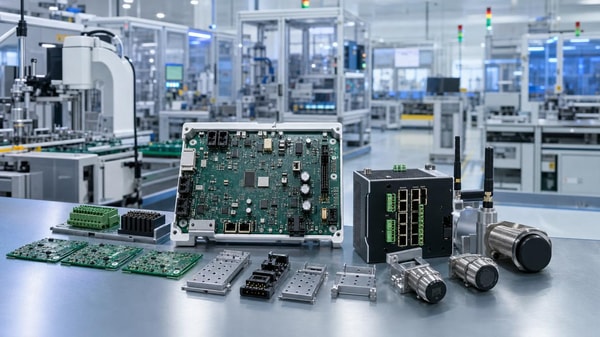

May 05, 2026Green electronics suppliers are changing material claims fastGreen electronics suppliers are shifting claims fast. Learn how to verify durability, compliance, and real project fit in hospitality sourcing with scenario-based benchmarks.![When electronic manufacturing services reduce cost and when they do not When electronic manufacturing services reduce cost and when they do not]() May 05, 2026When electronic manufacturing services reduce cost and when they do notElectronic manufacturing services cut costs only when volume, design stability, and quality control align. Learn where EMS saves money—and where hidden lifecycle costs can erode ROI.

May 05, 2026When electronic manufacturing services reduce cost and when they do notElectronic manufacturing services cut costs only when volume, design stability, and quality control align. Learn where EMS saves money—and where hidden lifecycle costs can erode ROI.![What changes vendor selection in Industrial & Manufacturing now What changes vendor selection in Industrial & Manufacturing now]() May 05, 2026What changes vendor selection in Industrial & Manufacturing nowIndustrial & Manufacturing vendor selection now depends on proof, not price alone. Discover how durability, compliance, integration, and lifecycle value reshape smarter sourcing decisions.

May 05, 2026What changes vendor selection in Industrial & Manufacturing nowIndustrial & Manufacturing vendor selection now depends on proof, not price alone. Discover how durability, compliance, integration, and lifecycle value reshape smarter sourcing decisions.![USB-C Accessories Cause Problems When Specs Look the Same USB-C Accessories Cause Problems When Specs Look the Same]() May 04, 2026USB-C Accessories Cause Problems When Specs Look the SameUSB-C accessories may look identical, but hidden differences in power, data, shielding, and durability can cause costly failures. Learn how to spot risks and improve uptime.

May 04, 2026USB-C Accessories Cause Problems When Specs Look the SameUSB-C accessories may look identical, but hidden differences in power, data, shielding, and durability can cause costly failures. Learn how to spot risks and improve uptime.![Consumer Electronics Wholesale Risks Hidden in Low MOQs Consumer Electronics Wholesale Risks Hidden in Low MOQs]() May 03, 2026Consumer Electronics Wholesale Risks Hidden in Low MOQsConsumer electronics wholesale often looks safer with low MOQs, but hidden risks in quality, compliance, traceability, and supply stability can be costly. Learn what buyers must verify before ordering.

May 03, 2026Consumer Electronics Wholesale Risks Hidden in Low MOQsConsumer electronics wholesale often looks safer with low MOQs, but hidden risks in quality, compliance, traceability, and supply stability can be costly. Learn what buyers must verify before ordering.![How to Compare Bearing Suppliers Beyond Unit Price How to Compare Bearing Suppliers Beyond Unit Price]() May 03, 2026How to Compare Bearing Suppliers Beyond Unit PriceBearing suppliers should be compared on quality, lead times, traceability, support, and total cost—not unit price alone. Learn how to choose lower-risk suppliers with confidence.

May 03, 2026How to Compare Bearing Suppliers Beyond Unit PriceBearing suppliers should be compared on quality, lead times, traceability, support, and total cost—not unit price alone. Learn how to choose lower-risk suppliers with confidence.![Freight forwarding services compared: where service levels really differ Freight forwarding services compared: where service levels really differ]() May 02, 2026Freight forwarding services compared: where service levels really differFreight forwarding services compared: discover where visibility, customs accuracy, exception handling, and project delivery support truly differ to reduce risk and choose smarter.

May 02, 2026Freight forwarding services compared: where service levels really differFreight forwarding services compared: discover where visibility, customs accuracy, exception handling, and project delivery support truly differ to reduce risk and choose smarter.![Sheet metal work tolerances can change assembly costs fast Sheet metal work tolerances can change assembly costs fast]() May 02, 2026Sheet metal work tolerances can change assembly costs fastSheet metal work tolerances can raise assembly costs fast in hospitality projects. Learn how tighter control reduces rework, protects safety, and improves installation reliability.

May 02, 2026Sheet metal work tolerances can change assembly costs fastSheet metal work tolerances can raise assembly costs fast in hospitality projects. Learn how tighter control reduces rework, protects safety, and improves installation reliability.![Custom metal fabrication quotes vary for reasons many teams miss Custom metal fabrication quotes vary for reasons many teams miss]() May 02, 2026Custom metal fabrication quotes vary for reasons many teams missCustom metal fabrication quotes vary for more than labor and material. Learn the hidden cost drivers, compare suppliers smarter, and avoid risky low-price decisions.

May 02, 2026Custom metal fabrication quotes vary for reasons many teams missCustom metal fabrication quotes vary for more than labor and material. Learn the hidden cost drivers, compare suppliers smarter, and avoid risky low-price decisions.![Why office stationery wholesale pricing often looks cheaper than it is Why office stationery wholesale pricing often looks cheaper than it is]() May 02, 2026Why office stationery wholesale pricing often looks cheaper than it isOffice stationery wholesale prices can seem low, but hidden costs often reduce real savings. Learn how to compare true value, avoid waste, and make smarter bulk buying decisions.

May 02, 2026Why office stationery wholesale pricing often looks cheaper than it isOffice stationery wholesale prices can seem low, but hidden costs often reduce real savings. Learn how to compare true value, avoid waste, and make smarter bulk buying decisions.![Activewear OEM Problems That Delay New Product Launches Activewear OEM Problems That Delay New Product Launches]() May 01, 2026Activewear OEM Problems That Delay New Product LaunchesActivewear OEM delays often stem from unclear tech packs, fabric risks, slow sampling, and weak capacity planning. Learn how to spot supplier red flags early and launch on time.

May 01, 2026Activewear OEM Problems That Delay New Product LaunchesActivewear OEM delays often stem from unclear tech packs, fabric risks, slow sampling, and weak capacity planning. Learn how to spot supplier red flags early and launch on time.![What Changes Lead Times in Wholesale Fashion Apparel? What Changes Lead Times in Wholesale Fashion Apparel?]() May 01, 2026What Changes Lead Times in Wholesale Fashion Apparel?Wholesale fashion apparel lead times change with sourcing, capacity, QC, and shipping. Learn the key risks and smart strategies to protect margins and deliver on time.

May 01, 2026What Changes Lead Times in Wholesale Fashion Apparel?Wholesale fashion apparel lead times change with sourcing, capacity, QC, and shipping. Learn the key risks and smart strategies to protect margins and deliver on time.![Smart Home Devices Wholesale: Which Products Move Reorders Fast Smart Home Devices Wholesale: Which Products Move Reorders Fast]() Apr 30, 2026Smart Home Devices Wholesale: Which Products Move Reorders FastSmart home devices wholesale: discover which products drive fast reorders in hotels and resorts, from smart locks to HVAC controls, with practical tips to cut risk and buy smarter.

Apr 30, 2026Smart Home Devices Wholesale: Which Products Move Reorders FastSmart home devices wholesale: discover which products drive fast reorders in hotels and resorts, from smart locks to HVAC controls, with practical tips to cut risk and buy smarter.![Outdoor Garden Supplies That Cause the Most Return Issues Outdoor Garden Supplies That Cause the Most Return Issues]() Apr 30, 2026Outdoor Garden Supplies That Cause the Most Return IssuesOutdoor garden supplies with the highest return issues often fail under weather, assembly, or safety demands. Learn how smarter QC checks reduce risk, costs, and supplier mistakes.

Apr 30, 2026Outdoor Garden Supplies That Cause the Most Return IssuesOutdoor garden supplies with the highest return issues often fail under weather, assembly, or safety demands. Learn how smarter QC checks reduce risk, costs, and supplier mistakes.![Why Smart Home Devices Wholesale Margins Vary So Much Why Smart Home Devices Wholesale Margins Vary So Much]() Apr 30, 2026Why Smart Home Devices Wholesale Margins Vary So MuchSmart home devices wholesale margins vary due to compliance, integration, software, and service risk. Learn which factors protect profit and help distributors choose scalable, reliable product lines.

Apr 30, 2026Why Smart Home Devices Wholesale Margins Vary So MuchSmart home devices wholesale margins vary due to compliance, integration, software, and service risk. Learn which factors protect profit and help distributors choose scalable, reliable product lines.![How to Source Smart Hotel Automation Without Overbuying How to Source Smart Hotel Automation Without Overbuying]() Apr 30, 2026How to Source Smart Hotel Automation Without OverbuyingSmart hotel automation guide: reduce system integration cost, compare hotel IoT solutions, refine smart hotel design, and align smart hotel management with sustainable tourism solutions.

Apr 30, 2026How to Source Smart Hotel Automation Without OverbuyingSmart hotel automation guide: reduce system integration cost, compare hotel IoT solutions, refine smart hotel design, and align smart hotel management with sustainable tourism solutions.![What Suppliers Should Prove on Hotel IoT Solutions What Suppliers Should Prove on Hotel IoT Solutions]() Apr 30, 2026What Suppliers Should Prove on Hotel IoT SolutionsHotel IoT solutions buyers: learn how suppliers should prove system integration cost, smart hotel design readiness, smart hotel management performance, and sustainable tourism standards before you shortlist.

Apr 30, 2026What Suppliers Should Prove on Hotel IoT SolutionsHotel IoT solutions buyers: learn how suppliers should prove system integration cost, smart hotel design readiness, smart hotel management performance, and sustainable tourism standards before you shortlist.![Finding a hotel furniture manufacturer that lasts Finding a hotel furniture manufacturer that lasts]() Apr 30, 2026Finding a hotel furniture manufacturer that lastsFind a hotel furniture manufacturer that lasts using benchmarking tools and benchmarking data. Our expert benchmarking analysis ensures long-term durability for sustainable tourism development.

Apr 30, 2026Finding a hotel furniture manufacturer that lastsFind a hotel furniture manufacturer that lasts using benchmarking tools and benchmarking data. Our expert benchmarking analysis ensures long-term durability for sustainable tourism development.![Why choosing a local hotel furniture manufacturer pays off Why choosing a local hotel furniture manufacturer pays off]() Apr 26, 2026Why choosing a local hotel furniture manufacturer pays offA local hotel furniture manufacturer streamlines your benchmarking process. Use advanced benchmarking software and tools for precise benchmarking analysis to drive sustainable tourism development.

Apr 26, 2026Why choosing a local hotel furniture manufacturer pays offA local hotel furniture manufacturer streamlines your benchmarking process. Use advanced benchmarking software and tools for precise benchmarking analysis to drive sustainable tourism development.![Hospitality Furniture OEM or ODM? Where Costs Really Split Hospitality Furniture OEM or ODM? Where Costs Really Split]() Apr 21, 2026Hospitality Furniture OEM or ODM? Where Costs Really SplitHospitality furniture OEM or ODM? Learn where costs really split across design, lead time, compliance, and lifecycle value—plus benchmarks for modular hotel, glamping, and smart hospitality sourcing.

Apr 21, 2026Hospitality Furniture OEM or ODM? Where Costs Really SplitHospitality furniture OEM or ODM? Learn where costs really split across design, lead time, compliance, and lifecycle value—plus benchmarks for modular hotel, glamping, and smart hospitality sourcing.

Quarterly Executive Summaries Delivered Directly.

Join 50,000+ industry leaders who receive our proprietary market analysis and policy outlooks before they hit the public library.