How Clean Does Benchmarking Data Need to Be?

Sarah Jenkins (Tourism Logistics Analyst)

Apr 27, 2026

In tourism infrastructure procurement, clean benchmarking data is the foundation of credible analysis, reliable supplier comparison, and actionable decisions. Whether a team relies on benchmarking software or a full benchmarking solution, it needs a process that filters noise and validates performance, so that every benchmarking report can be trusted.

For information researchers, procurement managers, business evaluators, and channel partners, the real question is not whether data should be clean, but how clean it must be before it can support a purchasing decision worth hundreds of thousands or even millions in lifecycle value. In tourism and hospitality infrastructure, a small error in thermal performance, load endurance, network latency, or maintenance assumptions can distort an entire supplier comparison.

That is why benchmarking in this sector must go beyond polished brochures and headline claims. Developers comparing prefab glamping cabins, hotel operators assessing smart IoT systems, and distributors reviewing amusement hardware need raw metrics that are consistent, traceable, and usable across different sourcing scenarios. TerraVista Metrics (TVM) addresses this need by turning fragmented factory data into structured benchmarking inputs that reduce ambiguity and improve procurement confidence.

What “Clean” Benchmarking Data Actually Means in Tourism Infrastructure

Clean benchmarking data does not mean perfect data with zero variation. In infrastructure procurement, that standard is unrealistic. What buyers actually need is data that is controlled enough to support a fair benchmarking comparison, with known test conditions, documented units, and limited noise. In most B2B evaluation workflows, an acceptable variance band is often within 3%–8% for repeatable lab measurements, while field measurements may allow a wider range depending on environmental exposure.
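As a rough illustration of the variance band described above, the sketch below checks whether repeated measurements stay inside a chosen band. Treating the "band" as (max - min) / mean is an assumption made for this example, as are the sample readings and the 25% field allowance; a real test protocol may define repeatability differently.

```python
from statistics import mean

def relative_spread(values):
    """Return (max - min) / mean, a simple stand-in for a variance band."""
    if len(values) < 2:
        raise ValueError("need at least two repeated measurements")
    avg = mean(values)
    if avg == 0:
        raise ValueError("mean is zero; relative spread is undefined")
    return (max(values) - min(values)) / abs(avg)

def within_band(values, max_spread=0.08):
    """True when repeated measurements stay inside the chosen band."""
    return relative_spread(values) <= max_spread

# Hypothetical repeated readings of wall thermal transmittance (W/m²K).
lab_runs = [0.40, 0.41, 0.39, 0.40]
field_runs = [0.38, 0.45, 0.41, 0.36]

print(within_band(lab_runs))                     # lab data against the 8% upper bound
print(within_band(field_runs))                   # field data against the same bound
print(within_band(field_runs, max_spread=0.25))  # field data against a wider, assumed band
```

Keeping the band as a parameter lets a team apply a tight limit to lab data and a wider one to field data without changing the check itself.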

For example, if a prefab hospitality unit is advertised as having a wall thermal transmittance of 0.35–0.45 W/m²K, the result is only useful if the insulation build-up, ambient test temperature, and moisture conditions are disclosed. The same applies to hotel IoT benchmarking. A quoted throughput of 800 Mbps means little if it was measured under a 5-device load, while the target deployment will require stable performance across 50–200 concurrent endpoints.

Data is clean when it preserves comparability. That means supplier A and supplier B are being measured with the same sample basis, the same benchmark interval, and the same reporting format. If one amusement hardware supplier reports fatigue resistance after 10,000 cycles and another after 100,000 cycles, the benchmarking report may look complete, but the benchmarking system behind it is not aligned enough for decision use.
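The comparability test in the fatigue example can be sketched as a field-by-field comparison of the test basis behind two supplier figures. The `MeasurementBasis` structure, its field names, and the `template-v1` label are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass, fields

@dataclass(frozen=True)
class MeasurementBasis:
    metric: str
    unit: str
    sample_basis: str    # e.g. "production batch"
    interval: int        # benchmark interval, here the number of load cycles
    report_format: str

def basis_mismatches(a, b):
    """Return the names of the fields on which two test bases differ."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]

supplier_a = MeasurementBasis("fatigue resistance", "cycles", "production batch", 10_000, "template-v1")
supplier_b = MeasurementBasis("fatigue resistance", "cycles", "production batch", 100_000, "template-v1")

print(basis_mismatches(supplier_a, supplier_b))  # ['interval']
```

An empty result would mean the two figures share a basis and can be compared directly; any listed field is a reason to re-test or re-request data before ranking.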

TVM’s role in this environment is not to erase all variability, but to identify which variability matters and which should be filtered out. In practice, a clean benchmarking process should separate core engineering performance, operational stability, and carbon-related metrics into defined categories so procurement teams can compare like with like.

Core attributes of clean data

- Consistent units, such as kWh/m²/year, dB, Mbps, or cycle count.

- Defined test conditions, including temperature, humidity, load, and duration.

- Sample traceability, such as prototype, pilot batch, or mass-production batch.

- Known error range, ideally expressed as a tolerance band or confidence interval.
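The four attributes above can be treated as a completeness check on each submitted record. The following is a minimal sketch; the dictionary keys and the sample record are assumptions chosen for the example, not a prescribed schema.

```python
# Hypothetical record keys mapped to the four core attributes listed above.
REQUIRED_ATTRIBUTES = {
    "unit": "consistent unit",
    "test_conditions": "defined test conditions",
    "sample_source": "sample traceability",
    "tolerance": "known error range",
}

def missing_attributes(record):
    """Return the labels of core attributes that are absent or empty."""
    return [
        label
        for key, label in REQUIRED_ATTRIBUTES.items()
        if record.get(key) in (None, "", {}, [])
    ]

record = {
    "metric": "acoustic insulation",
    "value": 42,
    "unit": "dB",
    "test_conditions": {"temperature_c": 23, "humidity_pct": 50},
    "sample_source": "pilot batch",
    # no "tolerance" entry: the error range was not disclosed
}

print(missing_attributes(record))  # ['known error range']
```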

Why “good enough” beats “over-cleaned”

Some teams delay sourcing because they want every variable cleaned to laboratory perfection. That can slow a project by 2–6 weeks without materially improving the final decision. For most tourism infrastructure categories, the goal is decision-grade cleanliness, not academic purity. If the benchmark can reliably rank suppliers, expose risk, and support contract terms, it is already highly valuable.

Why Incomplete or Noisy Data Creates Procurement Risk

Noisy benchmarking data often looks detailed on the surface. It may contain dozens of specifications, multiple PDFs, and attractive test charts. Yet if the testing basis shifts from one supplier to another, the benchmarking analysis can drive the wrong shortlist. In tourism projects, that risk is serious because procurement decisions affect capex, operating cost, guest experience, and compliance outcomes at the same time.

Consider a resort developer selecting between two modular cabin systems. If one supplier provides thermal data from a closed lab at 23°C and another uses open-site winter data at 5°C, the benchmarking comparison becomes misleading. The cleaner-looking report may not be the more reliable one. This is especially important for projects in climate-sensitive destinations where a 10%–15% deviation in insulation performance can reshape HVAC sizing and annual energy cost projections.
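To see why a deviation of this size matters, consider steady-state heat loss through a wall, Q = U × A × ΔT. The sketch below uses an assumed wall area and temperature difference and ignores ventilation, thermal bridging, and solar gains, so it indicates direction and scale only.

```python
def transmission_heat_loss_w(u_value, area_m2, delta_t_k):
    """Steady-state conduction through an envelope element: Q = U * A * dT, in watts."""
    return u_value * area_m2 * delta_t_k

AREA_M2 = 60.0     # assumed wall area of one cabin
DELTA_T_K = 20.0   # assumed indoor/outdoor temperature difference

declared = transmission_heat_loss_w(0.40, AREA_M2, DELTA_T_K)
degraded = transmission_heat_loss_w(0.40 * 1.15, AREA_M2, DELTA_T_K)  # U-value 15% worse
increase_pct = (degraded - declared) / declared * 100

print(f"declared: {declared:.0f} W, degraded: {degraded:.0f} W, increase: {increase_pct:.0f}%")
```

Because heat loss scales linearly with the U-value in this simplified model, a 15% error in the declared figure carries straight through to the wall's share of the heating load.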

The same problem appears in hotel technology procurement. A benchmarking software dashboard may show uptime, latency, and integration speed, but unless the benchmarking process controls device count, network congestion, and API call volume, the resulting benchmark may overstate system readiness. In real operations, even a 50 ms increase in response time can affect guest-facing automation if multiple subsystems are linked.

For distributors and agents, poor data quality also increases channel risk. It becomes harder to defend a product in front of local buyers when the source documentation lacks repeatable metrics. Clean data is not only a procurement tool; it is also a commercial tool that supports resale credibility and reduces post-sale disputes.

Common consequences of weak benchmarking inputs

The table below shows how data quality issues typically translate into downstream business problems in tourism and hospitality sourcing.

| Data issue | Typical impact | Procurement consequence |
| --- | --- | --- |
| Mixed test methods across suppliers | False performance ranking | Wrong shortlist or re-tender after technical review |
| Missing operating environment data | Poor site-fit prediction | Higher retrofit cost during installation stage |
| Prototype data presented as production data | Overestimated durability or throughput | Warranty disputes within 6–18 months |
| No tolerance or error disclosure | Low trust in benchmark output | Decision delay and extra verification cycle |

The pattern is straightforward: once poor input quality enters the benchmarking system, downstream decisions become slower, more expensive, and harder to defend internally. A cleaner dataset shortens technical clarification rounds and improves contract negotiation because the performance basis is already aligned.

The hidden cost of “almost comparable” data

Many procurement teams do not fail because data is absent. They fail because the data is 80% comparable and the last 20% is ignored. That final gap often contains the real risk: lifecycle maintenance intervals, carbon content assumptions, spare-part lead time, or actual fatigue thresholds under tourist-grade usage intensity.

How to Define the Right Cleanliness Threshold for Different Benchmarking Uses

Not every sourcing decision requires the same level of data cleaning. A market scan for early supplier discovery can tolerate broader ranges, while a final procurement decision requires tighter control. The key is to match the cleanliness threshold to the business stage. In practical terms, most organizations move through 3 levels: screening-grade, decision-grade, and contract-grade benchmarking.

At the screening stage, buyers may compare 8–15 suppliers. Here, clean benchmarking data only needs to be good enough to remove clear mismatches. Typical requirements include standard units, a common product scope, and a basic note on operating conditions. At the decision stage, the shortlist may shrink to 2–4 suppliers, and the benchmark must support direct technical comparison under aligned test logic. At the contract stage, the data must be specific enough to become part of service-level terms, acceptance criteria, or warranty discussions.

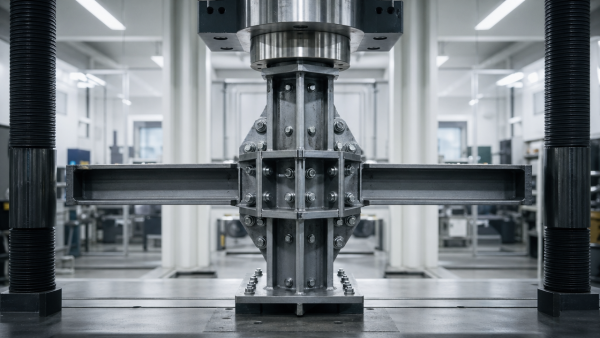

In tourism infrastructure, the threshold also changes by product category. Modular structures need stronger material, thermal, and moisture metrics. Smart hotel systems need interoperability, throughput, uptime, and cybersecurity-related testing records. Amusement hardware needs fatigue resistance, inspection frequency guidance, and operational load assumptions that reflect guest usage peaks.

A useful rule is this: the higher the switching cost after installation, the cleaner the data must be. If replacing the system would disrupt revenue operations for 7–30 days, a stronger benchmark is justified before contract signature.

Recommended cleanliness thresholds by use case

The following framework helps teams decide how much cleaning effort is necessary before using a benchmarking report for action.

| Benchmarking use | Minimum data cleanliness | Practical requirement |
| --- | --- | --- |
| Initial supplier screening | Moderate | Aligned units, scope consistency, at least 3 core metrics |
| Technical shortlist comparison | High | Same test basis, defined tolerance, sample traceability, environmental notes |
| Contract and acceptance support | Very high | Repeatable data, acceptance thresholds, verification method, reporting cadence |

This staged approach prevents over-investment in cleaning during early research while ensuring that final procurement decisions rely on robust, decision-grade evidence. It is especially effective when procurement, engineering, and finance teams all need to sign off on the same benchmarking analysis.
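The staged thresholds can be expressed as a lookup that reports what is still missing for a given use. This sketch assumes the requirements are cumulative, meaning each stage inherits the previous one; the framework above does not state this explicitly, and the requirement labels are illustrative.

```python
# Requirement labels are illustrative; stages are assumed to be cumulative.
SCREENING = {"aligned_units", "scope_consistency"}
DECISION = SCREENING | {
    "same_test_basis", "defined_tolerance", "sample_traceability", "environmental_notes",
}
CONTRACT = DECISION | {
    "repeatable_data", "acceptance_thresholds", "verification_method", "reporting_cadence",
}
STAGES = {"screening": SCREENING, "decision": DECISION, "contract": CONTRACT}
MIN_CORE_METRICS = 3

def unmet_requirements(stage, evidence, core_metric_count):
    """Return the requirements still missing before the data suits the given stage."""
    missing = sorted(STAGES[stage] - set(evidence))
    if core_metric_count < MIN_CORE_METRICS:
        missing.append("at_least_3_core_metrics")
    return missing

evidence = {"aligned_units", "scope_consistency", "same_test_basis", "sample_traceability"}

print(unmet_requirements("screening", evidence, core_metric_count=4))  # []
print(unmet_requirements("decision", evidence, core_metric_count=4))
# ['defined_tolerance', 'environmental_notes']
```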

A 4-point filter for deciding readiness

- Can the same metric be compared across all shortlisted suppliers?

- Are test conditions disclosed clearly enough for review?

- Is the likely error band acceptable for the investment size?

- Can the result be translated into acceptance criteria after purchase?

Building a Benchmarking Process That Filters Noise Without Losing Useful Signals

A strong benchmarking process is not just a data collection exercise. It is a filtering system. In tourism and hospitality procurement, valuable signals often arrive mixed with sales language, non-standard factory forms, prototype-only test results, and region-specific assumptions. The challenge is to remove noise without deleting operationally useful variation.

TVM’s benchmarking logic is useful here because it treats engineering evidence as a layered structure. First comes normalization: units, methods, and sample basis are aligned. Second comes validation: outliers are tested against known ranges, such as compression strength, thermal transfer, response time, or fatigue life. Third comes applicability: the benchmark is interpreted against the destination’s climate, occupancy profile, and operating intensity.

This matters because not all “outliers” are bad. A cabin with a higher-than-average insulation value may reflect a genuine material advantage, not a reporting error. A smart hotel network with lower peak throughput may still be the better option if latency under 100-device conditions stays below 30 ms. Clean benchmarking data should therefore preserve true differentiation while rejecting inconsistent measurement logic.
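The three layers can be sketched for a single network throughput claim as follows. The unit table, the plausibility window, and the function name are assumptions made for illustration, not TVM's actual implementation. The point of the sketch is that an unusual value with full test conditions is flagged for review rather than deleted, while a value with no disclosed conditions is rejected.

```python
TO_MBPS = {"kbps": 0.001, "Mbps": 1.0, "Gbps": 1000.0}
EXPECTED_MBPS = (50.0, 5000.0)  # assumed plausibility window for this metric

def assess(value, unit, conditions, latency_ms_at_load, latency_limit_ms=30.0):
    """Run one throughput claim through normalization, validation, and applicability."""
    # Layer 1, normalization: bring every submission to the same unit.
    mbps = value * TO_MBPS[unit]
    # Layer 2, validation: missing conditions mean the measurement logic cannot
    # be checked, so the claim is rejected; an out-of-range value with full
    # conditions is only flagged, because it may be a genuine advantage.
    if not conditions:
        return mbps, "reject: test conditions not disclosed"
    low, high = EXPECTED_MBPS
    status = "accept" if low <= mbps <= high else "review: outside expected range"
    # Layer 3, applicability: judge against the site requirement, not the headline.
    if latency_ms_at_load > latency_limit_ms:
        status += "; fails site latency requirement"
    return mbps, status

print(assess(2, "Gbps", {"devices": 100}, latency_ms_at_load=41.0))
print(assess(600, "Mbps", {"devices": 100}, latency_ms_at_load=22.0))
print(assess(8, "Gbps", {"devices": 100}, latency_ms_at_load=12.0))
print(assess(900, "Mbps", {}, latency_ms_at_load=18.0))
```

In this example the 600 Mbps system passes while the 2 Gbps system fails on latency, which mirrors the case described above where lower peak throughput is still the better fit.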

For procurement teams, the process should be documented in 5 practical steps so the resulting benchmarking report can be reviewed, shared, and defended across departments.

A 5-step benchmarking workflow for tourism supply decisions

1. Define the use case: screening, selection, or contract support.

2. Lock the metric set: usually 4–8 critical indicators per category.

3. Normalize supplier submissions into one reporting template.

4. Flag missing conditions, unit conflicts, and improbable values.

5. Translate benchmark outputs into procurement actions and risk notes.
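The workflow above can be sketched end to end on a small scale. The metric set is cut to two indicators for brevity, and the plausible ranges, supplier names, sample values, and action wording are all assumptions for the example.

```python
# Locked metric set: expected unit plus an assumed plausible range per metric.
METRIC_SET = {
    "u_value": ("W/m²K", 0.1, 2.0),
    "acoustic_insulation": ("dB", 20, 70),
}

def flag_submission(supplier, submission):
    """Map one supplier submission onto the template and collect flags."""
    flags = []
    for metric, (unit, low, high) in METRIC_SET.items():
        entry = submission.get(metric)
        if entry is None:
            flags.append(f"{supplier}: {metric} missing")
            continue
        if entry.get("unit") != unit:
            flags.append(f"{supplier}: {metric} unit conflict")
        if not entry.get("conditions"):
            flags.append(f"{supplier}: {metric} test conditions missing")
        if not (low <= entry["value"] <= high):
            flags.append(f"{supplier}: {metric} improbable value {entry['value']}")
    return flags

def procurement_action(flags, use_case):
    """Translate flags into a next action for the chosen use case."""
    if not flags:
        return "proceed"
    if use_case == "screening":
        return "proceed with risk notes"
    return "request clarification"

submissions = {
    "Supplier A": {
        "u_value": {"value": 0.38, "unit": "W/m²K", "conditions": "23 °C lab"},
        "acoustic_insulation": {"value": 45, "unit": "dB", "conditions": "lab"},
    },
    "Supplier B": {
        "u_value": {"value": 0.04, "unit": "W/m²K", "conditions": ""},
    },
}

for name, submission in submissions.items():
    flags = flag_submission(name, submission)
    print(name, "->", procurement_action(flags, use_case="selection"), flags)
```

The same flags lead to different actions depending on the use case: a screening exercise can carry them forward as risk notes, while selection or contract support would normally pause for clarification.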

Metrics that often deserve priority

For prefab hospitality assets, start with thermal efficiency, moisture resistance, acoustic insulation, and transport-installation constraints. For smart hotel systems, prioritize network throughput, device concurrency, integration latency, uptime record, and maintenance burden. For leisure hardware, focus on fatigue cycles, material wear rate, inspection intervals, and environmental resistance under coastal, humid, or high-UV conditions.

A benchmarking software platform can make this workflow faster, but software alone does not guarantee clean benchmarking. The inputs, definitions, and review logic still determine whether the final benchmark is trustworthy.

What Buyers, Evaluators, and Distributors Should Check Before Trusting a Benchmarking Report

A benchmarking report should help users make decisions, not merely confirm assumptions. Before relying on one, stakeholders should review whether the report answers three practical questions: what was measured, under which conditions, and how the result affects project risk. If any of those points is unclear, the benchmark may still be informative, but it is not yet decision-safe.

For procurement managers, the strongest reports connect raw metrics to commercial implications. A throughput range should indicate likely device load behavior. A fatigue metric should indicate inspection frequency. A carbon-related material score should indicate whether additional documentation may be needed for project compliance packages. This link between engineering data and contract relevance is where many generic benchmarking solutions fall short.

For distributors and agents, the priority is transferability. Can the benchmark support resale discussions in multiple regions? Can it survive technical due diligence from local partners? A clean, structured report with clear assumptions often reduces pre-sale friction and shortens negotiation cycles by 1–3 rounds because fewer clarifications are needed.

For business evaluators, one additional check is critical: was the benchmark built from representative production data or from best-case sample data? A decision based on pilot-unit performance can inflate the projected value of a supply relationship and distort risk pricing.

Buyer-side verification checklist

The table below can be used as a fast review tool before a report is circulated for approval or included in a sourcing file.

| Checkpoint | What to look for | Why it matters |
| --- | --- | --- |
| Metric definition | Same unit, same scope, same reference condition | Prevents false comparison between suppliers |
| Sample source | Prototype, pilot run, or production batch clearly stated | Improves confidence in real delivery performance |
| Tolerance disclosure | Error range, repeatability band, or test limitation noted | Helps assess whether results are contract-usable |
| Site applicability | Climate, occupancy, and operating assumptions included | Reduces risk of mismatch after installation |

If a report passes these checks, it is much more likely to support a reliable benchmarking comparison and downstream commercial negotiation. If it fails two or more checkpoints, further cleaning or independent review is usually justified.
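The checklist and its two-failure rule can be sketched as a small scoring function. The checkpoint keys are illustrative, and the verdict for exactly one failed checkpoint is an assumption, since the guidance above only covers a full pass and two or more failures.

```python
CHECKPOINTS = (
    "metric_definition",
    "sample_source",
    "tolerance_disclosure",
    "site_applicability",
)

def review_report(results):
    """Return a verdict and the list of failed checkpoints for one report."""
    failed = [c for c in CHECKPOINTS if not results.get(c, False)]
    if not failed:
        verdict = "ready for circulation"
    elif len(failed) >= 2:
        verdict = "further cleaning or independent review"
    else:
        verdict = "usable with a noted gap"  # assumed handling of a single failure
    return verdict, failed

report = {
    "metric_definition": True,
    "sample_source": True,
    "tolerance_disclosure": False,
    "site_applicability": False,
}

print(review_report(report))
# ('further cleaning or independent review', ['tolerance_disclosure', 'site_applicability'])
```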

FAQ: common buyer questions

How clean does data need to be for supplier shortlisting?

For shortlisting, moderate cleanliness is often enough. Buyers usually need 3–5 aligned core metrics, a consistent reporting basis, and clear exclusions. If the goal is to reduce a list from 10 suppliers to 3, the benchmark does not need contract-grade detail, but it must still prevent obvious apples-to-oranges comparisons.

What is the most common benchmarking mistake in tourism infrastructure?

The most common mistake is comparing headline numbers without matching the underlying conditions. Thermal, durability, and network performance metrics are especially vulnerable to this problem because small methodological differences can create large commercial misunderstandings.

Can benchmarking software solve poor input quality by itself?

No. Software can standardize templates, automate checks, and visualize outliers, but it cannot fully repair weak source logic. A reliable benchmarking system still depends on disciplined data collection, metric definitions, and expert review.

Turning Clean Benchmarking Data Into Better Procurement Decisions

Clean data becomes valuable only when it changes decisions for the better. In tourism infrastructure, that usually means selecting suppliers with a stronger fit for climate, guest load, compliance needs, and operational model, not merely the lowest quoted price. A better benchmark reveals whole-life value, implementation risk, and integration feasibility in one view.

For project developers, this improves specification writing and tender structure. For operators, it strengthens acceptance criteria and maintenance planning. For distributors, it creates a clearer technical story for local markets. In each case, the benchmark works best when the data is clean enough to support action, but not so over-processed that important engineering differences disappear.

TVM’s value in this process lies in converting manufacturing-side complexity into standardized, decision-ready whitepapers and comparative frameworks. That is particularly useful when sourcing across borders, where naming conventions, factory formats, and marketing language often differ more than the actual engineering performance. By creating a structural filter for metrics, TVM helps buyers compare durable facts instead of polished claims.

If your team is evaluating prefab glamping units, smart hotel infrastructure, or tourism hardware with demanding performance expectations, the right question is not whether the dataset is immaculate. The right question is whether it is clean enough to support a confident, traceable, and commercially sound decision. When the answer is yes, benchmarking becomes a true procurement advantage rather than an administrative exercise.

To review your current benchmarking process, compare suppliers on a stronger technical basis, or obtain a more decision-ready benchmarking report, contact TerraVista Metrics to discuss your project requirements, request a tailored evaluation framework, or explore broader benchmarking solutions for the tourism and hospitality supply chain.
