How to Use Tourism Benchmarking to Compare Destinations

Author: Sarah Jenkins (Tourism Logistics Analyst)

Date: Apr 27, 2026

Tourism benchmarking helps destination planners, operators, and investors compare performance with greater clarity. From sustainability indicators and infrastructure readiness to guest experience and technology integration, a data-based approach reveals where one destination outperforms another. This article explains how to use tourism benchmarking to evaluate destinations more accurately, reduce decision risk, and support smarter planning across the tourism value chain.

For B2B users across tourism development, hospitality operations, procurement, engineering review, and quality control, destination comparison is no longer just about visitor numbers or marketing image. Decisions now depend on measurable variables such as energy intensity, occupancy resilience, transport accessibility, smart system readiness, maintenance complexity, and compliance risk.

That is where tourism benchmarking becomes useful. It creates a structured method for comparing destinations with consistent criteria, allowing project managers, technical evaluators, and commercial teams to identify strengths, weaknesses, and investment gaps before land is acquired, infrastructure is deployed, or supplier contracts are signed.

In practice, benchmarking is most valuable when it combines demand indicators with physical infrastructure metrics. This is especially relevant in a market where prefab tourism assets, hotel automation, IoT connectivity, and sustainability performance increasingly shape long-term destination competitiveness.

What tourism benchmarking means in operational and investment terms

Tourism benchmarking is the process of comparing one destination against a defined peer group using standardized indicators. These indicators usually cover 4 core layers: market demand, visitor experience, infrastructure capacity, and sustainability performance. For B2B decision-makers, the goal is not academic ranking. It is to reduce uncertainty in planning, procurement, and asset deployment.

A useful benchmark should compare destinations of similar scale, seasonality, and product type. Comparing a remote eco-resort cluster to a capital-city hotel district often produces distorted conclusions. A better method is to compare 3 to 7 peer destinations that share similar climate, access conditions, guest profile, and infrastructure maturity.

For example, a glamping destination may benchmark thermal insulation performance in prefab cabins, wastewater treatment uptime, and average shuttle transfer time. A smart urban destination may focus more on hotel network throughput, check-in automation rate, public transport integration, and average response time for digital guest services.

Teams using benchmarking should also separate outcome metrics from enabling metrics. Occupancy, guest spend, and review score are outcomes. Energy efficiency, room system interoperability, mobility access, and maintenance intervals are enabling conditions. Strong tourism benchmarking looks at both, because outcomes often lag behind infrastructure quality by 6 to 24 months.

Why the method matters across the tourism supply chain

Destination developers use benchmarking to test feasibility before committing capital. Operators use it to improve service delivery and reduce downtime. Procurement teams use it to evaluate whether supplied tourism hardware can meet local performance requirements. Safety and quality control teams use it to verify whether a destination can sustain target throughput without excessive wear, failure, or compliance exposure.

In this sense, tourism benchmarking is not limited to tourism boards. It supports site operators, hotel groups, amusement asset buyers, and distributors that need to compare technical performance across competing locations. That is particularly relevant when smart hospitality systems, modular construction, and carbon targets influence purchasing decisions.

Key benchmarking dimensions

- Demand strength: arrivals, occupancy range, average length of stay, and shoulder-season recovery.

- Infrastructure readiness: roads, utilities, broadband stability, waste handling, and emergency access.

- Guest experience delivery: waiting time, service speed, review consistency, and digital touchpoint performance.

- Sustainability performance: energy use, water intensity, carbon reporting readiness, and material durability.

- Commercial resilience: pricing power, supplier access, maintenance cost, and capex efficiency over 3 to 5 years.

How to choose the right tourism benchmarking indicators

The biggest mistake in tourism benchmarking is using too many indicators with too little relevance. A practical destination comparison model often works best with 12 to 20 indicators. Fewer than 10 may miss important risk signals. More than 25 usually creates noise, slows decision cycles, and makes cross-functional review harder for commercial and technical teams.

Indicator selection should reflect the destination type and project stage. Early-stage investors may prioritize access, land servicing, seasonality, and demand consistency. Later-stage operators may care more about asset maintenance frequency, thermal performance, occupancy conversion, and guest digital engagement. Procurement directors may focus on interoperability, material fatigue, and lifecycle cost over a 5-year or 10-year horizon.

A balanced scorecard should include both quantitative and qualitative data, but the scoring logic must remain clear. For instance, a destination with 92% network uptime during peak periods is easier to compare than one described only as having “strong digital infrastructure.” Measurable definitions improve consistency across internal reviews.

For tourism infrastructure projects, the most useful benchmark indicators often include operating thresholds. These might include transfer time under 45 minutes, utility outage below 2 hours per month, guest complaint resolution within 24 hours, or cabin thermal deviation within a defined indoor comfort band during seasonal extremes.
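As a rough illustration, the operating thresholds above can be written down as data and checked against site readings. This is a minimal sketch, not a prescribed tool: the `Threshold` class, the indicator names, and the sample readings are all hypothetical. The thermal comfort band is left out because the text does not give numbers for it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    indicator: str
    limit: float
    unit: str
    inclusive: bool  # True for "within X", False for "under X" or "below X"

    def is_met(self, value: float) -> bool:
        return value <= self.limit if self.inclusive else value < self.limit


# Limits mirror the examples in the text; indicator names are illustrative.
THRESHOLDS = [
    Threshold("transfer_time", 45, "minutes", inclusive=False),
    Threshold("utility_outage", 2, "hours per month", inclusive=False),
    Threshold("complaint_resolution", 24, "hours", inclusive=True),
]


def check_site(observed: dict[str, float]) -> dict[str, bool]:
    """Return pass/fail per indicator for one destination."""
    return {t.indicator: t.is_met(observed[t.indicator]) for t in THRESHOLDS}


# Hypothetical readings for one site, not real data.
site = {"transfer_time": 38, "utility_outage": 2.5, "complaint_resolution": 24}
print(check_site(site))
```

With these sample readings the site passes on transfer time and complaint resolution but fails on utility outage, which is the kind of single weak point a pass/fail view makes visible.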

Suggested indicator framework by evaluation objective

The table below shows how different teams can align tourism benchmarking metrics with real business goals. It is especially useful when one destination must be compared across technical, commercial, and operational perspectives rather than by marketing visibility alone.

| Evaluation objective | Recommended indicators | Typical threshold or range |
| --- | --- | --- |
| Investment feasibility | Occupancy seasonality, access time, utility readiness, average daily rate resilience | Peak-to-low occupancy gap below 35%; primary access under 90 minutes |
| Operational readiness | Staffing availability, system uptime, maintenance interval, service response time | Network uptime above 98%; issue response within 24 hours |
| Sustainability review | Energy intensity, water use per guest night, waste sorting capability, carbon data traceability | Quarterly reporting available; measurable reduction targets over 12 months |
| Procurement compatibility | System integration, thermal performance, hardware durability, replacement lead time | Spare part lead time within 2 to 6 weeks; interoperable with existing BMS or PMS |

This framework shows that tourism benchmarking works best when indicators are tied to decision use. The same destination may score well for demand growth yet poorly for integration complexity or sustainability reporting maturity. Without segmented evaluation, teams risk approving a site that is commercially attractive but operationally costly.
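Two of the thresholds in the table are simple to compute from operating records. The sketch below assumes that "peak-to-low occupancy gap" means the difference in percentage points between the highest and lowest monthly occupancy, which is one plausible reading rather than a definition given here. The function names and the sample figures are hypothetical.

```python
def occupancy_gap(monthly_occupancy_pct: list[float]) -> float:
    """Peak-to-low gap in percentage points (assumed definition)."""
    return max(monthly_occupancy_pct) - min(monthly_occupancy_pct)


def uptime_pct(downtime_hours: float, period_hours: float) -> float:
    """Share of the period during which the network was available."""
    return 100 * (period_hours - downtime_hours) / period_hours


# Hypothetical monthly occupancy (%) for one destination, January to December.
occupancy = [41, 44, 52, 60, 71, 83, 88, 86, 74, 61, 48, 43]
gap = occupancy_gap(occupancy)
print(gap, gap < 35)  # gap in points, and whether it is below the 35% limit

# Hypothetical record: 10 hours of downtime in a 30-day month (720 hours).
uptime = uptime_pct(10, 720)
print(round(uptime, 2), uptime > 98)
```

For scale, a 98% uptime target leaves 14.4 hours of downtime in a 720-hour month, so the sample site clears it, while its 47-point occupancy gap does not meet the feasibility limit.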

Selection rules for stronger indicator design

- Use indicators that can be measured at regular intervals such as monthly, quarterly, or annually.

- Prefer metrics linked to action, such as maintenance cycle or transfer time, rather than broad image perception alone.

- Set scoring bands in advance, for example 1 to 5 or 0 to 100, to reduce debate after data collection.

- Document the unit of comparison, such as per guest night, per room, per hectare, or per month.
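One way to fix a scoring band in advance is to agree on a worst and a best anchor for each indicator and map readings linearly onto 0 to 100. The sketch below assumes that approach; the `to_score` helper and the anchor values are hypothetical and would need to be set by the review team for each indicator and unit.

```python
def to_score(value: float, worst: float, best: float) -> float:
    """Map a raw reading onto 0-100, where `worst` scores 0 and `best` scores 100.

    For lower-is-better metrics, pass a `worst` that is larger than `best`.
    Readings outside the anchors are clamped to the ends of the band.
    """
    if worst == best:
        raise ValueError("worst and best anchors must differ")
    fraction = (value - worst) / (best - worst)
    return round(100 * min(1.0, max(0.0, fraction)), 1)


# Transfer time in minutes, lower is better (hypothetical anchors: 90 worst, 30 best).
transfer = to_score(45, worst=90, best=30)

# Network uptime in percent, higher is better (hypothetical anchors: 95 worst, 100 best).
uptime = to_score(98, worst=95, best=100)

print(transfer, uptime)
```

Because the anchors are recorded before data collection, two reviewers scoring the same reading should arrive at the same number, which is the point of setting bands up front.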

Building a destination comparison model that goes beyond surface rankings

A strong tourism benchmarking model should not treat every variable equally. Weighting matters. If a project is focused on remote eco-lodging, thermal efficiency, water autonomy, and access reliability may deserve a combined weight of 40% to 50%. In contrast, a city hotel benchmark may assign more weight to guest flow automation, public transport reach, and network capacity.

The best comparison models also distinguish between fixed constraints and improvable weaknesses. Fixed constraints include geography, climate exposure, or airport distance. Improvable weaknesses include poor digital integration, inefficient layouts, or low-performing prefab envelopes. This distinction helps decision-makers avoid rejecting a viable destination for issues that can be corrected within 3 to 12 months.

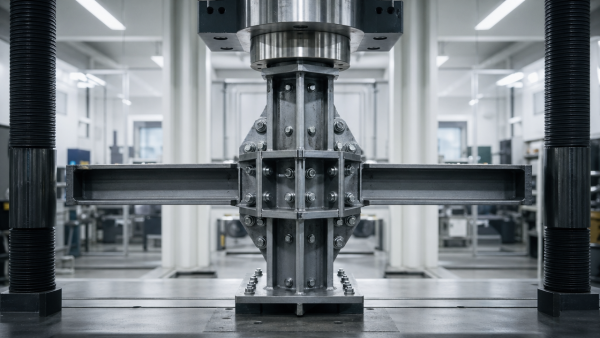

For infrastructure-heavy tourism projects, technical benchmark data can reveal hidden cost drivers. A site with lower room rates may still be more expensive over time if cabins lose thermal efficiency, if IoT systems require frequent resets, or if amusement hardware shows faster material fatigue under local humidity or load conditions.

This is where independent engineering-style benchmarking adds value. Instead of relying on supplier brochures, teams can compare raw metrics such as insulation performance, throughput stability, power consumption variation, and fatigue resistance under defined operating conditions. These measures support better procurement and better destination ranking at the same time.

Example scoring structure for destination benchmarking

The following table illustrates a simplified comparison model. Teams can adjust the weights depending on project type, but the structure helps connect tourism benchmarking with actual capex, operations, and user experience decisions.

| Category | Weight | Example metrics |
| --- | --- | --- |
| Market demand and resilience | 25% | Occupancy spread, repeat visitation, length of stay, shoulder-season demand |
| Infrastructure and access | 25% | Road quality, utility reliability, emergency access, broadband uptime |
| Guest experience and smart systems | 20% | Check-in time, app usability, issue resolution, room system interoperability |
| Sustainability and lifecycle efficiency | 30% | Energy intensity, carbon traceability, water use, maintenance interval, material durability |

The key takeaway is that tourism benchmarking becomes more reliable when a destination is evaluated as a system rather than a single tourism product. A destination with higher demand but weak infrastructure may score lower overall than a smaller location with stronger operational fundamentals and lower lifecycle risk.
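The weights in the table can be combined into a single composite score with a weighted average. The sketch below uses the four category weights from the table; the category keys, the `composite_score` helper, and the two sets of category scores are hypothetical and only illustrate the arithmetic.

```python
# Category weights in percent, taken from the example table.
WEIGHTS_PCT = {
    "market_demand": 25,
    "infrastructure_access": 25,
    "guest_experience": 20,
    "sustainability_lifecycle": 30,
}


def composite_score(category_scores: dict[str, float]) -> float:
    """Weighted average of 0-100 category scores."""
    if sum(WEIGHTS_PCT.values()) != 100:
        raise ValueError("weights must sum to 100%")
    missing = set(WEIGHTS_PCT) - set(category_scores)
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    return sum(WEIGHTS_PCT[c] * category_scores[c] for c in WEIGHTS_PCT) / 100


# Hypothetical category scores, not real data.
high_demand_site = {
    "market_demand": 90,
    "infrastructure_access": 50,
    "guest_experience": 60,
    "sustainability_lifecycle": 45,
}
smaller_site = {
    "market_demand": 65,
    "infrastructure_access": 80,
    "guest_experience": 70,
    "sustainability_lifecycle": 80,
}

print(composite_score(high_demand_site))  # 60.5
print(composite_score(smaller_site))      # 74.25
```

In this made-up comparison the high-demand site scores 60.5 and the smaller site 74.25, matching the pattern described above: strong demand does not offset weak infrastructure and lifecycle scores once the weights are applied.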

Common modeling mistakes to avoid

- Using visitor volume as the dominant metric while ignoring margin, uptime, and maintenance exposure.

- Comparing destinations from different climate bands without adjusting thermal or utility assumptions.

- Ignoring replacement cycles for prefab, smart hotel, or amusement assets over a 5 to 8 year period.

- Scoring “technology” as a vague category instead of measuring actual throughput, compatibility, and failure rate.

How to apply tourism benchmarking during procurement, planning, and site operations

Tourism benchmarking delivers the highest value when it is embedded in project workflows instead of treated as a one-time report. For example, a developer can use benchmark findings in the pre-feasibility phase, a procurement team can use them to screen equipment suppliers, and operators can use the same framework to track post-launch performance every quarter.

In the planning stage, benchmark data helps confirm whether the destination concept fits local conditions. If the location has extreme diurnal temperature swings, prefab tourism units should be evaluated for insulation, condensation control, and serviceability. If the site expects high guest density, the destination comparison should include network load behavior, queue processing, and public facility throughput.

During procurement, the benchmark should be translated into technical specifications. Instead of asking for “high-performance cabins” or “smart hotel systems,” buyers can define testable requirements such as stable internal comfort range, lower maintenance frequency, interoperability with current software, or spare-parts availability within a given lead time.

Once operations begin, benchmarking supports continuous improvement. If one destination resolves service issues within 8 hours while another takes 36 hours, the gap becomes visible. If one site consumes noticeably more energy per occupied room, asset configuration or control logic can be reviewed before costs escalate across the season.
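The energy comparison above depends on normalizing consumption by occupied room nights rather than comparing raw meter totals. A minimal sketch, assuming monthly kWh and occupied room-night counts are available for each site; the function names and all figures are hypothetical.

```python
def energy_per_occupied_room(kwh: float, occupied_room_nights: int) -> float:
    """Energy intensity in kWh per occupied room night."""
    if occupied_room_nights <= 0:
        raise ValueError("occupied_room_nights must be positive")
    return kwh / occupied_room_nights


def relative_gap(value: float, reference: float) -> float:
    """How far `value` sits above (+) or below (-) `reference`, as a fraction."""
    return (value - reference) / reference


# Hypothetical monthly figures for two sites, not real data.
site_a = energy_per_occupied_room(42_000, 1_400)
site_b = energy_per_occupied_room(54_000, 1_500)

gap = relative_gap(site_b, site_a)
print(site_a, site_b, f"{gap:.0%}")
```

Here site B uses 36 kWh per occupied room night against 30 for site A, a 20% gap, even though site B also hosted more guests. That is the signal that would prompt a review of asset configuration or control logic.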

A practical 5-step implementation process

- Define the decision goal: investment screening, supplier selection, operational improvement, or portfolio comparison.

- Select 3 to 7 peer destinations with similar climate, scale, access profile, and customer mix.

- Choose 12 to 20 indicators and assign weights based on project type and risk sensitivity.

- Collect data from site audits, operating records, engineering tests, guest feedback, and supplier documentation.

- Review the results every 3, 6, or 12 months and update capex, procurement, or operational priorities accordingly.
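The sizing rules in steps 2 and 3 can be checked before any data is collected. The sketch below is one possible sanity check: the `validate_plan` helper is hypothetical, and the requirement that weights sum to exactly 100% is an assumption added for illustration rather than a rule stated in the steps.

```python
def validate_plan(peers: list[str], weights_pct: dict[str, int]) -> list[str]:
    """Return a list of problems with a benchmarking plan; empty means it passes."""
    problems = []
    if not 3 <= len(peers) <= 7:
        problems.append(f"expected 3 to 7 peer destinations, got {len(peers)}")
    if not 12 <= len(weights_pct) <= 20:
        problems.append(f"expected 12 to 20 indicators, got {len(weights_pct)}")
    total = sum(weights_pct.values())
    if total != 100:  # assumption: indicator weights are percentages summing to 100
        problems.append(f"indicator weights sum to {total}%, expected 100%")
    return problems


# Hypothetical plans with placeholder names.
thin_plan = validate_plan(
    peers=["Site A", "Site B"],
    weights_pct={f"indicator_{i}": 10 for i in range(1, 11)},
)
sound_plan = validate_plan(
    peers=["Site A", "Site B", "Site C", "Site D"],
    weights_pct={f"indicator_{i}": 5 for i in range(1, 21)},
)

print(thin_plan)
print(sound_plan)
```

The first plan is flagged for too few peers and too few indicators, while the second returns an empty list and can move on to data collection.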

Where independent technical benchmarking fits

For destinations investing in built assets and smart systems, independent testing is often the missing layer. TerraVista Metrics (TVM) addresses this need by translating physical and system-level performance into usable benchmark data. That may include thermal efficiency in prefab glamping units, throughput stability in hotel IoT infrastructure, or material fatigue patterns in amusement hardware.

This type of benchmarking helps global buyers compare destinations not just by tourism image, but by build quality, compliance readiness, and operational reliability. It is particularly useful when Chinese-manufactured components or modular systems are being evaluated for cross-border tourism projects and decision-makers need standardized, engineering-oriented documentation.

Frequent benchmarking risks, buyer questions, and better decision rules

Even well-intended tourism benchmarking can fail if the data set is inconsistent or if teams confuse correlation with causation. A destination may show high guest ratings because of novelty or favorable weather, not because its infrastructure model is more robust. That is why benchmark interpretation should combine operating evidence, technical review, and context about seasonality and market positioning.

Another common issue is relying on supplier claims without performance validation. In tourism hardware procurement, visual design can obscure long-term weaknesses. A glamping unit may photograph well but underperform in thermal retention. A hotel automation layer may seem advanced yet create integration bottlenecks with legacy PMS or BMS systems. Benchmarking should test practical fit, not just brochure features.

For decision-makers, the best rule is simple: benchmark what affects cost, uptime, compliance, and guest experience over time. If a metric cannot influence design choice, procurement language, or operating practice, it should carry less weight. This keeps the comparison process usable for teams managing real deadlines and capital constraints.

Below are frequent questions that arise when organizations start using tourism benchmarking in destination comparison, supplier evaluation, and infrastructure planning.

How often should destinations be benchmarked?

For active projects, a 6 to 12 month cycle is common. Fast-changing assets such as smart systems, utility performance, and digital guest-service channels may require quarterly review. More stable variables such as road access or structural envelope performance may be updated annually unless a major capex change occurs.
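Review cadences like these are easy to lose track of across many indicators, so it can help to compute the next review date per indicator group. A minimal sketch using only the standard library; the group names, the `CADENCE_MONTHS` mapping, and the helper functions are hypothetical, with quarterly and annual intervals taken from the answer above.

```python
import calendar
from datetime import date

# Review interval in months per indicator group (group names are illustrative).
CADENCE_MONTHS = {
    "smart_systems": 3,
    "utility_performance": 3,
    "digital_guest_services": 3,
    "road_access": 12,
    "structural_envelope": 12,
}


def add_months(start: date, months: int) -> date:
    """Shift a date forward by whole months, clamping to the month's last day."""
    index = start.year * 12 + (start.month - 1) + months
    year, month_zero_based = divmod(index, 12)
    month = month_zero_based + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def next_review(group: str, last_review: date) -> date:
    return add_months(last_review, CADENCE_MONTHS[group])


print(next_review("smart_systems", date(2026, 1, 31)))
print(next_review("road_access", date(2026, 4, 27)))
```

A major capex change would override this schedule, so in practice the last review date would be reset whenever such a change occurs.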

Which metrics matter most during supplier or site selection?

Focus first on 4 areas: lifecycle cost, technical durability, integration compatibility, and compliance readiness. For example, replacement lead time of 2 to 6 weeks, network uptime above 98%, and documented maintenance intervals are often more decision-useful than broad claims of premium quality.

Can small or emerging destinations use tourism benchmarking effectively?

Yes. Smaller destinations often benefit the most because benchmarking reveals where limited capex should be prioritized. Instead of investing across 10 weak areas, a site may discover that improving 3 items (access reliability, thermal comfort, and digital service speed) delivers the strongest gain in guest satisfaction and operating control.

What should teams document for auditability?

Keep a record of indicator definitions, data collection dates, scoring rules, peer-group logic, and any assumptions used for climate, occupancy, or usage intensity. This is especially important for engineering-led tourism benchmarking, where a small change in operating condition can materially affect results.
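The items listed above map naturally onto a small structured record that can be stored alongside each benchmarking run. The sketch below is one possible layout, not a required schema: the `BenchmarkRecord` class, its field names, and every sample value are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class BenchmarkRecord:
    indicator_definitions: dict[str, str]  # indicator name -> definition and unit
    collection_dates: dict[str, str]       # indicator name -> ISO date of collection
    scoring_rules: str
    peer_group_logic: str
    assumptions: dict[str, str] = field(default_factory=dict)

    def to_json(self) -> str:
        """Serialize with sorted keys so stored records compare cleanly over time."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder values for illustration only.
record = BenchmarkRecord(
    indicator_definitions={"transfer_time": "Primary access transfer time, minutes"},
    collection_dates={"transfer_time": "2026-04-27"},
    scoring_rules="Linear 0-100 between agreed worst and best anchors",
    peer_group_logic="Peers share climate band, scale, and access profile",
    assumptions={"climate": "Design conditions as recorded at the site audit"},
)

print(record.to_json())
```

Keeping assumptions as a separate field makes it easier to see, on a later review, whether a changed result reflects a real performance shift or only a changed operating condition.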

Tourism benchmarking is most effective when it turns destination comparison into a repeatable decision system. By combining market indicators with infrastructure quality, smart system performance, sustainability metrics, and lifecycle risk, organizations can compare destinations with more precision and less bias.

For developers, operators, procurement teams, and technical reviewers, the value is clear: better site selection, stronger supplier screening, more defensible capex decisions, and a clearer path to operational stability. If you need destination benchmarking grounded in engineering metrics rather than surface-level claims, TerraVista Metrics can help translate tourism infrastructure performance into usable decision data.

Contact us to discuss a custom benchmarking framework, request a technical comparison model, or explore how standardized whitepapers can support your next tourism, hospitality, or destination infrastructure project.

