
Benchmarking Report Red Flags to Watch

TerraVista Metrics (TVM) Industry News

Jun 09, 2026

A benchmarking report can reveal critical insights or hide costly risks. For buyers, analysts, and distributors in tourism infrastructure, spotting red flags in benchmarking data, comparisons, and analysis is essential to sound decisions. From benchmarking software claims to the performance of system integration services, this guide helps you evaluate results more precisely and supports sustainable tourism development through a more reliable benchmarking process.

What Buyers Actually Need to Know First

If you are reviewing a benchmarking report to support procurement, supplier screening, or partnership decisions, the key question is not whether the document looks technical. The real question is whether the report gives you decision-grade evidence. A polished report can still hide weak testing methods, selective data, unrealistic operating conditions, or unsupported claims about durability, energy efficiency, interoperability, and lifecycle value.

For tourism and hospitality projects, this matters even more because procurement decisions often involve high capital cost, long deployment cycles, and cross-system dependencies. A glamping structure, hotel automation platform, smart IoT network, or amusement hardware component may perform well in a lab snapshot but fail under actual destination conditions. That is why the most important skill is learning how to identify benchmarking report red flags before those risks become budget overruns, technical disputes, or operational downtime.

In practice, most readers researching this topic want clear answers to three questions: can the benchmarking data be trusted, is the comparison fair, and does the analysis match real procurement use cases? This article prioritizes those questions.

Red Flag #1: The Report Never Clearly Explains the Testing Method

A benchmarking process is only as credible as its methodology. If the report does not clearly explain how tests were designed, what conditions were controlled, how samples were selected, and what metrics were measured, treat the findings with caution.

Common warning signs include vague phrases such as “industry-leading performance,” “verified efficiency,” or “best-in-class reliability” without a test protocol behind them. In a trustworthy benchmarking analysis, you should be able to see:

- Test objectives and scope

- Sample size and sample source

- Environmental conditions and load assumptions

- Testing duration

- Measurement instruments or software used

- Calculation logic for scores or rankings

For example, if a report compares thermal performance in prefab tourism units, but does not specify outside temperature range, insulation configuration, occupancy assumptions, or heating and cooling loads, the benchmarking comparison may be too weak to support procurement decisions.

When methodology is missing, your risk is simple: you cannot tell whether the performance claim is real, repeatable, or relevant.
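The methodology checklist above can be turned into a quick completeness check. The following is a minimal sketch, assuming you record each report's methodology in a simple dictionary. The field names and the example report are hypothetical and only mirror the six items listed earlier in this section.

```python
# Hypothetical field names that mirror the six checklist items in this section.
REQUIRED_METHOD_FIELDS = (
    "objectives_and_scope",
    "sample_size_and_source",
    "environment_and_load",
    "test_duration",
    "instruments_or_software",
    "scoring_logic",
)


def missing_method_fields(report):
    """Return the methodology fields that are absent or left blank."""
    missing = []
    for field in REQUIRED_METHOD_FIELDS:
        value = report.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


# Illustrative report summary, not real data.
example_report = {
    "objectives_and_scope": "Thermal performance of prefab tourism units",
    "sample_size_and_source": "3 units, supplied by vendor",
    "environment_and_load": "",  # blank: outside temperature and loads not stated
    "test_duration": "72 hours",
}

gaps = missing_method_fields(example_report)
print(gaps)
# ['environment_and_load', 'instruments_or_software', 'scoring_logic']
```

A non-empty result does not prove the report is wrong. It only lists the questions to send back to the report's author before relying on the findings.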

Red Flag #2: The Comparison Group Is Chosen to Make One Product Look Better

One of the most common benchmarking report red flags is biased peer selection. A report may compare a supplier’s product only against outdated models, lower-grade alternatives, or products designed for different operating conditions. This creates the appearance of superiority without proving real market competitiveness.

Procurement teams and distributors should ask:

- Are the compared products in the same category and performance tier?

- Are they intended for similar hospitality or tourism applications?

- Were all products tested under identical conditions?

- Were key commercial alternatives excluded without explanation?

In tourism infrastructure, fair benchmarking comparison is especially important because products often sit in very different deployment environments. A smart hotel control system designed for luxury urban properties should not be compared casually with a simplified controller for low-complexity sites. Likewise, high-end amusement hardware should not be benchmarked only against entry-level alternatives.

If the comparison set feels too convenient, it probably is.

Red Flag #3: Results Look Impressive, but the Metrics Do Not Match Real Buying Priorities

Some reports are technically dense but commercially weak. They highlight metrics that sound advanced while ignoring the indicators that matter most to ownership cost, guest experience, compliance, and integration risk.

This is where many information researchers and business evaluators lose time. The report may focus on abstract benchmark scores while leaving out practical indicators such as:

- Energy consumption under realistic occupancy

- Mean time between failures (MTBF)

- Maintenance frequency and spare-part dependency

- Interoperability with existing hotel systems

- Carbon compliance and material traceability

- Installation complexity and commissioning time

- Total cost of ownership over the asset lifecycle

A useful benchmarking analysis should help you connect technical performance to operational and financial impact. If it cannot show how benchmark results affect uptime, utility cost, staffing needs, compliance exposure, or guest service quality, the report may be informative but not decision-ready.
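To illustrate how a technical result connects to financial impact, here is a rough sketch of a total cost of ownership comparison. It assumes a deliberately simplified, undiscounted model (upfront cost plus flat annual energy and maintenance costs). The function name and all figures are illustrative, not taken from any real report.

```python
def total_cost_of_ownership(purchase, installation, annual_energy,
                            annual_maintenance, years):
    """Undiscounted lifecycle cost: upfront spend plus recurring costs."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return purchase + installation + years * (annual_energy + annual_maintenance)


# Illustrative figures only: option B costs more upfront but less to run.
option_a = total_cost_of_ownership(
    purchase=40_000, installation=5_000,
    annual_energy=6_000, annual_maintenance=2_000, years=10,
)
option_b = total_cost_of_ownership(
    purchase=55_000, installation=5_000,
    annual_energy=3_500, annual_maintenance=1_000, years=10,
)

print(option_a)  # 125000
print(option_b)  # 105000
```

In this made-up case the option with the higher purchase price is cheaper over ten years. A report that gives only benchmark scores, with no energy or maintenance figures, leaves you unable to run even this simple comparison.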

Red Flag #4: Testing Conditions Are Too Controlled to Reflect Real-World Use

Many products perform well in optimized demonstrations and poorly in live hospitality environments. This is a major concern for site operators, project developers, and channel partners who need systems that remain stable across climate variation, high occupancy, and continuous usage.

Be careful when a benchmarking report is based only on ideal laboratory conditions with no attempt to simulate field reality. For tourism applications, real-world conditions may include humidity swings, coastal corrosion, unstable power quality, variable occupancy, intermittent connectivity, dust exposure, transport stress, or multi-system data traffic.

For instance, benchmarking software used to validate an IoT network may show excellent throughput in isolation. But if the report does not test packet loss, latency, or device stability under high device density across an active hotel property, the result may overstate performance.

The more complex the deployment environment, the more valuable field-relevant benchmarking becomes.
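The IoT example above can be made concrete with a small sketch that compares latency in isolation against latency under high device density. It assumes you can obtain raw latency samples and packet counts from the report's author. The sample values are invented, and the nearest-rank percentile is just one common convention among several.

```python
import math


def nearest_rank_percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def packet_loss_rate(sent, received):
    """Fraction of packets that were sent but never received."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return (sent - received) / sent


# Invented latency samples in milliseconds.
isolated_ms = [12, 14, 13, 15, 12, 13, 14, 13, 12, 14]
dense_ms = [15, 18, 22, 140, 17, 19, 250, 21, 16, 20]

print(nearest_rank_percentile(isolated_ms, 50))  # 13
print(nearest_rank_percentile(dense_ms, 50))     # 19
print(nearest_rank_percentile(isolated_ms, 95))  # 15
print(nearest_rank_percentile(dense_ms, 95))     # 250
print(packet_loss_rate(sent=1000, received=968))
```

The median latency barely moves between the two scenarios, while the 95th percentile under load is far worse. That is the kind of gap an isolation-only test cannot reveal.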

Red Flag #5: The Report Hides Variability and Only Shows Averages

Average performance figures can conceal instability. A report that highlights only mean results without showing spread, variance, failure rates, or deviation across repeated tests may be masking inconsistency.

This matters because procurement risk often comes from outliers, not averages. A product with a strong average benchmark score but wide performance fluctuation can create service interruptions, increased maintenance, and unpredictable user experience.

Look for data such as:

- Minimum and maximum values

- Standard deviation or range

- Pass-fail consistency across test cycles

- Results across different operating loads

- Failure incidence and recovery behavior

In benchmarking data, consistency often matters as much as peak performance. A supplier that performs slightly below the top score but with stable, repeatable outcomes may be the better commercial choice.
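As a small illustration of why averages alone can mislead, the sketch below summarizes repeated test scores for two hypothetical suppliers using Python's standard `statistics` module. The scores are invented, and the coefficient of variation (standard deviation divided by mean) is only one possible way to express relative spread.

```python
import statistics


def spread_summary(values):
    """Summarize repeated test results: mean plus basic measures of spread."""
    if len(values) < 2:
        raise ValueError("need at least two results to measure spread")
    mean = statistics.mean(values)
    stdev = statistics.stdev(values)  # sample standard deviation
    return {
        "mean": mean,
        "min": min(values),
        "max": max(values),
        "stdev": stdev,
        "cv": stdev / mean,  # assumes a non-zero mean
    }


# Invented scores from six repeated test cycles per supplier.
supplier_a = [92, 60, 98, 97, 63, 100]  # higher average, unstable
supplier_b = [83, 84, 82, 85, 83, 81]   # slightly lower average, stable

summary_a = spread_summary(supplier_a)
summary_b = spread_summary(supplier_b)

print(summary_a["mean"], summary_a["min"], summary_a["max"])  # 85 60 100
print(summary_b["mean"], summary_b["min"], summary_b["max"])  # 83 81 85
print(summary_a["stdev"] > summary_b["stdev"])                # True
```

Supplier A wins on the average, but its worst cycle scores 60 against supplier B's 81. A report that prints only the two means hides exactly this difference.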

Red Flag #6: There Is No Independent Verification or Source Transparency

If the report is effectively self-certified marketing content, treat it carefully. Independence and traceability are critical when benchmark results influence capital investment or supplier qualification.

Ask whether the report identifies:

- The organization that conducted the testing

- Whether the lab or analyst is independent

- The origin of the raw data

- Any sponsorship or commercial relationship

- Whether the findings were reviewed or replicated

In supplier-driven benchmarking reports, selective disclosure is common. A vendor may publish only favorable sections, summarize data without raw tables, or omit failed test categories. That does not automatically invalidate the report, but it means buyers should request deeper documentation before relying on it.

For distributors and agents, source transparency is also important because your own credibility may be affected if you pass weak benchmark claims downstream to clients or project owners.

Red Flag #7: System Integration Claims Are Broad but Poorly Proven

In modern tourism infrastructure, performance is rarely standalone. Hardware, software, sensors, controls, energy systems, and property management platforms must work together. A benchmarking report that claims strong interoperability without demonstrating integration depth should raise concerns.

This is especially relevant when evaluating system integration services or smart hospitality ecosystems. A report may claim “seamless integration” but fail to explain:

- Which third-party systems were tested

- What communication protocols were used

- How data mapping and synchronization were validated

- What happened under error conditions or network disruption

- Whether integration required custom development

True benchmarking analysis for integrated systems should go beyond feature compatibility. It should show operational reliability across workflows, not just connection success on a specification sheet.

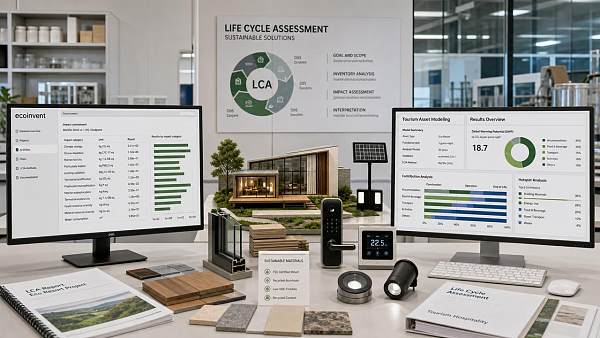

Red Flag #8: Sustainability Claims Are Included, but Evidence Is Thin

In tourism development, sustainability is no longer a branding layer. It affects approvals, investor confidence, procurement standards, and long-term operating economics. Yet many reports include environmental claims without sufficient benchmark evidence.

Be cautious if the report promotes carbon efficiency, eco-material advantages, or sustainable tourism development outcomes without measurable support. Credible sustainability benchmarking should include data such as:

- Embodied carbon or lifecycle carbon estimates

- Energy efficiency under actual use conditions

- Material origin and compliance documentation

- Waste reduction or recyclability metrics

- Water or utility consumption benchmarks

If sustainability language is prominent but evidence is secondary, the report may be designed to support sales positioning rather than procurement due diligence.

How to Check a Benchmarking Report Before You Trust It

For most buyers and evaluators, the best approach is not to reject reports automatically but to review them using a practical screening framework. Before treating a benchmarking report as decision support, confirm the following:

- Relevance: Do the tested metrics match your project goals, operating environment, and procurement priorities?

- Comparability: Were equivalent products or systems tested under the same conditions?

- Method clarity: Can you understand exactly how the benchmarking process was executed?

- Data depth: Are raw values, ranges, and limitations visible rather than only summary claims?

- Independence: Is the benchmark source transparent and commercially credible?

- Field realism: Do the tests reflect real usage conditions in tourism or hospitality operations?

- Decision utility: Does the analysis help you estimate risk, cost, performance, and integration outcome?

This type of review turns benchmarking from a marketing artifact into a practical business tool.
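One possible way to apply the framework consistently across several reports is a simple tally. This is a sketch only: the criterion keys are shorthand for the seven checks above, a yes/no rating is a simplifying assumption, and the example ratings are invented.

```python
# Shorthand keys for the seven screening checks listed above.
CRITERIA = (
    "relevance",
    "comparability",
    "method_clarity",
    "data_depth",
    "independence",
    "field_realism",
    "decision_utility",
)


def screen_report(ratings):
    """Count satisfied criteria and list those unmet or not yet assessed."""
    unknown = set(ratings) - set(CRITERIA)
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    unmet = [name for name in CRITERIA if not ratings.get(name, False)]
    return {"passed": len(CRITERIA) - len(unmet), "unmet": unmet}


# Invented reviewer ratings; field_realism has not been assessed yet.
ratings = {
    "relevance": True,
    "comparability": True,
    "method_clarity": False,
    "data_depth": False,
    "independence": True,
    "decision_utility": True,
}

result = screen_report(ratings)
print(result["passed"])  # 4
print(result["unmet"])   # ['method_clarity', 'data_depth', 'field_realism']
```

Treating an unassessed criterion as unmet is a conservative design choice. The tally is meant to structure follow-up questions, not to replace reviewer judgment.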

What a Strong Benchmarking Report Should Look Like

Once you know the red flags, it becomes easier to identify useful reports. A strong report does not try to impress with volume alone. It helps stakeholders make defensible decisions.

High-quality benchmarking reports usually have these characteristics:

- Clear scope and intended use case

- Transparent methodology and assumptions

- Fair product and system comparison logic

- Data that includes both strengths and limitations

- Operationally relevant performance indicators

- Evidence of repeatability and consistency

- Independent or verifiable testing sources

- Useful interpretation for procurement, integration, and lifecycle planning

For tourism infrastructure stakeholders, the best reports bridge engineering metrics and business decisions. They do not just say what performed best. They show what is most suitable for a specific deployment, risk profile, and operating model.

Conclusion

When reviewing benchmarking data, the biggest mistake is assuming technical language equals technical truth. The most important benchmarking report red flags usually appear in the structure of the report itself: unclear methodology, biased comparisons, unrealistic test conditions, hidden variability, weak source transparency, and unsupported system integration or sustainability claims.

For information researchers, procurement teams, business evaluators, and channel partners, the goal is not simply to find the highest score. It is to find evidence you can trust. A reliable benchmarking comparison should reduce uncertainty, improve supplier evaluation, and support smarter long-term decisions in tourism and hospitality projects.

If a report helps you understand real performance, real limits, and real deployment implications, it has value. If it only makes a product look good, keep asking questions.

Recommended News

![When enterprise software becomes too costly to maintain When enterprise software becomes too costly to maintain]() May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.

May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.![Sheet Metal Thickness Changes More Than Strength Sheet Metal Thickness Changes More Than Strength]() May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.

May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.![Why smart hotel bulk order pricing varies so much Why smart hotel bulk order pricing varies so much]() May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.

May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.![How to estimate smart hotel cost before budgeting How to estimate smart hotel cost before budgeting]() May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.

May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.![Why smart hotel price can vary more than expected Why smart hotel price can vary more than expected]() May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.

May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.![How to Use Ecoinvent Data Without Common LCA Errors How to Use Ecoinvent Data Without Common LCA Errors]() May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.

May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.![Why emerging markets still matter in 2026 growth planning Why emerging markets still matter in 2026 growth planning]() May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.

May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.![Tourism Development Risks to Watch Before New Site Investment Tourism Development Risks to Watch Before New Site Investment]() May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.

May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.![How AI for business helps teams make faster decisions How AI for business helps teams make faster decisions]() May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.

May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.![Is container shipping still the safest low cost option Is container shipping still the safest low cost option]() May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.

May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.![How to compare warehousing solutions for faster growth How to compare warehousing solutions for faster growth]() May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.

May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.![Why whiteboard markers fail faster than most teams expect Why whiteboard markers fail faster than most teams expect]() May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.

May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.![School equipment costs more when these basics get missed School equipment costs more when these basics get missed]() May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.

May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.![Back to school trends that may change buying plans Back to school trends that may change buying plans]() May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.

May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.![Office supplies costs rise when small choices go wrong Office supplies costs rise when small choices go wrong]() May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.

May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.![Agri-Supply Chain Delays That Hurt Freshness and Profit Agri-Supply Chain Delays That Hurt Freshness and Profit]() May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.

May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.![Are Refurbished Printers and Scanners Worth the Risk? Are Refurbished Printers and Scanners Worth the Risk?]() May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.

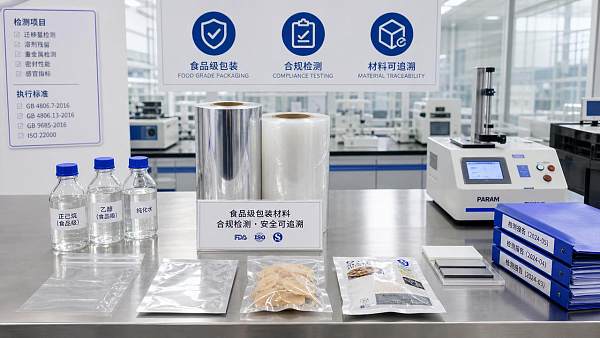

May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.![Why Food Grade Packaging Fails Compliance Checks Why Food Grade Packaging Fails Compliance Checks]() May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.

May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.![Bakery Equipment Buying Mistakes That Hurt Output Quality Bakery Equipment Buying Mistakes That Hurt Output Quality]() May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.

May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.![Why Agricultural Chemicals Fail Even When the Label Is Followed Why Agricultural Chemicals Fail Even When the Label Is Followed]() May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.

May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.![Industrial and Manufacturing Orders Are Recovering Unevenly in 2026 Industrial and Manufacturing Orders Are Recovering Unevenly in 2026]() May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.

May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.![Grain Processing Capacity Is Growing, but Where Are the Bottlenecks? Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?]() May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.

May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.![Cosmetic ingredients under review as safety standards tighten Cosmetic ingredients under review as safety standards tighten]() May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.

May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.![Industrial & Manufacturing trends reshaping supplier selection Industrial & Manufacturing trends reshaping supplier selection]() May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.

May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.

Quarterly Executive Summaries Delivered Directly.

Join 50,000+ industry leaders who receive our proprietary market analysis and policy outlooks before they hit the public library.