What Makes a Good Benchmarking Analysis?

Jun 09, 2026

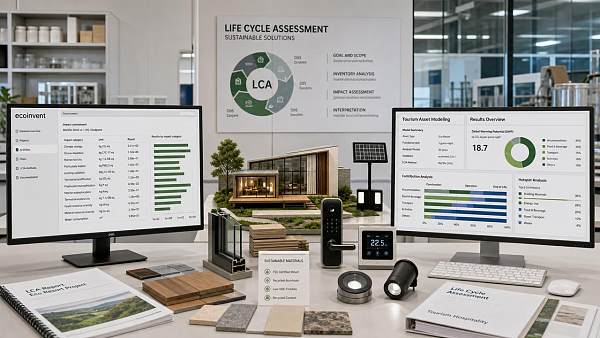

A good benchmarking analysis turns complex performance claims into clear, decision-ready evidence. With reliable benchmarking software and tools, buyers and evaluators can compare data on durability, efficiency, compliance, and system integration. In sustainable tourism development, a structured benchmarking process helps stakeholders produce a credible benchmarking report and identify practical solutions with confidence.

For tourism developers, hotel procurement teams, commercial evaluators, and distribution partners, this matters because supplier claims often look similar on paper. A prefab cabin may promise thermal efficiency, an IoT platform may advertise seamless integration, and amusement hardware may highlight long service life. Without benchmarking analysis, those claims remain marketing language rather than operational evidence.

In practice, a useful benchmarking framework should reduce decision risk in 4 areas: technical performance, lifecycle cost, regulatory alignment, and implementation compatibility. For organizations sourcing hospitality infrastructure across borders, especially when comparing multiple manufacturers or system providers, the quality of the benchmarking process often determines whether a project stays on budget, meets carbon targets, and performs reliably over 3 to 10 years.

Why Benchmarking Analysis Matters in Tourism and Hospitality Procurement

A good benchmarking analysis is not simply a side-by-side checklist. It is a structured method for testing whether products, systems, or infrastructure solutions can perform under real operating conditions. In tourism projects, that can include occupancy fluctuation, coastal humidity, mountain temperature swings, 24/7 network demand, and maintenance access constraints across remote sites.

This is especially important in modern tourism development, where hardware and digital systems are tightly connected. A glamping unit’s insulation performance affects guest comfort and HVAC load. A hotel IoT network affects room automation response times, energy monitoring accuracy, and guest service speed. A benchmarking report must therefore connect isolated metrics to operational outcomes, not just list technical numbers.

For procurement professionals, the biggest risk is buying based on aesthetic presentation rather than measurable suitability. Two suppliers may quote similar prices, yet one may require 20% more maintenance visits per year or show higher material fatigue after repeated loading cycles. A benchmarking comparison helps clarify these differences before contract signing, sample approval, or distributor onboarding.

A strong analysis also supports communication across teams. Engineers, operators, sustainability officers, and finance reviewers often evaluate the same project through different lenses. Benchmarking data creates a shared reference point by translating performance into thresholds, tolerances, and service implications that each stakeholder can understand.

Typical procurement questions benchmarking should answer

- Can the product maintain stable performance across a 5°C to 35°C operating range, or across wider climate variation where the site requires it?

- Will integration with existing PMS, BMS, or IoT gateways require additional middleware, labor hours, or software licenses?

- How do durability results compare over 12, 24, or 36 months of expected use?

- What is the likely maintenance interval: monthly, quarterly, or semiannual?

- Does the product meet carbon, material, and safety documentation requirements for cross-border project approval?

When these questions are answered in a consistent benchmarking process, the procurement team can move from subjective preference to defensible selection criteria. That is the practical difference between a decorative comparison sheet and a decision-grade benchmarking analysis.

Core Elements of a Good Benchmarking Analysis

The quality of a benchmarking analysis depends on how clearly it defines scope, metrics, and test conditions. If one supplier is evaluated under laboratory conditions and another under field simulation, the benchmarking data may not be comparable. A credible process must standardize the baseline first, then compare performance across the same functional requirements.

For tourism and hospitality infrastructure, the most valuable benchmarking tools usually assess 5 dimensions: structural durability, energy or thermal efficiency, system interoperability, compliance readiness, and serviceability. Depending on the product category, additional factors such as acoustic control, corrosion resistance, or network latency may also be relevant.

A good benchmarking report should also distinguish between claimed performance and verified performance. This seems obvious, but many purchasing teams still compare brochures instead of measured outcomes. Benchmarking software can help centralize raw data, revision history, and weighted scoring, but the software itself is only useful if the metric definitions are sound.

Below is a practical framework that buyers and evaluators can use when reviewing tourism hardware and smart hospitality systems.

| Evaluation Element | What to Measure | Why It Matters in Tourism Projects |
|---|---|---|
| Durability | Load cycles, fatigue resistance, moisture exposure, surface wear after repeated use | Reduces failure risk in high-traffic resorts, parks, and remote accommodation sites |
| Efficiency | Thermal transfer, energy draw, standby consumption, response time | Improves operating cost control and supports carbon-conscious destination planning |
| Integration | Protocol compatibility, API readiness, data throughput, system uptime | Prevents costly rework when connecting rooms, sensors, access control, and management systems |
| Compliance | Material documentation, emissions data, fire or electrical conformity records | Supports project approvals, import checks, and sustainability reporting |

The main takeaway is that a reliable benchmarking comparison should connect measurement categories to project risk. A product does not need to be “best” in every metric, but it must be suitable for the actual operating environment, installation sequence, and ownership model.

What strong methodology looks like

1. Clear test boundary

Define whether the comparison covers product-only performance, installed performance, or full system performance. These are not the same. For example, network throughput measured at device level may differ by 15% to 30% once gateways and cloud synchronization are added.

2. Repeatable scoring logic

Use weighted criteria, such as 30% durability, 25% efficiency, 20% integration, 15% compliance, and 10% service response. The exact ratio can vary, but the logic should be fixed before vendors are scored.

3. Transparent assumptions

If lifecycle cost is projected over 5 years, the report should state assumptions on maintenance frequency, replacement intervals, and average utilization. Hidden assumptions weaken the credibility of the benchmarking analysis.
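Steps 2 and 3 can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: it assumes each criterion has already been normalized to a 0 to 100 scale, reuses the example weights from the text, and treats the cost model as undiscounted. The names `WEIGHTS`, `weighted_score`, `LifecycleAssumptions`, and `lifecycle_cost` are hypothetical.

```python
from dataclasses import dataclass
from types import MappingProxyType

# Step 2: weights are fixed (and made read-only) before any vendor is scored.
# They mirror the example ratio in the text; a project would set its own.
WEIGHTS = MappingProxyType({
    "durability": 0.30,
    "efficiency": 0.25,
    "integration": 0.20,
    "compliance": 0.15,
    "service_response": 0.10,
})

def weighted_score(scores: dict) -> float:
    """Combine per-criterion scores (assumed already normalized to 0-100)."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(weight * scores[name] for name, weight in WEIGHTS.items())

# Step 3: every lifecycle-cost input is a named, visible assumption.
@dataclass(frozen=True)
class LifecycleAssumptions:
    years: int                       # projection window, e.g. 5
    visits_per_year: float           # maintenance frequency
    cost_per_visit: float            # labor and travel per visit
    replacement_interval_years: int  # major component replacement cycle
    replacement_cost: float
    annual_energy_kwh: float         # at the assumed average utilization
    price_per_kwh: float

def lifecycle_cost(capex: float, a: LifecycleAssumptions) -> float:
    """Undiscounted cost of ownership over the projection window."""
    maintenance = a.years * a.visits_per_year * a.cost_per_visit
    # Replacements falling due within the window (one due in the final year counts).
    replacements = (a.years // a.replacement_interval_years) * a.replacement_cost
    energy = a.years * a.annual_energy_kwh * a.price_per_kwh
    return capex + maintenance + replacements + energy

if __name__ == "__main__":
    # Hypothetical vendor scores and cost inputs, for illustration only.
    vendor_a = {"durability": 80, "efficiency": 70, "integration": 90,
                "compliance": 100, "service_response": 60}
    print("weighted score:", weighted_score(vendor_a))
    assumptions = LifecycleAssumptions(5, 4, 300.0, 3, 2_000.0, 6_000.0, 0.20)
    print("5-year cost:", lifecycle_cost(50_000.0, assumptions))
```

Freezing the weights with `MappingProxyType` is one simple way to make "fixed before vendors are scored" enforceable in code; keeping every cost input in a single dataclass makes the assumptions easy to print alongside the result.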

How to Evaluate Benchmarking Data, Tools, and Reporting Quality

Many organizations invest in benchmarking software but still struggle to reach usable conclusions. The issue is rarely the dashboard itself. More often, the problem is inconsistent data input, missing context, or overreliance on supplier-submitted metrics without field verification. Good benchmarking tools should support traceability, not replace judgment.

For buyers in the tourism supply chain, the first test is whether the benchmarking data is normalized. If one cabin supplier reports insulation under static indoor conditions and another reports results under outdoor wind exposure, the numbers are technically real but commercially misleading. The same issue appears in smart hotel devices when latency, bandwidth, and uptime are measured under different network loads.

A practical benchmarking report should therefore include source type, test window, condition notes, and tolerance range. A measured value without context can create false confidence. For example, a response time of 120 ms may be excellent for one control scenario but weak for another if the system must support synchronized room automation across hundreds of endpoints.
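One way to keep that context attached to every number is to store it in the same record as the value. The sketch below assumes a simple in-memory record; the field list (source type, test window, condition notes, tolerance) follows the paragraph above, while the class and function names are hypothetical. Treating the worst case within tolerance as the value to check is one conservative choice, not the only one.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Measurement:
    """One benchmark result plus the context it needs to be interpreted."""
    supplier: str
    metric: str
    value: float
    unit: str
    source_type: str   # e.g. "lab", "pilot", "field", "declared"
    test_window: str   # free text, e.g. "2026-03-01 to 2026-03-14"
    condition: str     # e.g. "300 endpoints, peak network load"
    tolerance: float   # +/- range, in the same unit as value

def comparable(a: Measurement, b: Measurement) -> bool:
    """Results are compared only when metric, unit and test condition all match."""
    return (a.metric, a.unit, a.condition) == (b.metric, b.unit, b.condition)

def within_limit(m: Measurement, max_allowed: float) -> bool:
    """Conservative check for lower-is-better metrics: the worst case inside
    the stated tolerance must still meet the limit."""
    return m.value + m.tolerance <= max_allowed
```

With this rule, a 120 ms result measured at 50 endpoints is simply not compared against a result measured at 300 endpoints, which mirrors the false-confidence problem described in the text.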

The table below shows what procurement and evaluation teams should look for when reviewing benchmarking documentation and digital tools.

| Review Area | Strong Benchmarking Practice | Warning Sign |
|---|---|---|
| Data source | Includes test origin, date, method, and sample condition | Only marketing brochure figures with no testing context |
| Comparability | Uses the same conditions, units, and scoring window across suppliers | Mixed units, inconsistent baselines, or no normalization |
| Decision support | Links scores to procurement thresholds, risk level, and next action | Provides raw numbers but no interpretation for sourcing decisions |
| Revision control | Tracks version changes over 2 to 3 review rounds | No update history or unclear supplier revisions |

The best benchmarking solutions do not overwhelm users with more data than they need. Instead, they organize evidence so that a sourcing manager can quickly identify what passes, what fails, and what requires follow-up testing. That is especially valuable when procurement cycles are compressed into 2 to 6 weeks.

Checklist for benchmarking report quality

- Confirm that each metric has a defined unit, acceptable threshold, and comparison baseline.

- Check whether results reflect lab testing, pilot installation, or live operational conditions.

- Verify that exclusions are stated clearly, such as software modules, transport conditions, or local installation variance.

- Review whether scoring weights reflect your project priorities, not just the supplier’s strengths.

- Require a concise risk note for every critical metric that falls outside the target range.
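Several of these checks are mechanical enough to automate. The sketch below covers three of the five items (unit, threshold and baseline present; evidence type stated; risk note for out-of-range critical metrics); reviewing exclusions and scoring weights stays a human task. `MetricEntry` and `review` are hypothetical names, not part of any particular benchmarking product.

```python
from dataclasses import dataclass
from typing import Optional

EVIDENCE_TYPES = {"lab", "pilot", "live"}

@dataclass
class MetricEntry:
    name: str
    value: float
    unit: Optional[str]
    threshold: Optional[float]
    baseline: Optional[str]      # what the value is compared against
    evidence: str                # "lab", "pilot" or "live"
    critical: bool = False
    higher_is_better: bool = True
    risk_note: str = ""

def review(entries: list) -> list:
    """Return checklist problems; an empty list means these checks pass."""
    problems = []
    for e in entries:
        if not e.unit or e.threshold is None or not e.baseline:
            problems.append(f"{e.name}: missing unit, threshold or baseline")
        if e.evidence not in EVIDENCE_TYPES:
            problems.append(f"{e.name}: evidence type not stated")
        if e.critical and e.threshold is not None and not e.risk_note:
            in_range = (e.value >= e.threshold) if e.higher_is_better else (e.value <= e.threshold)
            if not in_range:
                problems.append(f"{e.name}: critical metric out of range, no risk note")
    return problems
```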

This approach makes benchmarking comparison actionable. It turns a spreadsheet into an approval tool, a negotiation aid, and a technical filter for future vendor shortlisting.

Benchmarking Analysis in Real Tourism Infrastructure Scenarios

A good benchmarking analysis should be scenario-driven. Tourism infrastructure is too varied for one universal scorecard. The right metrics for eco-lodges in a humid coastal zone are not identical to those for mountain glamping pods, city business hotels, or amusement hardware exposed to repeated mechanical stress. The benchmarking process must match the use case.

For prefab hospitality units, thermal performance and moisture management are often priority metrics. If wall and roof assemblies cannot maintain stable indoor conditions, HVAC load rises, guest comfort drops, and maintenance complaints increase. In many projects, a difference of even 10% to 15% in thermal efficiency can materially affect annual energy planning.
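To see why a 10% to 15% difference matters for energy planning, a first-order estimate helps. The article does not name a calculation method; the sketch below assumes the common steady-state degree-day approximation (heat loss = U-value × area × heating degree-days × 24 h), and every input value is hypothetical.

```python
def annual_heat_loss_kwh(u_value: float, area_m2: float, heating_degree_days: float) -> float:
    """Simplified steady-state degree-day estimate of envelope heat loss.

    u_value: area-weighted envelope U-value in W/(m^2*K)
    area_m2: envelope area in m^2
    heating_degree_days: site heating degree-days in K*day
    Ignores infiltration, solar and internal gains, and thermal bridges.
    """
    watt_hours = u_value * area_m2 * heating_degree_days * 24  # 24 h per day
    return watt_hours / 1000  # Wh -> kWh

if __name__ == "__main__":
    # Hypothetical pod: 120 m^2 envelope at a site with 2,500 heating degree-days.
    baseline = annual_heat_loss_kwh(0.40, 120, 2500)
    improved = annual_heat_loss_kwh(0.35, 120, 2500)  # 12.5% lower U-value
    units = 40                                          # hypothetical site size
    saving = baseline - improved
    print(f"per unit: {saving:.0f} kWh/yr, site: {saving * units:.0f} kWh/yr")
```

In this simplified model, loss scales linearly with U-value, so the per-unit difference multiplies directly across a site; real HVAC load also depends on ventilation and internal gains, which a full benchmark would need to state.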

For smart hotel systems, the focus often shifts to interoperability and throughput. Door access, room controls, occupancy sensors, and management dashboards may all depend on smooth protocol communication. A benchmarking report in this category should test not just feature availability but also transmission reliability, delay behavior, and scaling performance as the deployment grows from 50 rooms to 300 or more.
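A scaling check can compare tail latency at the small and large deployment sizes. This sketch assumes latency samples were collected under the same network load at both sizes; the 25% limit on p95 growth is a hypothetical acceptance threshold, not a figure from the text. It uses Python's standard `statistics.quantiles`.

```python
from statistics import quantiles

MAX_P95_INCREASE = 0.25  # hypothetical acceptance limit, not from the text

def p95(samples_ms: list) -> float:
    """95th-percentile latency (statistics.quantiles, default 'exclusive' method)."""
    return quantiles(samples_ms, n=100)[94]

def scaling_degradation(small_site: list, large_site: list) -> float:
    """Relative change in p95 latency from the small to the large deployment."""
    base = p95(small_site)
    return (p95(large_site) - base) / base

def scales_acceptably(small_site: list, large_site: list) -> bool:
    """True if p95 latency grows by no more than the agreed limit."""
    return scaling_degradation(small_site, large_site) <= MAX_P95_INCREASE
```

Using a percentile rather than the average keeps occasional slow responses visible, which is usually what guests notice when room automation lags.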

For amusement or leisure hardware, mechanical repetition and material fatigue become more important. Visual appearance may remain acceptable while performance margins are shrinking. Benchmarking data helps evaluators distinguish between short-term showroom quality and long-term operating resilience.

Scenario-based metric priorities

| Tourism Scenario | Priority Metrics | Procurement Focus |
|---|---|---|
| Prefab glamping units | Thermal insulation, moisture resistance, structural tolerance, installation time | Seasonal comfort, transport efficiency, low rework rate, lifecycle maintenance |
| Smart hotel IoT systems | Data throughput, protocol compatibility, uptime stability, response delay | System integration, scaling readiness, support burden, future upgrades |
| Leisure and amusement hardware | Fatigue resistance, coating wear, replacement cycle, safety inspection intervals | Operating reliability, spare part planning, downtime avoidance |

The value of this comparison is that it keeps the benchmarking analysis relevant to actual project goals. A distributor may care about warranty risk and service burden. A procurement director may care more about 5-year ownership cost. A site operator may focus on maintenance intervals and guest disruption. The same benchmarking data can support all three decisions if organized correctly.

Common mistake: overvaluing headline specifications

One frequent mistake is choosing based on one standout metric, such as maximum throughput or nominal energy efficiency, while ignoring integration overhead or field durability. In tourism projects, balanced performance usually matters more than isolated peaks. A system that performs 8% lower on paper but integrates 30% faster may create better project outcomes overall.

Building a Reliable Benchmarking Process for Procurement and Commercial Evaluation

A dependable benchmarking process should begin before final quotation review. If benchmarking only starts after price negotiation, the team may already be anchored to the wrong suppliers. The better approach is to screen vendors in stages: document review, technical benchmark, pilot validation, and commercial alignment. This 4-step structure reduces wasted comparison work and keeps evaluation criteria consistent.
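The staged structure works like a series of gates: a vendor only reaches the more expensive stages after clearing the cheaper ones. Below is a minimal sketch assuming each vendor is a simple record of flags and scores; the field names and the cut-off score of 70 are hypothetical.

```python
from typing import Callable

# Hypothetical gate rules, evaluated in the order given in the text.
STAGES: list[tuple[str, Callable[[dict], bool]]] = [
    ("document review", lambda v: v.get("docs_complete", False)),
    ("technical benchmark", lambda v: v.get("benchmark_score", 0) >= 70),  # assumed cut-off
    ("pilot validation", lambda v: v.get("pilot_passed", False)),
    ("commercial alignment", lambda v: v.get("within_budget", False)),
]

def screen(vendors: dict) -> dict:
    """Run each vendor through the stages in order and record where it stops."""
    outcome = {}
    for name, record in vendors.items():
        outcome[name] = "passed all stages"
        for stage, gate in STAGES:
            if not gate(record):
                outcome[name] = f"stopped at {stage}"
                break  # later stages are never evaluated for this vendor
    return outcome
```

Because price alignment is the last gate, commercial terms are only compared for vendors that have already met the technical criteria, which is the anchoring problem the paragraph warns about.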

For most B2B tourism procurement projects, a practical timeline is 2 to 4 weeks for initial benchmarking and another 1 to 3 weeks for sample, pilot, or integration confirmation. Complex hospitality systems may need longer, particularly when third-party software, multilingual support, or regional compliance documentation must be reviewed.

It is also useful to assign score ownership clearly. Engineering should not be solely responsible for serviceability, and procurement should not be solely responsible for technical thresholds. Cross-functional benchmarking creates stronger purchasing decisions because each department validates a different part of the risk profile.

For organizations working with external labs or data-driven benchmarking partners such as TVM, the benefit is often greater comparability and cleaner evidence packaging. Independent review helps remove ambiguity from supplier messaging and gives architects, operators, and sourcing teams a neutral basis for approval.

Suggested 4-step benchmarking workflow

- Define project-critical metrics and minimum thresholds, such as durability class, energy range, or integration protocol requirements.

- Collect comparable supplier submissions with the same unit format, testing assumptions, and documentation checklist.

- Run benchmarking comparison and identify red-flag gaps that require pilot testing, engineering clarification, or contract safeguards.

- Convert the final benchmarking report into sourcing actions: shortlist, negotiate, request revisions, or reject.
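The final step can be written down as an explicit decision rule so that every vendor is treated the same way. The ordering below (critical failures first, then documentation gaps, then minor gaps) is one hypothetical policy; a team might instead route some critical gaps to pilot testing rather than rejection.

```python
from enum import Enum

class Action(Enum):
    SHORTLIST = "shortlist"
    NEGOTIATE = "negotiate"
    REQUEST_REVISIONS = "request revisions"
    REJECT = "reject"

def sourcing_action(critical_failures: int, minor_gaps: int, documentation_gaps: int) -> Action:
    """Hypothetical rule turning benchmark gap counts into one sourcing action."""
    if critical_failures > 0:
        return Action.REJECT
    if documentation_gaps > 0:
        return Action.REQUEST_REVISIONS
    if minor_gaps > 0:
        return Action.NEGOTIATE  # e.g. seek contract safeguards for the gaps
    return Action.SHORTLIST
```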

Risk controls that improve decision quality

- Set pass-fail thresholds before supplier names are attached to the scoring sheet.

- Separate measured results from vendor declarations in the reporting template.

- Record environmental conditions for every benchmark to avoid false comparisons.

- Review maintenance assumptions over at least a 3-year operating window where possible.

- Require escalation notes for any compliance gap, interface limitation, or replacement part uncertainty.
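The first two controls can be combined in a blind-scoring step: thresholds are defined first, supplier names are replaced by random codes, and only measured values are scored while vendor declarations are kept for reference. The threshold values and record layout below are hypothetical; `secrets.token_hex` is from Python's standard library.

```python
import secrets

# Pass-fail thresholds are fixed before any supplier identity is attached.
THRESHOLDS = {"uptime_pct": 99.5, "max_latency_ms": 200.0}  # hypothetical values

def blind(submissions: dict) -> tuple:
    """Swap supplier names for random codes; return (blinded data, code -> name key)."""
    blinded, key = {}, {}
    for name, record in submissions.items():
        code = "S-" + secrets.token_hex(3)
        while code in key:  # avoid the rare collision
            code = "S-" + secrets.token_hex(3)
        key[code] = name
        blinded[code] = record
    return blinded, key

def passes(record: dict) -> bool:
    """Score measured results only; vendor declarations are kept but not scored."""
    m = record["measured"]
    return (m["uptime_pct"] >= THRESHOLDS["uptime_pct"]
            and m["max_latency_ms"] <= THRESHOLDS["max_latency_ms"])
```

A usage pattern would be to score `{code: passes(rec) for code, rec in blinded.items()}` and only then map codes back to names with `key`.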

When done well, benchmarking solutions become more than procurement tools. They support distributor qualification, technical sales alignment, project planning, and even post-installation review. That broader value is why strong benchmarking analysis is becoming central to sustainable tourism development rather than a secondary technical exercise.

FAQ: Common Questions About Benchmarking Analysis

How do you know if benchmarking data is reliable enough for purchasing?

Start with comparability. Reliable benchmarking data should show test conditions, units, sample type, and time window. If a supplier cannot explain whether data came from lab simulation, field testing, or internal estimation, the result should be treated as directional rather than decision-grade. For larger orders or multi-site rollouts, a pilot or third-party review is often worth the extra 7 to 14 days.
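That screening rule can be expressed as a small classifier. This is a sketch under stated assumptions: the accepted source labels and required context fields are hypothetical encodings of the answer above, with internal estimates and incomplete records falling back to "directional".

```python
DECISION_GRADE_SOURCES = {"lab", "field", "third_party"}          # hypothetical labels
REQUIRED_CONTEXT = ("test_conditions", "unit", "sample_type", "time_window")

def evidence_grade(result: dict) -> str:
    """Label a supplier result 'decision-grade' or 'directional'."""
    has_context = all(result.get(field) for field in REQUIRED_CONTEXT)
    if result.get("source") in DECISION_GRADE_SOURCES and has_context:
        return "decision-grade"
    return "directional"
```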

Which metrics matter most in tourism infrastructure benchmarking?

The answer depends on the asset. Prefab accommodation usually prioritizes thermal efficiency, water resistance, and installation tolerance. Smart hotel systems prioritize compatibility, uptime, and response speed. Leisure hardware prioritizes fatigue resistance, inspection intervals, and serviceability. Most projects still benefit from comparing 4 core areas: durability, efficiency, compliance, and integration.

Can benchmarking software replace an independent technical review?

Not fully. Benchmarking software improves consistency, scoring visibility, and documentation control, but it cannot correct poor assumptions or missing field context. The strongest results come from combining software-based benchmarking tools with clear methodology and, where needed, independent validation.

What is a realistic deliverable from a good benchmarking report?

A useful report should provide more than a ranking. It should identify the tested scope, highlight threshold gaps, estimate operational implications, and recommend next actions. In practical terms, a single document should give the team enough evidence to decide whether to approve, reject, retest, or negotiate.

A good benchmarking analysis gives procurement teams, evaluators, and channel partners a disciplined way to compare real performance instead of relying on surface-level claims. In tourism and hospitality projects, where infrastructure must balance durability, efficiency, carbon alignment, and system integration, the strength of the benchmarking process directly affects project risk, lifecycle cost, and delivery confidence.

TerraVista Metrics supports this need by translating complex engineering evidence into structured benchmarking comparison, usable benchmarking reports, and practical benchmarking solutions for global tourism development. If you are evaluating prefab hospitality units, smart hotel systems, or tourism hardware for sourcing, distribution, or project qualification, now is the right time to build a more rigorous evidence base.

Contact us to discuss your benchmarking requirements, request a tailored evaluation framework, or explore decision-ready metrics for your next tourism infrastructure project.

Recommended News

![When enterprise software becomes too costly to maintain When enterprise software becomes too costly to maintain]() May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.

May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.![Sheet Metal Thickness Changes More Than Strength Sheet Metal Thickness Changes More Than Strength]() May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.

May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.![Why smart hotel bulk order pricing varies so much Why smart hotel bulk order pricing varies so much]() May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.

May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.![How to estimate smart hotel cost before budgeting How to estimate smart hotel cost before budgeting]() May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.

May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.![Why smart hotel price can vary more than expected Why smart hotel price can vary more than expected]() May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.

May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.![How to Use Ecoinvent Data Without Common LCA Errors How to Use Ecoinvent Data Without Common LCA Errors]() May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.

May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.![Why emerging markets still matter in 2026 growth planning Why emerging markets still matter in 2026 growth planning]() May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.

May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.![Tourism Development Risks to Watch Before New Site Investment Tourism Development Risks to Watch Before New Site Investment]() May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.

May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.![How AI for business helps teams make faster decisions How AI for business helps teams make faster decisions]() May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.

May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.![Is container shipping still the safest low cost option Is container shipping still the safest low cost option]() May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.

May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.![How to compare warehousing solutions for faster growth How to compare warehousing solutions for faster growth]() May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.

May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.![Why whiteboard markers fail faster than most teams expect Why whiteboard markers fail faster than most teams expect]() May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.

May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.![School equipment costs more when these basics get missed School equipment costs more when these basics get missed]() May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.

May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.![Back to school trends that may change buying plans Back to school trends that may change buying plans]() May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.

May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.![Office supplies costs rise when small choices go wrong Office supplies costs rise when small choices go wrong]() May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.

May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.![Agri-Supply Chain Delays That Hurt Freshness and Profit Agri-Supply Chain Delays That Hurt Freshness and Profit]() May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.

May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.![Are Refurbished Printers and Scanners Worth the Risk? Are Refurbished Printers and Scanners Worth the Risk?]() May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.

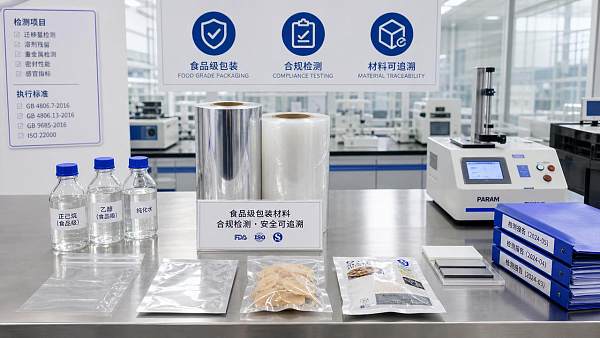

May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.![Why Food Grade Packaging Fails Compliance Checks Why Food Grade Packaging Fails Compliance Checks]() May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.

May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.![Bakery Equipment Buying Mistakes That Hurt Output Quality Bakery Equipment Buying Mistakes That Hurt Output Quality]() May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.

May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.![Why Agricultural Chemicals Fail Even When the Label Is Followed Why Agricultural Chemicals Fail Even When the Label Is Followed]() May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.

May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.![Industrial and Manufacturing Orders Are Recovering Unevenly in 2026 Industrial and Manufacturing Orders Are Recovering Unevenly in 2026]() May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.

May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.![Grain Processing Capacity Is Growing, but Where Are the Bottlenecks? Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?]() May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.

May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.![Cosmetic ingredients under review as safety standards tighten Cosmetic ingredients under review as safety standards tighten]() May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.

May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.![Industrial & Manufacturing trends reshaping supplier selection Industrial & Manufacturing trends reshaping supplier selection]() May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.

May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.

Quarterly Executive Summaries Delivered Directly.

Join 50,000+ industry leaders who receive our proprietary market analysis and policy outlooks before they hit the public library.