
How Reliable Is Your Benchmarking Data?

TerraVista Metrics (TVM) | Industry News | Jun 09, 2026

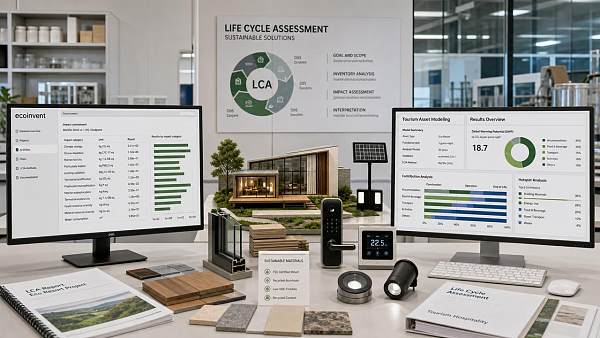

Reliable benchmarking data is the foundation of confident procurement and strategic planning. In tourism infrastructure and hospitality technology, benchmarking software, tools, and analysis help buyers validate performance, compare suppliers, and reduce integration risk. This article explains how to assess the quality of benchmarking data, strengthen the benchmarking process, and turn each benchmarking report into actionable insight for sustainable tourism development.

For researchers, procurement managers, commercial evaluators, and channel partners, the challenge is rarely a lack of claims. The market is full of brochures, dashboards, and supplier promises. The real question is whether the underlying benchmarking data is consistent, testable, and relevant to real operating conditions across tourism projects such as glamping sites, resorts, amusement installations, and smart hotel environments.

In a sector where thermal efficiency, IoT stability, material fatigue, installation compatibility, and carbon compliance all affect lifecycle cost, poor benchmarking can distort an entire sourcing decision. Reliable data does more than rank options. It clarifies technical trade-offs, shortens supplier screening cycles, and supports better investment timing for infrastructure that may need to perform for 5, 10, or even 15 years.

Why Benchmarking Data Matters in Tourism Infrastructure Procurement

Tourism infrastructure sits at the intersection of construction, hospitality operations, energy management, and guest experience. A prefabricated cabin is not just a structure. It is a thermal envelope, a maintenance asset, a utility load, and a brand promise. Likewise, a hotel IoT network is not simply a software layer. It must maintain uptime, support hundreds of connected nodes, and integrate with access control, HVAC, and property management systems.

This is why benchmarking analysis must move beyond generic product comparison. A buyer evaluating three cabin suppliers may find that all claim insulation performance, but only one provides test conditions, sample thickness, climate assumptions, and error tolerance. The gap between a thermal transmittance (U-value) measured under controlled conditions and an unqualified marketing claim can translate into 12% to 25% higher annual energy demand in a harsh climate zone.
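To see how a gap in thermal transmittance (the U-value, in W/m²·K) carries through to energy demand, a common first-order estimate multiplies U by envelope area and heating degree-days. The sketch below uses that degree-day method under stated assumptions: the envelope area, the degree-day count, and both U-values are hypothetical inputs chosen for illustration, not data from any supplier, and the model ignores ventilation, solar gains, and thermal bridges.

```python
def annual_transmission_loss_kwh(u_value: float, area_m2: float, heating_degree_days: float) -> float:
    """Degree-day estimate of heat lost through the envelope in one heating season.

    Q [kWh] = U [W/(m²·K)] * A [m²] * HDD [K·day] * 24 [h/day] / 1000 [Wh per kWh]
    """
    return u_value * area_m2 * heating_degree_days * 24 / 1000


# Hypothetical cabin: 80 m² of envelope at a cold site with 4,000 heating degree-days.
AREA_M2 = 80.0
HDD = 4000.0

claimed = annual_transmission_loss_kwh(0.30, AREA_M2, HDD)   # unqualified brochure figure
measured = annual_transmission_loss_kwh(0.36, AREA_M2, HDD)  # figure from a controlled test

increase_pct = (measured - claimed) / claimed * 100
print(f"claimed {claimed:.0f} kWh, measured {measured:.0f} kWh, +{increase_pct:.0f}%")
```

Because this estimate is linear in U, the percentage change in heat loss equals the percentage change in U-value (here 0.36 / 0.30 = 1.2, so +20%). Actual demand also depends on heating-system efficiency and occupancy, which the sketch leaves out.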

The same principle applies to smart hospitality systems. If a supplier reports data throughput without indicating packet loss, peak occupancy load, or concurrent device count, the result can be misleading. A network that performs well at 50 devices may degrade sharply at 300 devices. For procurement teams, the cost of discovering this after deployment is much higher than identifying it during vendor comparison.

Where unreliable data causes the most damage

The largest risks usually appear in four stages: early screening, technical approval, installation planning, and post-handover operation. If the original benchmarking report is weak, each downstream decision inherits that weakness. Commercial teams may overestimate service life, engineering teams may underestimate retrofit complexity, and operators may face maintenance cycles twice as frequent as expected.

For distributors and agents, unreliable benchmarking creates an additional commercial risk. A product line that looks competitive on paper may trigger warranty pressure or reputation loss in export markets if benchmark parameters cannot be replicated under local conditions. In cross-border tourism projects, even a 2 mm assembly tolerance issue or a 5 dB acoustic deviation can become a contract dispute.

Core outcomes of reliable benchmarking

- Faster supplier filtering within the first 7 to 14 days of evaluation.

- Lower integration risk across cabins, utilities, control systems, and guest-facing technology.

- More accurate lifecycle cost forecasting over 3-year, 5-year, and 10-year planning windows.

- Stronger negotiation leverage when benchmark thresholds are tied to acceptance criteria.

For organizations using a data-first approach, benchmarking software and benchmarking tools are most useful when paired with engineering interpretation. The value is not the spreadsheet alone. The value is the ability to determine whether data quality is sufficient for a real procurement decision.
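Lifecycle cost forecasting over 3-, 5-, and 10-year windows can be sketched as upfront cost plus cumulative operating and maintenance cost. This is a deliberately simplified, undiscounted model; the supplier names and every cost figure are hypothetical, and a real forecast would add discounting, price escalation, and replacement events. It illustrates how the option that is cheaper upfront can lose its lead as the planning window lengthens.

```python
from dataclasses import dataclass


@dataclass
class SupplierCost:
    name: str
    upfront: float             # purchase and installation
    annual_energy: float       # yearly energy cost
    annual_maintenance: float  # yearly maintenance cost


def lifecycle_cost(s: SupplierCost, years: int) -> float:
    """Undiscounted total cost of ownership over a planning window."""
    return s.upfront + years * (s.annual_energy + s.annual_maintenance)


# Hypothetical figures in a single currency.
supplier_a = SupplierCost("Supplier A", upfront=40_000, annual_energy=3_000, annual_maintenance=1_500)
supplier_b = SupplierCost("Supplier B", upfront=46_000, annual_energy=2_200, annual_maintenance=1_000)

for window in (3, 5, 10):
    cost_a = lifecycle_cost(supplier_a, window)
    cost_b = lifecycle_cost(supplier_b, window)
    cheaper = supplier_a.name if cost_a < cost_b else supplier_b.name
    print(f"{window:>2}-year window: A {cost_a:,.0f}, B {cost_b:,.0f}, lower cost: {cheaper}")
```

With these inputs Supplier A is cheaper over 3 years (53,500 vs 55,600), while Supplier B is cheaper over 5 years (62,000 vs 62,500) and 10 years (78,000 vs 85,000), which is why the planning window belongs in the benchmark brief.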

How to Judge the Quality of a Benchmarking Report

A strong benchmarking report should answer five practical questions: what was tested, under which conditions, against what baseline, with what variance, and for which application scenario. If any of these are missing, the benchmarking process may still be useful for exploration, but it is not robust enough for final supplier approval or large-scale deployment planning.

For example, material fatigue data for amusement or leisure hardware must indicate test cycle count, load pattern, environmental exposure, and pass-fail definition. A result after 20,000 cycles says something very different from a result after 100,000 cycles. Similarly, environmental durability data without humidity, UV exposure, or salt-spray context is often too broad to support destination-level investment decisions.

Benchmarking tools should also reveal whether the supplier used representative samples. One polished prototype is not the same as batch-level consistency. Procurement and commercial evaluation teams should ask whether the report reflects 1 unit, 3 units, or a statistically meaningful production sample. In many B2B contexts, batch variation of 3% to 8% can materially affect installation performance and maintenance planning.
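One simple way to express batch variation is the coefficient of variation: the sample standard deviation divided by the mean, across units drawn from one production run. The sketch below uses Python's standard `statistics` module; the measurements are hypothetical, and the 5% review threshold is an illustrative assumption a buyer would set per project, not a figure from any standard. Note that a single unit cannot reveal any variation at all.

```python
import statistics


def batch_variation_pct(measurements: list[float]) -> float:
    """Coefficient of variation, in percent, across units from one batch."""
    if len(measurements) < 2:
        raise ValueError("need at least two units to estimate batch variation")
    return statistics.stdev(measurements) / statistics.mean(measurements) * 100


# Hypothetical acoustic attenuation results (dB) for five units from one production batch.
units_db = [32.0, 30.5, 33.0, 31.0, 33.5]

cv = batch_variation_pct(units_db)
REVIEW_THRESHOLD_PCT = 5.0  # illustrative, project-specific limit
print(f"batch variation: {cv:.1f}%", "-> review" if cv > REVIEW_THRESHOLD_PCT else "-> ok")
```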

A practical checklist for data reliability

The table below can help buyers assess whether a benchmarking report is suitable for tourism and hospitality sourcing decisions, especially when comparing infrastructure hardware and integrated systems from multiple suppliers.

| Evaluation Factor | What to Look For | Risk If Missing |
|---|---|---|
| Test Conditions | Temperature, humidity, load, network concurrency, or operating hours clearly stated | Data cannot be compared across vendors or deployment climates |
| Sample Basis | Prototype, pilot batch, or mass production sample disclosed | Performance may not reflect actual delivered goods |
| Variance Range | Tolerance, deviation, or confidence interval included | Small differences may be statistically meaningless |
| Application Relevance | Scenario linked to hotels, resorts, glamping, leisure parks, or destination infrastructure | Strong lab data may fail in real operating environments |

The key conclusion is simple: benchmarking data is only reliable when context travels with the number. A benchmark value without a method statement is a weak decision input. A benchmark value tied to operating assumptions, sample scope, and variance is far more useful for procurement, resale, and technical approval.
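The four evaluation factors in the checklist can be encoded as required fields on each benchmark value, so that a number arriving without its context is flagged before it reaches a comparison. This is a minimal sketch; the `BenchmarkValue` structure and its field names are hypothetical and do not correspond to any particular benchmarking software.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class BenchmarkValue:
    metric: str
    value: float
    unit: str
    test_conditions: dict[str, str] = field(default_factory=dict)  # e.g. temperature, load, duration
    sample_basis: Optional[str] = None    # "prototype", "pilot batch", or "mass production"
    variance_pct: Optional[float] = None  # stated tolerance or deviation, in percent
    application: Optional[str] = None     # deployment scenario the test represents


def missing_context(b: BenchmarkValue) -> list[str]:
    """Return the checklist factors that this benchmark value lacks."""
    gaps = []
    if not b.test_conditions:
        gaps.append("Test Conditions")
    if b.sample_basis is None:
        gaps.append("Sample Basis")
    if b.variance_pct is None:
        gaps.append("Variance Range")
    if b.application is None:
        gaps.append("Application Relevance")
    return gaps


brochure_claim = BenchmarkValue("U-value", 0.30, "W/m²K")
lab_result = BenchmarkValue(
    "U-value", 0.34, "W/m²K",
    test_conditions={"method": "controlled chamber", "mean temperature": "10 °C"},
    sample_basis="pilot batch",
    variance_pct=4.0,
    application="glamping cabin, cold climate",
)

print(missing_context(brochure_claim))  # all four factors missing
print(missing_context(lab_result))      # []
```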

Questions buyers should ask before approval

- Was the benchmark captured under conditions similar to our destination, occupancy level, or utility profile?

- Does the report include a baseline for comparison, such as an industry norm or previous-generation system?

- Are acceptance thresholds clear enough to use in a purchase agreement or site acceptance test?

- Can the supplier repeat the benchmark within a defined error range such as ±3% or ±5%?

These questions help separate analytical discipline from promotional presentation. For serious buyers, that distinction often determines whether a project stays on budget during the first 6 to 12 months of operation.
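The repeatability question can be made testable: ask the supplier to repeat the benchmark several times, then check every repeat against the reported value. The sketch below assumes a relative tolerance band (for example ±3% or ±5%) around the reported figure; the response-time values are hypothetical.

```python
def within_tolerance(reported: float, repeats: list[float], tolerance_pct: float) -> bool:
    """True if every repeated measurement lies within ±tolerance_pct of the reported value."""
    if reported == 0:
        raise ValueError("relative tolerance is undefined for a reported value of zero")
    return all(abs(r - reported) / abs(reported) * 100 <= tolerance_pct for r in repeats)


reported_response_s = 0.50              # room-control response time from a hypothetical report
repeat_runs = [0.51, 0.49, 0.52, 0.515]  # hypothetical repeat measurements

print(within_tolerance(reported_response_s, repeat_runs, 3.0))
print(within_tolerance(reported_response_s, repeat_runs, 5.0))
```

Here the worst repeat (0.52 s) deviates by 4%, so the report passes a ±5% band but not a ±3% band; the band chosen should match what the purchase agreement will enforce.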

Key Metrics That Matter Across Tourism Hardware and Hospitality Technology

Benchmarking analysis must reflect the type of asset under review. In tourism projects, not every metric deserves equal weight. Procurement teams should concentrate on the indicators that affect safety, operating efficiency, guest comfort, and integration compatibility. A decorative material benchmark may be useful, but structural load, thermal retention, network uptime, and maintenance intervals usually matter more to long-term performance.

For prefabricated tourism units such as modular cabins, relevant benchmarks often include thermal transfer, wind resistance assumptions, acoustic insulation, moisture response, and installation tolerance. Typical review windows include seasonal performance over 30 to 90 days and projected service life assumptions over 8 to 15 years. If the benchmark report only captures a short static test, it may not support investment decisions in variable outdoor conditions.

For smart hotel systems, the most important benchmarks usually include network latency, packet loss, response time for room controls, integration compatibility, and uptime under concurrent loads. A room automation trigger that responds in 0.5 seconds at 20 devices may respond in 2.5 seconds at 250 devices. That difference shapes guest experience and service desk workload, especially in mid-size and large properties.
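Because response time shifts with device count, a benchmark is more useful when latency is summarized per load level instead of as one figure. The sketch below computes the median and 95th-percentile response time for each concurrency level with `statistics.quantiles`; the samples are hypothetical, and the 1.0-second target is an illustrative acceptance threshold, not an industry standard.

```python
import statistics

# Hypothetical room-control response times (seconds), grouped by concurrent device count.
samples_by_load = {
    20: [0.42, 0.45, 0.48, 0.50, 0.47, 0.52, 0.44, 0.49, 0.51, 0.46],
    250: [1.9, 2.3, 2.6, 2.4, 2.8, 2.2, 2.5, 3.1, 2.0, 2.7],
}
TARGET_P95_S = 1.0  # illustrative acceptance threshold


def latency_summary(samples: list[float]) -> tuple[float, float]:
    """Return (median, 95th percentile) of the response times."""
    # n=20 yields 19 cut points; the last one is the 95th percentile.
    # The "inclusive" method interpolates within the observed range, which suits small samples.
    p95 = statistics.quantiles(samples, n=20, method="inclusive")[-1]
    return statistics.median(samples), p95


for devices, samples in samples_by_load.items():
    median, p95 = latency_summary(samples)
    verdict = "pass" if p95 <= TARGET_P95_S else "fail"
    print(f"{devices:>3} devices: median {median:.2f} s, p95 {p95:.2f} s -> {verdict}")
```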

Recommended benchmark focus by category

The following table summarizes which metrics typically deserve priority during supplier comparison. The exact threshold will vary by project, but the categories help create a consistent benchmarking process across multiple procurement packages.

| Asset Category | Priority Benchmarks | Why It Matters |
|---|---|---|
| Prefab Glamping Units | Thermal efficiency, water resistance, assembly tolerance, acoustic attenuation | Affects energy use, comfort, installation speed, and weather resilience |
| Hotel IoT Networks | Throughput, latency, uptime, node capacity, interoperability | Determines control stability, guest experience, and future expansion |
| Leisure and Amusement Hardware | Material fatigue, corrosion response, load-bearing behavior, maintenance cycle | Influences safety planning, replacement timing, and operating continuity |
| Sustainable Utility Systems | Energy draw, recovery rate, standby consumption, carbon-related reporting consistency | Supports compliance goals and long-term operating cost control |

This comparison shows that a useful benchmarking report is not universal by default. It becomes useful when the chosen metrics align with the asset's functional role. That is why tourism developers often benefit from a structural filter approach: remove aesthetic noise first, then compare only the benchmarks that affect performance, compliance, and integration.
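The structural filter approach can be expressed as a lookup from asset category to priority benchmarks, applied before any comparison. In this sketch the category keys and metric names follow the priority-benchmark table; the supplier data sheet, including its decorative entries, is hypothetical.

```python
# Priority benchmarks per asset category, following the comparison table.
PRIORITY_BENCHMARKS = {
    "Prefab Glamping Units": {"thermal efficiency", "water resistance", "assembly tolerance", "acoustic attenuation"},
    "Hotel IoT Networks": {"throughput", "latency", "uptime", "node capacity", "interoperability"},
    "Leisure and Amusement Hardware": {"material fatigue", "corrosion response", "load-bearing behavior", "maintenance cycle"},
    "Sustainable Utility Systems": {"energy draw", "recovery rate", "standby consumption", "carbon-related reporting consistency"},
}


def structural_filter(category: str, datasheet: dict[str, str]) -> tuple[dict[str, str], set[str]]:
    """Keep only priority metrics, and report which priority metrics the data sheet omits."""
    priority = PRIORITY_BENCHMARKS[category]
    kept = {metric: value for metric, value in datasheet.items() if metric in priority}
    missing = priority - set(kept)
    return kept, missing


# Hypothetical supplier data sheet mixing performance data with aesthetic claims.
datasheet = {
    "thermal efficiency": "U = 0.32 W/m²K",
    "assembly tolerance": "±2 mm",
    "finish colour options": "12",
    "premium look": "yes",
}

kept, missing = structural_filter("Prefab Glamping Units", datasheet)
print(kept)             # only the two performance metrics survive
print(sorted(missing))  # priority metrics the supplier still needs to provide
```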

Common metric mistakes to avoid

- Using a single benchmark value without stating the test duration, such as 24 hours versus 90 days.

- Comparing lab data from one supplier with field data from another supplier.

- Ignoring interface compatibility when systems must connect with PMS, HVAC, BMS, or access control platforms.

- Overweighting upfront efficiency while underweighting maintenance frequency, spare part availability, or retraining time.

In practice, the best benchmarking tools are those that support side-by-side interpretation. They help teams identify not only which option performs better, but whether the benchmark itself is decision-ready.
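The first two mistakes in the list can be caught mechanically by refusing to rank results whose test duration or data source differ. This is a minimal sketch; the `Result` record and its fields are hypothetical, and a real process might normalize instead of rejecting where a validated conversion exists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    supplier: str
    metric: str
    value: float
    duration_h: float  # test duration in hours
    source: str        # "lab" or "field"


def comparability_issues(a: Result, b: Result) -> list[str]:
    """List reasons two results should not be ranked side by side."""
    issues = []
    if a.metric != b.metric:
        issues.append("different metrics")
    if a.duration_h != b.duration_h:
        issues.append(f"test duration {a.duration_h:g} h vs {b.duration_h:g} h")
    if a.source != b.source:
        issues.append(f"{a.source} data vs {b.source} data")
    return issues


x = Result("Supplier A", "uptime %", 99.9, duration_h=24, source="lab")
y = Result("Supplier B", "uptime %", 99.4, duration_h=24 * 90, source="field")
print(comparability_issues(x, y))
```

An empty list means the pair is at least structurally comparable; it does not prove the underlying tests were equivalent.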

Building a Stronger Benchmarking Process From Vendor Screening to Site Acceptance

A reliable benchmarking process should be designed as a workflow rather than a one-time report request. In many tourism and hospitality projects, the procurement cycle involves multiple teams: design consultants, technical reviewers, operations managers, finance staff, and channel partners. If benchmarking criteria are introduced too late, decisions may already be anchored by price or visual presentation instead of measurable suitability.

A stronger process begins with defining 4 to 6 non-negotiable performance indicators before vendor outreach. These might include thermal performance range, allowable installation deviation, target uptime, interface compatibility requirements, and expected maintenance interval. Once these thresholds are documented, benchmarking software becomes more effective because it is measuring against a procurement logic, not just collecting scattered numbers.
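Documented thresholds can be held as simple lower and upper bounds and checked against each vendor's reported values. The sketch assumes five hypothetical indicators and limits that a project team would replace with its own; a missing value is treated as a failure, so that silence in a report is not mistaken for compliance.

```python
from typing import Optional

# Hypothetical non-negotiable indicators: (lower bound, upper bound); None means unbounded.
THRESHOLDS: dict[str, tuple[Optional[float], Optional[float]]] = {
    "installation deviation (mm)": (None, 2.0),
    "target uptime (%)": (99.5, None),
    "maintenance interval (months)": (6, None),
    "acoustic attenuation (dB)": (30, None),
    "U-value (W/m²K)": (None, 0.35),
}


def failed_indicators(reported: dict[str, float]) -> list[str]:
    """Return indicators that are missing or outside their bounds."""
    failures = []
    for name, (low, high) in THRESHOLDS.items():
        value = reported.get(name)
        if value is None:
            failures.append(f"{name}: not reported")
        elif low is not None and value < low:
            failures.append(f"{name}: {value} below {low}")
        elif high is not None and value > high:
            failures.append(f"{name}: {value} above {high}")
    return failures


vendor = {
    "installation deviation (mm)": 1.5,
    "target uptime (%)": 99.2,
    "maintenance interval (months)": 12,
    "U-value (W/m²K)": 0.31,
}
print(failed_indicators(vendor))
```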

The next step is to align testing with project stage. During early screening, broad benchmarks are useful for eliminating weak options quickly. During technical due diligence, more detailed benchmarking analysis should test repeatability, environmental fit, and handover implications. During site acceptance, the focus should shift to field verification and tolerance confirmation. Each stage asks a different question, so each stage needs a different level of detail.

A five-step benchmarking workflow

- Define use case and thresholds: establish 4 to 6 critical metrics tied to guest comfort, system stability, or maintenance exposure.

- Screen vendors: request baseline benchmarking reports with a response window of 7 to 10 business days, and reject submissions that lack methods or sample scope.

- Validate comparability: normalize units, climate assumptions, load profiles, and test durations before comparing results.

- Run field or pilot verification: confirm whether lab benchmarks remain within an acceptable variance such as ±5% under site-like conditions.

- Embed into contract and handover: translate the final benchmark values into acceptance clauses, maintenance triggers, and spare-part planning.

This workflow helps procurement managers avoid a common mistake: selecting based on a benchmarking report that cannot later support commissioning or warranty discussions. A number is useful only when it can travel from analysis to implementation. That is why independent benchmarking laboratories and neutral technical reviewers add value. They reduce interpretation bias and create a common language for suppliers and buyers.
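The comparability and pilot-verification steps of the workflow can be sketched together: convert each value to a common base unit, then check that the pilot measurement stays within the agreed variance of the lab benchmark. The unit table below is a hypothetical subset for illustration, and the energy-draw figures are invented; the dimension check stops a length from being compared with a power reading.

```python
# Unit -> (physical dimension, factor to base unit). Hypothetical subset for illustration.
TO_BASE = {
    "mm": ("length", 1.0), "m": ("length", 1000.0),
    "W": ("power", 1.0), "kW": ("power", 1000.0),
}


def normalize(value: float, unit: str) -> tuple[str, float]:
    """Convert a value to its base unit (mm or W in this sketch)."""
    dimension, factor = TO_BASE[unit]
    return dimension, value * factor


def pilot_within_variance(lab_value: float, lab_unit: str,
                          pilot_value: float, pilot_unit: str,
                          variance_pct: float = 5.0) -> bool:
    """True if the pilot result stays within ±variance_pct of the lab benchmark."""
    lab_dim, lab_base = normalize(lab_value, lab_unit)
    pilot_dim, pilot_base = normalize(pilot_value, pilot_unit)
    if lab_dim != pilot_dim:
        raise ValueError(f"cannot compare {lab_dim} with {pilot_dim}")
    return abs(pilot_base - lab_base) <= abs(lab_base) * variance_pct / 100


# Hypothetical utility system: lab energy draw 1.2 kW, pilot readings logged in watts.
print(pilot_within_variance(1.2, "kW", 1250, "W"))  # about 4.2% deviation
print(pilot_within_variance(1.2, "kW", 1300, "W"))  # about 8.3% deviation
```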

Typical timelines and deliverables

In a mid-scale project, initial benchmark screening may take 1 to 2 weeks, detailed technical review another 2 to 4 weeks, and pilot validation 2 to 6 weeks depending on product type. For infrastructure components with longer fabrication cycles, integrating benchmarking checkpoints early can prevent delays of 30 days or more during final approval. That is particularly important for international buyers coordinating logistics, compliance review, and installation sequencing.
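Assuming the three stages run back to back with no overlap (in practice some review work may run in parallel), the stage ranges add up to a total evaluation window of roughly 5 to 12 weeks:

$$
T_{\min} = 1 + 2 + 2 = 5 \text{ weeks}, \qquad T_{\max} = 2 + 4 + 6 = 12 \text{ weeks}
$$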

For organizations working with TerraVista Metrics, the value of the process lies in converting manufacturing data into structured whitepapers that support decision clarity. When engineering metrics are standardized, commercial teams can evaluate options on substance rather than supplier storytelling.

Common Benchmarking Mistakes and How Buyers Can Reduce Risk

Even experienced buyers can misread benchmarking data if the report looks technical enough. One of the most common mistakes is treating formatting as proof of rigor. A polished PDF with charts and dashboards may still omit load conditions, baseline assumptions, or field applicability. In tourism procurement, where systems must survive variable occupancy, outdoor exposure, and mixed-user behavior, context is more important than visual presentation.

Another common issue is over-comparing isolated metrics. A glamping structure with stronger thermal performance may also require longer installation time or more specialized maintenance. A hotel control platform with faster response speed may have lower interoperability or higher retraining cost. Benchmarking analysis becomes reliable only when it reflects the trade-off profile, not just the single strongest number in a supplier presentation.

Commercial evaluators should also watch for hidden baseline distortion. Some vendors compare a current system to an outdated reference point to create an artificial performance gap. Others present best-case rather than average-case values. A realistic benchmarking report should distinguish peak performance, normal operating performance, and degraded or stress-condition performance where relevant.

Risk control points for procurement teams

The table below outlines practical actions that help buyers reduce the risk of relying on weak or incomplete benchmarking data during supplier selection and implementation planning.

| Risk Area | Warning Sign | Risk Reduction Action |
|---|---|---|
| Unclear Testing Scope | No sample size, no environment details, no test duration | Request full method statement and reject non-repeatable data |
| Weak Field Relevance | Lab result only, no pilot or site-like verification | Run a pilot test or field validation before final approval |
| Selective Comparison | Only one favorable metric is highlighted | Score vendors across 4 to 6 weighted criteria, not one headline value |
| Contract Misalignment | Benchmark report is separate from acceptance terms | Insert benchmark thresholds into commissioning and warranty clauses |

The main lesson is that benchmarking data should reduce ambiguity, not decorate it. Once buyers connect benchmark values to field verification, contract language, and maintenance expectations, the report becomes commercially useful rather than merely informative.
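Scoring vendors across several weighted criteria, instead of one headline value, can be done with a normalized weighted sum. The sketch below applies min-max scaling per criterion so that raw units do not dominate, and flips the scale where lower is better. The criteria, weights, and vendor values are all hypothetical.

```python
# Hypothetical criteria: (weight, higher_is_better). Weights sum to 1.
CRITERIA = {
    "uptime (%)": (0.20, True),
    "response time (s)": (0.25, False),
    "integration points supported": (0.25, True),
    "maintenance interval (months)": (0.15, True),
    "retraining time (days)": (0.15, False),
}

VENDORS = {
    "Vendor A": {"uptime (%)": 99.6, "response time (s)": 0.4, "integration points supported": 3,
                 "maintenance interval (months)": 6, "retraining time (days)": 10},
    "Vendor B": {"uptime (%)": 99.9, "response time (s)": 0.7, "integration points supported": 6,
                 "maintenance interval (months)": 12, "retraining time (days)": 4},
}


def weighted_scores(vendors: dict[str, dict[str, float]]) -> dict[str, float]:
    """Min-max normalize each criterion across vendors, then apply weights (0..1 scale)."""
    scores = {name: 0.0 for name in vendors}
    for criterion, (weight, higher_is_better) in CRITERIA.items():
        values = [v[criterion] for v in vendors.values()]
        low, high = min(values), max(values)
        for name, v in vendors.items():
            if high == low:
                normalized = 1.0  # no spread: every vendor scores equally on this criterion
            else:
                normalized = (v[criterion] - low) / (high - low)
                if not higher_is_better:
                    normalized = 1.0 - normalized
            scores[name] += weight * normalized
    return scores


print(weighted_scores(VENDORS))
```

Vendor A leads only on response time, the kind of headline figure a brochure would highlight, and scores 0.25; Vendor B scores 0.75 across the full set. With only two vendors, min-max scaling reduces each criterion to 0 or 1; scoring against the documented thresholds is an equally reasonable alternative.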

A short buyer warning list

- Do not compare reports created under different climates or occupancy assumptions without adjustment.

- Do not rely on one benchmark if the asset affects energy, safety, and integration at the same time.

- Do not finalize a large order before confirming whether benchmarked performance is batch-repeatable.

- Do not separate benchmarking review from commissioning criteria and after-sales planning.

For distributors and agents, these controls are equally important. They protect downstream market reputation and help build a portfolio of products that can stand up to technical scrutiny in different destination environments.

FAQ: Practical Questions About Benchmarking Data Quality

How much benchmarking data is enough before shortlisting a supplier?

For early-stage screening, 3 to 5 core metrics are usually enough if they are relevant, comparable, and tied to your project use case. For final approval, most buyers need a deeper set of data covering performance, tolerance, environmental fit, and maintenance implications. In practice, the threshold rises from basic comparison to decision-grade evidence as project value and system complexity increase.

What is the difference between benchmarking software and a benchmarking report?

Benchmarking software helps organize, normalize, and compare data across suppliers or asset types. A benchmarking report is the interpreted output that explains what the data means under specific conditions. Good software improves consistency, but reliability still depends on sample quality, testing discipline, and engineering interpretation.

Should procurement teams always ask for field validation?

Not for every low-risk item, but field validation is strongly recommended for high-impact assets such as modular accommodations, smart control systems, and leisure hardware with safety or uptime implications. A short pilot of 2 to 6 weeks can reveal integration problems that a static lab benchmark may miss.

Which teams should review benchmarking analysis?

The most effective review usually includes procurement, engineering, operations, and commercial evaluation. In channel-driven projects, distributors or local service partners should also participate because spare parts, training cycles, and local installation capability often influence whether benchmarked performance can be achieved after delivery.

How can TVM support buyers and market intermediaries?

TerraVista Metrics supports decision-making by translating raw engineering metrics into structured benchmarking analysis for tourism and hospitality supply chains. This is especially useful when buyers need clearer visibility into prefab performance, smart hotel system behavior, or durability characteristics that are not obvious from standard marketing materials alone.

Reliable benchmarking data is not just a technical resource. It is a commercial safeguard for anyone comparing suppliers, planning infrastructure investment, or building long-term tourism assets. When your benchmarking process is structured, scenario-based, and tied to acceptance criteria, every benchmarking report becomes more useful for selection, negotiation, and operational planning.

For information researchers, procurement professionals, evaluators, and channel partners, the priority is clear: use benchmarking software and benchmarking tools to organize evidence, but rely on disciplined benchmarking analysis to decide what that evidence actually means. That is where stronger procurement outcomes begin.

If you need a clearer way to compare tourism infrastructure hardware, hospitality systems, or manufacturing-backed performance data, contact TerraVista Metrics to discuss your project, request a tailored benchmarking framework, or explore a customized evaluation whitepaper for your next sourcing decision.

Recommended News

![When enterprise software becomes too costly to maintain When enterprise software becomes too costly to maintain]() May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.

May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.![Sheet Metal Thickness Changes More Than Strength Sheet Metal Thickness Changes More Than Strength]() May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.

May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.![Why smart hotel bulk order pricing varies so much Why smart hotel bulk order pricing varies so much]() May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.

May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.![How to estimate smart hotel cost before budgeting How to estimate smart hotel cost before budgeting]() May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.

May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.![Why smart hotel price can vary more than expected Why smart hotel price can vary more than expected]() May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.

May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.![How to Use Ecoinvent Data Without Common LCA Errors How to Use Ecoinvent Data Without Common LCA Errors]() May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.

May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.![Why emerging markets still matter in 2026 growth planning Why emerging markets still matter in 2026 growth planning]() May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.

May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.![Tourism Development Risks to Watch Before New Site Investment Tourism Development Risks to Watch Before New Site Investment]() May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.

May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.![How AI for business helps teams make faster decisions How AI for business helps teams make faster decisions]() May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.

May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.![Is container shipping still the safest low cost option Is container shipping still the safest low cost option]() May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.

May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.![How to compare warehousing solutions for faster growth How to compare warehousing solutions for faster growth]() May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.

May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.![Why whiteboard markers fail faster than most teams expect Why whiteboard markers fail faster than most teams expect]() May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.

May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.![School equipment costs more when these basics get missed School equipment costs more when these basics get missed]() May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.

May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.![Back to school trends that may change buying plans Back to school trends that may change buying plans]() May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.

May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.![Office supplies costs rise when small choices go wrong Office supplies costs rise when small choices go wrong]() May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.

May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.![Agri-Supply Chain Delays That Hurt Freshness and Profit Agri-Supply Chain Delays That Hurt Freshness and Profit]() May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.

May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.![Are Refurbished Printers and Scanners Worth the Risk? Are Refurbished Printers and Scanners Worth the Risk?]() May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.

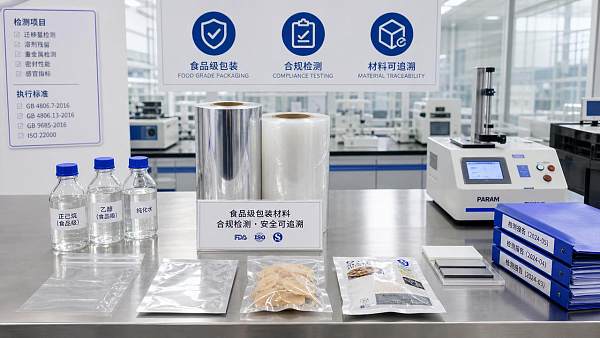

May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.![Why Food Grade Packaging Fails Compliance Checks Why Food Grade Packaging Fails Compliance Checks]() May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.

May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.![Bakery Equipment Buying Mistakes That Hurt Output Quality Bakery Equipment Buying Mistakes That Hurt Output Quality]() May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.

May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.![Why Agricultural Chemicals Fail Even When the Label Is Followed Why Agricultural Chemicals Fail Even When the Label Is Followed]() May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.

May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.![Industrial and Manufacturing Orders Are Recovering Unevenly in 2026 Industrial and Manufacturing Orders Are Recovering Unevenly in 2026]() May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.

May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.![Grain Processing Capacity Is Growing, but Where Are the Bottlenecks? Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?]() May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.

May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.![Cosmetic ingredients under review as safety standards tighten Cosmetic ingredients under review as safety standards tighten]() May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.

May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.![Industrial & Manufacturing trends reshaping supplier selection Industrial & Manufacturing trends reshaping supplier selection]() May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.

May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.
