
TerraVista Metrics (TVM) Industry News

How to Avoid Common Benchmarking Analysis Mistakes

Jun 09, 2026

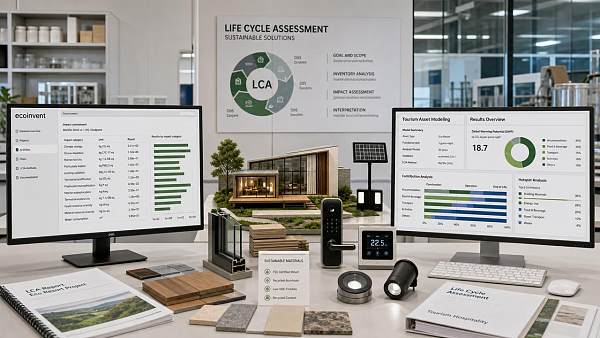

Avoiding common benchmarking analysis mistakes is essential when comparing suppliers, systems, and performance claims in tourism infrastructure. With suitable benchmarking software and reliable benchmarking data, buyers can tighten the benchmarking process, produce a credible benchmarking report, and make sharper comparison decisions that support sustainable tourism development and system integration services.

For procurement teams, project evaluators, distributors, and market researchers, benchmarking is no longer a side task completed after a shortlist is formed. In tourism and hospitality infrastructure, it is often the difference between a 10-year asset that performs as promised and a high-visibility installation that creates maintenance costs, guest complaints, and integration delays within the first 12 months.

This matters even more when procurement involves prefabricated tourism cabins, smart hotel networks, amusement hardware, energy systems, or cross-border supply chain decisions. TerraVista Metrics (TVM) focuses on raw engineering metrics rather than supplier storytelling, helping decision-makers compare thermal performance, data throughput, material fatigue, and compliance readiness with clearer evidence.

The most expensive benchmarking errors are usually not dramatic. They appear in small assumptions: inconsistent testing conditions, vague KPIs, poor sample selection, or overreliance on sales brochures. The result is a benchmarking comparison that looks complete on paper but fails to guide a sound purchase decision.

Why Benchmarking Analysis Fails in Tourism Infrastructure Procurement

Benchmarking analysis often fails because buyers compare results obtained under different conditions. A prefab glamping unit tested at 20°C ambient temperature cannot be fairly compared with another unit tested at 32°C and 80% humidity. The same issue appears in IoT hotel systems, where one vendor reports peak throughput while another reports sustained throughput over 24 hours. Without normalized conditions, benchmarking data becomes misleading before any real analysis begins.

Another common problem is choosing the wrong benchmark objective. Many teams say they want the “best supplier,” but that is too broad. A tourism developer may actually need the lowest lifecycle maintenance burden over 5–7 years, while a resort operator may prioritize integration speed within a 6–10 week deployment window. When the benchmarking process is not linked to a real business outcome, the final benchmarking report loses decision value.

In hospitality projects, multi-system dependency also creates distortion. A smart room control platform may look strong in isolation, yet underperform once connected to PMS, HVAC, access control, and guest mobile applications. Benchmarking tools that assess only one layer of performance can miss system-wide bottlenecks. This is especially risky when procurement directors evaluate products from multiple manufacturers and expect plug-and-play compatibility.

A final reason is weak source validation. Suppliers may provide internal lab results, but internal tests are not always conducted under comparable thresholds, sample sizes, or cycle counts. If a material fatigue test covers 5,000 cycles for one component and 20,000 cycles for another, the benchmarking comparison may exaggerate durability differences or hide long-term failure patterns.

Typical causes behind poor benchmarking decisions

- Unclear comparison baseline, such as different environmental temperatures, network loads, or user volumes.

- KPIs focused on purchase price instead of total operating cost across 3, 5, or 10 years.

- Testing samples that are too small, such as 1 pilot unit used to represent a 50-unit rollout.

- Ignoring integration risk, firmware compatibility, and local compliance requirements.

For information researchers and channel partners, these mistakes also affect market positioning. A distributor that benchmarks only catalog specifications may overestimate which products are truly export-ready or region-ready. In practice, benchmark quality should improve confidence not only in the product, but also in delivery predictability, upgrade path, and after-sales service response.

The Most Common Benchmarking Analysis Mistakes and Their Commercial Impact

The first major mistake is comparing marketing claims instead of measurable outputs. In tourism infrastructure, phrases such as “energy-saving,” “smart-ready,” or “high durability” are too broad to support procurement. Buyers need measurable ranges: thermal transmittance, latency under load, power draw per room, corrosion resistance, cycle fatigue, or failure rate per 1,000 operating hours. Without those values, benchmarking software can only organize claims, not validate them.
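As a small illustration of turning a claim into a measurable value, the sketch below normalizes raw failure counts to a rate per 1,000 operating hours so that suppliers with different test durations can be compared. The function name and the figures are hypothetical and are not taken from any real supplier data.

```python
def failures_per_1000_hours(failures: int, operating_hours: float) -> float:
    """Normalize a failure count to failures per 1,000 operating hours."""
    if operating_hours <= 0:
        raise ValueError("operating_hours must be positive")
    return failures / operating_hours * 1000


# Hypothetical figures: B reports fewer failures, but over far fewer hours.
rate_a = failures_per_1000_hours(failures=3, operating_hours=12_000)
rate_b = failures_per_1000_hours(failures=2, operating_hours=4_000)
print(f"A: {rate_a:.2f}  B: {rate_b:.2f} failures per 1,000 h")
```

With these assumed inputs, the supplier with the lower raw failure count turns out to have the higher normalized rate, which is the kind of difference a brochure claim would hide.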

The second mistake is relying on a single benchmark dimension. A glamping unit may show excellent insulation but poor structural performance in coastal humidity. A hotel IoT network may deliver strong dashboard visibility but weak device recovery after outages longer than 15 minutes. Strong procurement decisions require at least 4 dimensions: performance, durability, compliance, and integration readiness.

The third mistake is using outdated benchmarking data. In smart hospitality, firmware, protocols, chipsets, and network architecture can change in 6–12 months. A benchmarking report from two years ago may still be useful for directional understanding, but it should not be treated as current evidence for a live purchase process. This issue is especially important for distributors and agents selecting long-term product lines.

The fourth mistake is ignoring lifecycle cost. A supplier that appears 8% cheaper at the order stage may become 15%–25% more expensive after installation rework, maintenance visits, spare part replacement, and energy inefficiency. In tourism projects where uptime affects guest reviews and occupancy performance, benchmarking comparison must extend beyond acquisition cost.
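The arithmetic behind that reversal can be sketched as follows. All figures are hypothetical and chosen only to show how an 8% saving at the order stage can turn into a higher total over five years; the class `SupplierCost`, the function `total_cost`, and the cost categories are assumptions, and the calculation is undiscounted for simplicity.

```python
from dataclasses import dataclass


@dataclass
class SupplierCost:
    """Hypothetical cost profile for one supplier (same currency throughout)."""
    purchase: float            # order-stage price
    rework: float              # one-off installation rework
    annual_maintenance: float  # maintenance visits and spare parts per year
    annual_energy: float       # energy cost per year


def total_cost(cost: SupplierCost, years: int) -> float:
    """Undiscounted total cost of ownership over the given number of years."""
    recurring = cost.annual_maintenance + cost.annual_energy
    return cost.purchase + cost.rework + years * recurring


# Illustrative numbers only: supplier B is 8% cheaper at the order stage.
supplier_a = SupplierCost(purchase=100_000, rework=0,
                          annual_maintenance=4_000, annual_energy=6_000)
supplier_b = SupplierCost(purchase=92_000, rework=10_000,
                          annual_maintenance=8_000, annual_energy=7_600)

years = 5
tco_a = total_cost(supplier_a, years)
tco_b = total_cost(supplier_b, years)
premium = (tco_b - tco_a) / tco_a  # relative extra cost of B over A
print(f"A: {tco_a:,.0f}  B: {tco_b:,.0f}  B vs A: {premium:+.1%}")
```

A real model would likely add discounting, downtime cost, and residual value, but even this simple version makes the order-stage price only one input among several.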

Mistakes that often appear in supplier evaluation

The table below shows how common benchmarking analysis mistakes translate into procurement risk in tourism and hospitality projects.

| Benchmarking mistake | What it looks like in practice | Likely business impact |
| --- | --- | --- |
| Non-standard test conditions | Suppliers submit results from different temperatures, loads, or usage scenarios | False ranking, poor product fit, costly revalidation during tender stage |
| Price-only comparison | Procurement score gives 60%–70% weight to unit cost and little weight to maintenance | Higher total cost over 3–5 years and elevated service burden |
| No integration benchmark | Devices pass lab tests but are not checked against live PMS, HVAC, or access systems | Deployment delays, middleware costs, unstable guest-facing services |
| Outdated data source | Using performance reports older than 12–24 months for current procurement | Selection based on obsolete hardware or superseded software versions |

The key takeaway is that a poor benchmarking process rarely fails at the spreadsheet level alone. It fails when data inputs do not reflect real operating conditions, or when the scoring model omits the variables that determine project success after installation.

How to spot weak benchmarking reports quickly

- If test conditions are missing, the report is incomplete.

- If performance numbers lack units, thresholds, or cycle counts, the comparison is weak.

- If no section addresses maintenance, compatibility, or carbon-related metrics, the report is unlikely to support long-term decision-making.

How to Build a Reliable Benchmarking Process from the Start

A reliable benchmarking process starts with a controlled scope. Instead of asking which supplier is “best,” define 3–5 procurement scenarios. For example: eco-lodge deployment in humid coastal zones, urban hotel retrofit with live occupancy, or amusement equipment sourcing for high-cycle public use. Each scenario should have its own thresholds for thermal efficiency, uptime, installation time, network stability, and maintenance frequency.

Next, standardize benchmark inputs. If you are evaluating cabin envelope performance, set the same temperature range, humidity range, panel thickness assumption, and occupancy load. If you are evaluating a hotel IoT network, set the same device count, packet load, recovery time, and integration endpoints. Benchmarking tools are only as good as the consistency of the test design feeding them.
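One way to enforce matching inputs is to check submitted test conditions against an agreed tolerance before any scoring takes place. The sketch below is a minimal example under assumed names: the condition keys, the tolerance values, and the function `condition_mismatches` are all hypothetical. The sample data mirrors the cabin example given earlier in the article (20°C versus 32°C at 80% humidity).

```python
# Hypothetical tolerances: maximum allowed spread per test condition.
TOLERANCES = {"ambient_temp_c": 2.0, "humidity_pct": 5.0}


def condition_mismatches(results: dict[str, dict[str, float]],
                         tolerances: dict[str, float]) -> list[str]:
    """List each condition that is unreported or varies more than allowed."""
    problems = []
    for condition, tolerance in tolerances.items():
        values = {}
        for supplier, conditions in results.items():
            if condition not in conditions:
                problems.append(f"{supplier}: {condition} not reported")
            else:
                values[supplier] = conditions[condition]
        if values:
            spread = max(values.values()) - min(values.values())
            if spread > tolerance:
                problems.append(
                    f"{condition}: spread {spread:g} exceeds tolerance {tolerance:g}"
                )
    return problems


# Supplier A did not state humidity; supplier B tested hotter and more humid.
submitted = {
    "supplier_a": {"ambient_temp_c": 20.0},
    "supplier_b": {"ambient_temp_c": 32.0, "humidity_pct": 80.0},
}
problems = condition_mismatches(submitted, TOLERANCES)
for problem in problems:
    print(problem)
```

Treating an unreported condition as a problem in its own right is a deliberate choice here: a missing value cannot be shown to match anything.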

Third, create a weighted scoring model. Many B2B teams use a 100-point matrix, but what matters is how the points are distributed. For a premium hospitality build, a common weighting may be 30 points for technical performance, 25 for durability, 20 for integration readiness, 15 for compliance and sustainability documentation, and 10 for delivery and service support. The weighting should reflect business priorities, not generic templates.
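The 100-point matrix described above can be sketched in a few lines. The weights come from the premium hospitality example in the preceding paragraph; the criterion keys, the 0.0 to 1.0 rating scale, the function `weighted_score`, and both sets of supplier ratings are assumptions made for illustration.

```python
# Weights from the premium hospitality example (points out of 100).
WEIGHTS = {
    "technical_performance": 30,
    "durability": 25,
    "integration_readiness": 20,
    "compliance_sustainability": 15,
    "delivery_service": 10,
}


def weighted_score(ratings: dict[str, float],
                   weights: dict[str, int] = WEIGHTS) -> float:
    """Combine per-criterion ratings (0.0 to 1.0) into a score out of 100."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for name in weights:
        if not 0.0 <= ratings[name] <= 1.0:
            raise ValueError(f"rating for {name} must be between 0 and 1")
    return sum(weights[name] * ratings[name] for name in weights)


# Hypothetical ratings: A leads on raw performance, B on integration.
supplier_a = {"technical_performance": 0.9, "durability": 0.8,
              "integration_readiness": 0.5, "compliance_sustainability": 0.7,
              "delivery_service": 0.6}
supplier_b = {"technical_performance": 0.7, "durability": 0.8,
              "integration_readiness": 0.9, "compliance_sustainability": 0.8,
              "delivery_service": 0.8}

score_a = weighted_score(supplier_a)
score_b = weighted_score(supplier_b)
print(f"A: {score_a:.1f}  B: {score_b:.1f}")
```

Rejecting incomplete ratings instead of silently scoring them as zero keeps a missing data point visible as a gap to clarify with the supplier, not as a hidden penalty.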

Finally, validate with cross-functional review. Procurement, engineering, operations, and commercial teams should each review the benchmarking report before approval. A supplier that satisfies engineering but cannot meet regional service response within 48–72 hours may still be the wrong choice. A benchmarking comparison becomes more robust when multiple departments test the same evidence from different angles.

A practical 5-step workflow

- Define use case: identify asset type, guest volume, climate, and expected service life.

- Set benchmark criteria: establish 8–12 measurable indicators with units and thresholds.

- Collect comparable data: require matching test conditions and version-controlled documentation.

- Score and verify: apply weighted evaluation and request clarification where gaps exceed agreed tolerances.

- Convert findings into action: issue a benchmarking report tied to shortlist, negotiation, or pilot decision.

TVM-style benchmarking is especially useful here because it frames tourism procurement as an engineering and systems problem rather than a branding exercise. That shift helps buyers compare a glamping structure, a network backbone, or an amusement component on objective terms that support architects, operators, and channel intermediaries alike.

Recommended metrics by project type

The right benchmarking data depends on the asset under review. The matrix below shows a practical way to align project type with the indicators that matter most.

| Project category | Primary benchmark indicators | Typical decision threshold |
| --- | --- | --- |
| Prefab tourism cabins | Thermal performance, moisture resistance, installation cycle, maintenance interval | Deployment within 2–6 weeks and stable performance across seasonal changes |
| Smart hotel IoT systems | Latency, throughput, device recovery time, API compatibility, cybersecurity update cycle | Low-latency response and reliable recovery after outage events |
| Amusement and leisure hardware | Material fatigue, corrosion resistance, inspection interval, spare part lead time | Predictable maintenance planning and acceptable long-cycle wear profile |
| Integrated resort infrastructure | Interoperability, carbon documentation, upgrade pathway, service SLA | Cross-system compatibility and support response within agreed operating windows |

This structure prevents a common error: forcing every tourism asset into the same benchmarking template. Good benchmarking software should adapt to the asset class and business outcome, not flatten different systems into one simplistic score.

What Procurement Teams Should Verify Before Accepting Benchmarking Data

Before accepting any benchmarking report, procurement teams should check whether the data is traceable. At minimum, every result should identify test date, product version, configuration status, operating conditions, and measurement method. If those elements are missing, benchmarking comparison becomes difficult to audit later during negotiation, installation, or dispute resolution.
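A traceability check of this kind is simple to automate. The sketch below assumes each benchmark result arrives as a dictionary; the field names, the function `missing_traceability`, and the sample record are hypothetical and only mirror the five elements listed in the paragraph above.

```python
# Field names are assumptions that mirror the five elements listed above.
REQUIRED_FIELDS = (
    "test_date",
    "product_version",
    "configuration",
    "operating_conditions",
    "measurement_method",
)


def missing_traceability(record: dict) -> list[str]:
    """Return the required fields that are absent or empty in a record."""
    return [field for field in REQUIRED_FIELDS if not record.get(field)]


# Hypothetical record: configuration is absent and the method is blank.
record = {
    "metric": "latency_ms",
    "value": 42,
    "test_date": "2026-03-14",
    "product_version": "fw 2.1.0",
    "operating_conditions": {"device_count": 300},
    "measurement_method": "",
}
gaps = missing_traceability(record)
print(gaps)
```

Any non-empty result would be a reason to send the record back to the supplier before it enters the comparison.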

Buyers should also verify whether the sample represents the intended rollout scale. A successful test on 3 rooms does not always scale to a 300-room property. Likewise, a strong pilot for 2 cabins may not reveal installation bottlenecks that appear in a 40-unit site deployment. Benchmarking analysis should include some indication of scale sensitivity, even if that comes through scenario modeling rather than full field rollout.

For sustainability-oriented tourism projects, carbon and compliance evidence must be reviewed alongside performance data. A product can look efficient in operation yet create procurement obstacles if documentation for material source, environmental declarations, or regional conformity is incomplete. Benchmarking data should support both engineering review and approval workflow.

Commercial teams should also ask whether the supplier can maintain the benchmarked performance across batches. Manufacturing consistency matters. If one sample performs well but production variability is high, the benchmark result may not represent actual supply quality. For dealers and agents, batch consistency is often as important as the headline metric itself.

Pre-acceptance checklist for benchmarking comparison

- Check version control: confirm hardware revision, firmware version, and accessory configuration.

- Check test boundary: verify temperature, load, cycle count, humidity, and user volume assumptions.

- Check scale relevance: ensure sample size or simulation logic reflects the target deployment size.

- Check commercial usability: confirm lead time, spare part access, and service response are compatible with the project schedule.

- Check document integrity: ensure the benchmarking report can be reviewed by procurement, technical, and management stakeholders without missing definitions.

Questions worth asking suppliers directly

Ask whether the data comes from internal tests, third-party labs, field trials, or mixed sources. Ask how often the benchmark is updated and whether the reported figures represent average, peak, or minimum performance. Also ask what changed between the last two benchmark cycles. Small changes in materials, firmware, or architecture can shift outcomes significantly, especially over 12-month product development cycles.

When buyers ask these questions early, the benchmarking process becomes a stronger negotiation and risk-control tool. It also reduces the chance of selecting products that appear attractive in presentations but generate hidden implementation costs later.

FAQ: Benchmarking Analysis Questions Buyers Often Ask

How many indicators should a benchmarking report include?

For most tourism infrastructure purchases, 8–12 indicators are enough to make the report practical and decision-oriented. Fewer than 5 indicators usually miss operational risk. More than 15 can become hard to manage unless the asset is highly technical, such as a multi-layer smart building platform with network, controls, and integration dependencies.

What is the right update cycle for benchmarking data?

For digitally enabled hospitality systems, reviewing data every 6–12 months is usually reasonable. For slower-moving structural products, a 12–24 month review cycle may be acceptable if materials, production method, and compliance status have not changed. However, any major firmware revision, component substitution, or design change should trigger immediate re-evaluation.
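These review rules can be expressed as a small freshness check. The sketch below uses the upper bounds of the two ranges given above (12 months for digital systems, 24 months for structural products) as cut-offs; that choice, the category labels, and the function names are assumptions, and the dates are illustrative.

```python
from datetime import date

# Maximum data age in months, using the upper bounds of the ranges above.
MAX_AGE_MONTHS = {"digital": 12, "structural": 24}


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from earlier to later."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1  # the final month is not yet complete
    return months


def needs_reevaluation(test_date: date, today: date, asset_kind: str,
                       changed_since_test: bool = False) -> bool:
    """True if the data is past its review window or the product has changed."""
    if changed_since_test:
        # Firmware revision, component substitution, or design change.
        return True
    return months_between(test_date, today) > MAX_AGE_MONTHS[asset_kind]


# Illustrative dates: data that is 16 whole months old on the review date.
tested = date(2025, 1, 15)
review_day = date(2026, 6, 9)
print(needs_reevaluation(tested, review_day, "digital"))
print(needs_reevaluation(tested, review_day, "structural"))
print(needs_reevaluation(tested, review_day, "structural", changed_since_test=True))
```

A stricter team could swap in the lower bounds (6 and 12 months) without changing the logic.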

Is benchmarking software enough on its own?

No. Benchmarking software improves consistency, scoring logic, and reporting speed, but it cannot correct weak source data or poorly defined KPIs. The software should support the methodology, not replace it. Buyers still need disciplined benchmark design, traceable measurements, and a review process that connects technical results with commercial reality.

Which benchmarking mistake is the most costly?

In many B2B tourism projects, the most costly mistake is ignoring integration. A component may pass individual tests yet fail when linked to the surrounding ecosystem. Integration failures can delay launch by 2–8 weeks, increase commissioning costs, and disrupt guest experience at the exact moment the property is trying to build market reputation.

Avoiding common benchmarking analysis mistakes requires more than a better spreadsheet. It requires comparable conditions, current benchmarking data, scenario-based KPIs, and a disciplined benchmarking process that reflects the full lifecycle of tourism assets. When buyers evaluate suppliers, structures, and smart systems through measurable engineering logic, benchmarking comparison becomes more credible and commercially useful.

For developers, operators, procurement teams, and channel partners, TerraVista Metrics supports this decision model by turning complex supplier claims into structured evidence across durability, integration, sustainability, and operational performance. If you need a clearer benchmarking report, a tailored comparison framework, or support in assessing tourism infrastructure options with greater precision, contact us to discuss your project and explore a customized solution.

Recommended News

![When enterprise software becomes too costly to maintain When enterprise software becomes too costly to maintain]() May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.

May 31, 2026When enterprise software becomes too costly to maintainEnterprise software maintenance costs rising? Learn how to spot risk, benchmark performance, and decide when to maintain, modernize, or replace.![Sheet Metal Thickness Changes More Than Strength Sheet Metal Thickness Changes More Than Strength]() May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.

May 29, 2026Sheet Metal Thickness Changes More Than StrengthSheet metal thickness affects strength, weight, corrosion, noise, cost, and compliance. Use this checklist to choose safer, longer-lasting panels for tourism assets.![Why smart hotel bulk order pricing varies so much Why smart hotel bulk order pricing varies so much]() May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.

May 23, 2026Why smart hotel bulk order pricing varies so muchSmart hotel bulk order pricing varies by integration, cybersecurity, compliance, software, and support. Learn how to compare true lifecycle value and avoid hidden costs.![How to estimate smart hotel cost before budgeting How to estimate smart hotel cost before budgeting]() May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.

May 14, 2026How to estimate smart hotel cost before budgetingSmart hotel cost explained for finance teams: learn how to estimate hardware, software, integration, cybersecurity, and lifecycle expenses before budgeting with confidence.![Why smart hotel price can vary more than expected Why smart hotel price can vary more than expected]() May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.

May 06, 2026Why smart hotel price can vary more than expectedSmart hotel price can vary due to hidden tech, energy systems, security, and maintenance. Discover what really drives rates and how to spot the best value before you book.![How to Use Ecoinvent Data Without Common LCA Errors How to Use Ecoinvent Data Without Common LCA Errors]() May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.

May 22, 2026How to Use Ecoinvent Data Without Common LCA ErrorsEcoinvent mistakes can weaken any LCA. Learn how to choose the right datasets, set accurate boundaries, and improve decision-ready results for tourism, hospitality, and mixed-use projects.![Why emerging markets still matter in 2026 growth planning Why emerging markets still matter in 2026 growth planning]() May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.

May 22, 2026Why emerging markets still matter in 2026 growth planningEmerging markets still matter in 2026 growth planning. Learn how to assess demand, risk, compliance, and procurement with TerraVista Metrics for smarter expansion decisions.![Tourism Development Risks to Watch Before New Site Investment Tourism Development Risks to Watch Before New Site Investment]() May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.

May 22, 2026Tourism Development Risks to Watch Before New Site InvestmentTourism development risks can derail new site investment fast. Discover a practical checklist to test infrastructure, climate resilience, compliance, and lifecycle costs before you commit.![How AI for business helps teams make faster decisions How AI for business helps teams make faster decisions]() May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.

May 20, 2026How AI for business helps teams make faster decisionsAI for business helps teams make faster, evidence-based decisions by turning complex technical data into clear, comparable insights—reducing risk, improving consistency, and speeding approvals.![Is container shipping still the safest low cost option Is container shipping still the safest low cost option]() May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.

May 19, 2026Is container shipping still the safest low cost optionContainer shipping is still a leading low-cost option when cargo, packaging, route risk, and delivery timing are planned right. Learn the checklist that prevents damage and delays.![How to compare warehousing solutions for faster growth How to compare warehousing solutions for faster growth]() May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.

May 18, 2026How to compare warehousing solutions for faster growthWarehousing solutions comparison made simple: learn how to assess scalability, integration, cost, and risk to support faster growth and smarter supply chain decisions.![Why whiteboard markers fail faster than most teams expect Why whiteboard markers fail faster than most teams expect]() May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.

May 17, 2026Why whiteboard markers fail faster than most teams expectWhiteboard markers fail early due to weak cap seals, heat, poor storage, and damaged boards. Learn how teams can cut waste, improve reliability, and choose longer-lasting markers.![School equipment costs more when these basics get missed School equipment costs more when these basics get missed]() May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.

May 17, 2026School equipment costs more when these basics get missedSchool equipment may look affordable at first, but hidden durability, compliance, and integration gaps can drive costs up. Learn how to buy smarter and avoid costly mistakes.![Back to school trends that may change buying plans Back to school trends that may change buying plans]() May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.

May 16, 2026Back to school trends that may change buying plansBack to school trends are reshaping buying plans across travel and hospitality. Discover how data-driven procurement improves flexibility, efficiency, and guest experience.![Office supplies costs rise when small choices go wrong Office supplies costs rise when small choices go wrong]() May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.

May 16, 2026Office supplies costs rise when small choices go wrongOffice supplies costs often rise through small, untracked buying decisions. Learn how to reduce waste, improve purchasing control, and protect budgets with smarter standards.![Agri-Supply Chain Delays That Hurt Freshness and Profit Agri-Supply Chain Delays That Hurt Freshness and Profit]() May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.

May 15, 2026Agri-Supply Chain Delays That Hurt Freshness and ProfitAgri-supply chain delays cut freshness, raise spoilage, and drain margins. Discover key bottlenecks, profit risks, and data-driven fixes for distributors and hospitality buyers.![Are Refurbished Printers and Scanners Worth the Risk? Are Refurbished Printers and Scanners Worth the Risk?]() May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.

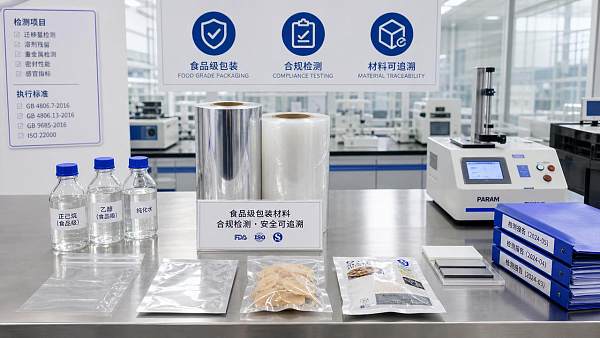

May 15, 2026Are Refurbished Printers and Scanners Worth the Risk?Printers and scanners: are refurbished models a smart savings move or a hidden liability? Learn the key risks, benefits, and buying checks before you invest.![Why Food Grade Packaging Fails Compliance Checks Why Food Grade Packaging Fails Compliance Checks]() May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.

May 14, 2026Why Food Grade Packaging Fails Compliance ChecksFood grade packaging fails compliance checks when migration risks, documentation gaps, and weak traceability go unnoticed. Learn the hidden causes and smarter verification steps.![Bakery Equipment Buying Mistakes That Hurt Output Quality Bakery Equipment Buying Mistakes That Hurt Output Quality]() May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.

May 13, 2026Bakery Equipment Buying Mistakes That Hurt Output QualityBakery equipment buying mistakes can quietly damage consistency, efficiency, and product quality. Learn what to check before investing to avoid waste, downtime, and costly output issues.![Why Agricultural Chemicals Fail Even When the Label Is Followed Why Agricultural Chemicals Fail Even When the Label Is Followed]() May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.

May 13, 2026Why Agricultural Chemicals Fail Even When the Label Is FollowedAgricultural chemicals can fail even when labels are followed. Discover how water, weather, equipment, and mixing issues reduce results—and how to improve performance fast.![Industrial and Manufacturing Orders Are Recovering Unevenly in 2026 Industrial and Manufacturing Orders Are Recovering Unevenly in 2026]() May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.

May 12, 2026Industrial and Manufacturing Orders Are Recovering Unevenly in 2026Industrial & Manufacturing orders are recovering unevenly in 2026. Learn how to assess supplier risk, compliance, lead times, and performance before sourcing critical projects.![Grain Processing Capacity Is Growing, but Where Are the Bottlenecks? Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?]() May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.

May 12, 2026Grain Processing Capacity Is Growing, but Where Are the Bottlenecks?Grain processing capacity is rising, but hidden bottlenecks still limit throughput. Discover key constraints, practical checks, and smarter ways to improve efficiency.![Cosmetic ingredients under review as safety standards tighten Cosmetic ingredients under review as safety standards tighten]() May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.

May 09, 2026Cosmetic ingredients under review as safety standards tightenCosmetic ingredients face stricter safety review as global standards rise. Learn how to assess compliance, traceability, and supplier risk to build safer, audit-ready products.![Industrial & Manufacturing trends reshaping supplier selection Industrial & Manufacturing trends reshaping supplier selection]() May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.

May 09, 2026Industrial & Manufacturing trends reshaping supplier selectionIndustrial & Manufacturing trends are reshaping supplier selection in tourism infrastructure. See how buyers compare durability, integration, and compliance to choose smarter partners.

Quarterly Executive Summaries Delivered Directly.

Join 50,000+ industry leaders who receive our proprietary market analysis and policy outlooks before they hit the public library.