
Why Benchmarking Data Often Leads You Wrong

Jun 09, 2026

Benchmarking data can sharpen decisions or quietly distort them. Buyers, evaluators, and channel partners in tourism infrastructure often rely on generic benchmarking software or a surface-level comparison, and that habit can hide critical gaps in durability, integration, and compliance. This article explains why flawed benchmarking analysis misleads procurement. It also shows how a rigorous benchmarking process produces more reliable reports, better sourcing decisions, and more dependable sustainable tourism development.

Why generic benchmarking data fails in tourism infrastructure procurement

Many teams assume benchmarking data is objective by default. In practice, poor benchmarking analysis often starts with the wrong testing frame. A glamping cabin, an AI-enabled hotel control system, and an amusement hardware component may all be labeled as “tourism assets,” yet each carries different stress cycles, environmental exposure, energy expectations, and integration demands. When the benchmarking process compresses these variables into a single score, buyers receive a neat report that is easy to compare but hard to trust.

This problem becomes more serious in cross-border sourcing. Procurement teams evaluating manufacturing partners in Asia often review benchmarking reports built for general industrial categories rather than destination-grade use cases. Typical blind spots include thermal performance under seasonal swings of 10°C–35°C, corrosion behavior in coastal humidity, and network stability under 24/7 guest occupancy loads. These are not minor details. They shape maintenance cost, downtime risk, and reputation exposure over a 3–5 year operating window.

Information researchers and business evaluators are especially vulnerable when they must compare multiple vendors within 2–4 weeks. Under deadline pressure, they often default to benchmarking tools that prioritize speed over technical context. The result is a benchmarking comparison that looks complete on paper but excludes fatigue thresholds, carbon documentation, interoperability constraints, or installation variance. A fast decision then turns into a slow operational problem.

TerraVista Metrics (TVM) addresses this gap by treating benchmarking as a structural filter rather than a marketing checklist. Instead of asking whether a product looks competitive, TVM focuses on whether it performs within defined environmental, engineering, and procurement conditions. That shift matters because tourism infrastructure is not bought for display. It is bought to operate continuously, integrate cleanly, and comply predictably.

The most common distortions hidden inside a benchmarking report

A weak benchmarking report usually fails in at least one of four ways: it uses non-equivalent samples, ignores deployment context, overweights cosmetic features, or mixes supplier claims with independently measured results. In tourism procurement, all four can occur in the same report. That is why a visually polished benchmarking solution can still lead to poor commercial decisions.

- Non-equivalent samples: comparing a prototype against a production-ready unit, or comparing indoor-rated electronics with field-installed systems.

- Missing environmental context: skipping UV exposure, salt mist conditions, vibration, or occupancy peaks common in resort operations.

- Feature overweighting: prioritizing interface design, finish options, or app screenshots over load stability, repair intervals, and energy efficiency.

- Unverified supplier input: accepting self-reported cycle life, throughput, or insulation values without independent testing boundaries.

For distributors and agents, these distortions create an additional channel risk. If benchmark claims fail after market entry, the local partner bears the cost of technical clarification, after-sales negotiation, and brand damage. A stronger benchmarking process protects not just the buyer but the whole distribution chain.

What a reliable benchmarking process should measure before you compare suppliers

Good benchmarking analysis is not just about collecting more data. It is about collecting the right data in the right order. For tourism and hospitality infrastructure, a dependable benchmarking process typically moves through 3 stages: scope definition, performance testing, and procurement interpretation. If any stage is skipped, the final benchmarking comparison becomes weaker than it appears.

Scope definition should clarify the operating scenario before a single metric is reviewed. Is the asset intended for mountain eco-lodges, high-humidity beachfront sites, urban smart hotels, or mixed-use entertainment zones? A prefab unit that performs well in dry inland conditions may produce very different insulation and condensation behavior in monsoon climates. Likewise, an IoT system that handles 200 devices in a lab may struggle when 800 connected endpoints operate across guest rooms, service areas, and back-office systems.
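Once the tested conditions and the site conditions are both written down, scope gaps like these can be checked mechanically. The Python sketch below is a minimal illustration that assumes a hypothetical `Envelope` record holding a temperature band and a connected-endpoint count. The 15–30°C lab range is invented for the example. The 10°C–35°C seasonal band and the 200 versus 800 endpoint figures come from the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Envelope:
    """Operating conditions, as tested by a supplier or as expected on site (hypothetical format)."""
    temp_min_c: float
    temp_max_c: float
    endpoints: int  # connected devices that must run concurrently


def scope_gaps(tested: Envelope, site: Envelope) -> List[str]:
    """List every site condition that falls outside the envelope the supplier actually tested."""
    gaps = []
    if site.temp_min_c < tested.temp_min_c:
        gaps.append(f"site minimum {site.temp_min_c}°C is below the tested minimum {tested.temp_min_c}°C")
    if site.temp_max_c > tested.temp_max_c:
        gaps.append(f"site maximum {site.temp_max_c}°C exceeds the tested maximum {tested.temp_max_c}°C")
    if site.endpoints > tested.endpoints:
        factor = site.endpoints / tested.endpoints
        gaps.append(f"site load of {site.endpoints} endpoints is {factor:.1f}x the tested {tested.endpoints}")
    return gaps


# Hypothetical lab envelope versus a resort site with seasonal swings and a full-property device count.
lab = Envelope(temp_min_c=15, temp_max_c=30, endpoints=200)
resort = Envelope(temp_min_c=10, temp_max_c=35, endpoints=800)

for gap in scope_gaps(lab, resort):
    print(gap)
```

An empty result only means the site sits inside the tested envelope. It says nothing about how well the product performed within that envelope, which is the job of the performance-testing stage.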

Performance testing should then isolate measurable engineering variables. For physical structures, this can include thermal resistance ranges, material fatigue exposure, fastener stability, and assembly tolerance. For digital hospitality systems, relevant factors include data throughput, latency stability, device compatibility, redundancy logic, and recovery time after interruption. Procurement teams do not need every possible metric; they need the 5–7 metrics that influence lifecycle cost and deployment reliability.

Interpretation is where many benchmarking tools fall short. Raw numbers mean little unless they are translated into decision consequences. TVM’s approach is useful here because it converts engineering measurements into procurement logic: what affects CAPEX, what influences OPEX, what creates compliance delay, and what increases integration risk.
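One way to picture this translation step is a lookup from engineering metrics to the procurement consequences they drive. The sketch below assumes a hypothetical metric-to-consequence table. The four consequence categories come from the paragraph above. The metric names and their mapping are illustrative only and are not TVM's published method.

```python
from enum import Enum
from typing import Dict, List, Set


class Consequence(Enum):
    CAPEX = "capital cost"
    OPEX = "operating cost"
    COMPLIANCE_DELAY = "compliance delay"
    INTEGRATION_RISK = "integration risk"


# Hypothetical mapping: which procurement consequences a weak result on each metric affects.
METRIC_CONSEQUENCES: Dict[str, Set[Consequence]] = {
    "thermal_resistance": {Consequence.OPEX},
    "fatigue_cycles": {Consequence.OPEX, Consequence.CAPEX},
    "material_traceability": {Consequence.COMPLIANCE_DELAY},
    "protocol_compatibility": {Consequence.INTEGRATION_RISK},
    "recovery_time_s": {Consequence.INTEGRATION_RISK, Consequence.OPEX},
}


def interpret(flagged_metrics: List[str]) -> Dict[Consequence, List[str]]:
    """Group weak or failed metrics under the procurement consequences they affect."""
    report: Dict[Consequence, List[str]] = {}
    for metric in flagged_metrics:
        if metric not in METRIC_CONSEQUENCES:
            # A raw number with no agreed procurement meaning is surfaced, not silently reported.
            raise ValueError(f"no procurement interpretation defined for {metric!r}")
        for consequence in METRIC_CONSEQUENCES[metric]:
            report.setdefault(consequence, []).append(metric)
    return report


for consequence, metrics in interpret(["fatigue_cycles", "protocol_compatibility"]).items():
    print(f"{consequence.value}: {', '.join(metrics)}")
```

Raising an error for an unmapped metric is a deliberate choice. It forces someone to decide what a number means commercially before it appears in a report.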

Core dimensions that matter more than headline scores

Before accepting any benchmarking report, buyers should verify whether the assessment covers these decision-critical dimensions rather than only promotional performance indicators.

| Benchmarking dimension | What should be measured | Procurement relevance |
|---|---|---|
| Durability under use conditions | Fatigue cycles, corrosion exposure, surface degradation, fastener stability | Affects maintenance frequency, spare parts planning, and warranty negotiation |
| System integration performance | Protocol compatibility, throughput range, response latency, failure recovery behavior | Determines installation complexity and interoperability with hotel or site systems |
| Compliance readiness | Material traceability, emissions documentation, electrical or safety document completeness | Reduces approval delays and lowers the risk of rejected submissions |
| Operational efficiency | Thermal efficiency, energy draw range, uptime consistency, service interval estimates | Shapes long-term operating cost and sustainability positioning |

The key lesson is simple: benchmarking data becomes useful only when dimensions align with real procurement consequences. If a benchmarking solution does not show how a metric affects installation, operation, compliance, or total cost, it may inform marketing but not buying.

A practical 4-step review sequence for evaluators

- Confirm scenario equivalence: verify site type, operating hours, climate exposure, and occupancy intensity.

- Separate measured data from declared claims: ask which values come from lab testing and which come from supplier documentation.

- Map metrics to commercial risk: identify which 3–5 metrics affect downtime, energy cost, and approval timelines.

- Check reproducibility: review whether the benchmarking process can be repeated when product batches or configurations change.

This sequence is especially useful when a procurement committee must compare offers within a fixed tender cycle of 7–15 days. It reduces the chance of overvaluing attractive dashboards and undervaluing hard engineering evidence.
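The four-step sequence can be expressed as a single review pass over one supplier offer. This Python sketch assumes a hypothetical `Offer` record. The record keeps lab-measured and supplier-declared values in separate fields and stores both the tested and the quoted configuration. All field names and sample values are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Offer:
    supplier: str
    scenario: Dict[str, str]     # conditions under which the offer was assessed
    measured: Dict[str, float]   # values from lab testing
    declared: Dict[str, float]   # values from supplier documentation only
    tested_config: str
    quoted_config: str


def review(offer: Offer, target: Dict[str, str], risk_metrics: List[str]) -> List[str]:
    """Apply the 4-step sequence and return findings that need follow-up before comparison."""
    findings = []
    # Step 1: scenario equivalence.
    for key, needed in target.items():
        assessed = offer.scenario.get(key)
        if assessed != needed:
            findings.append(f"scenario mismatch on {key}: assessed {assessed!r}, needed {needed!r}")
    # Step 3 is the caller's choice of risk_metrics; step 2 checks each is measured, not only declared.
    for metric in risk_metrics:
        if metric in offer.measured:
            continue
        if metric in offer.declared:
            findings.append(f"{metric} is supplier-declared only")
        else:
            findings.append(f"{metric} has no data")
    # Step 4: reproducibility, i.e. the assessed unit must match what is being quoted.
    if offer.tested_config != offer.quoted_config:
        findings.append(f"tested {offer.tested_config} but quoted {offer.quoted_config}; retest needed")
    return findings


offer = Offer(
    supplier="Supplier A",
    scenario={"site_type": "beachfront", "climate": "coastal humidity"},
    measured={"latency_ms": 40.0},
    declared={"cycle_life": 50000.0},
    tested_config="v1.2",
    quoted_config="v1.3",
)
target = {"site_type": "beachfront", "climate": "coastal humidity", "operating_hours": "24/7"}
for finding in review(offer, target, ["latency_ms", "cycle_life", "insulation_r"]):
    print(finding)
```

The function returns findings rather than a score. Within a 7–15 day tender cycle, that gives the committee a short follow-up list instead of another ranking to trust.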

Benchmarking comparison in real buying scenarios: what changes across asset types

A major reason benchmarking data leads teams wrong is that not all assets fail in the same way. In tourism development, procurement decisions often span prefabricated guest units, smart hotel networks, and visitor-facing mechanical systems. Each category requires a different benchmarking comparison model. If one template is used for all three, the analysis becomes shallow and the benchmarking report loses its operational meaning.

For prefabricated cabins, thermal efficiency and envelope durability matter early because they affect guest comfort, energy load, and maintenance calls. For smart hotel IoT systems, integration stability matters more because one incompatible protocol can delay commissioning by several weeks. For amusement or high-use leisure hardware, fatigue resistance and component replacement cycles become central because usage loads are repetitive and public safety expectations are high.

This is where TVM’s sector-specific benchmarking solutions create value. By translating supplier-side manufacturing capability into standardized whitepapers, TVM gives global tourism architects and procurement teams a way to compare unlike offers through use-case logic rather than brochure language. That reduces ambiguity during sourcing, especially when multiple factories provide technically similar but operationally different solutions.

The following table shows how benchmarking priorities shift by application scenario. It can help researchers, procurement directors, and channel partners decide which metrics deserve the greatest weight before asking for quotations.

| Tourism asset category | Primary benchmarking focus | Typical procurement concern |
|---|---|---|
| Prefab glamping units | Thermal envelope behavior, moisture control, transport and assembly tolerance | Whether comfort and durability remain stable across seasonal temperature swings and remote installation sites |
| Hotel IoT and AI systems | Network throughput, device interoperability, response consistency, recovery after outage | Whether systems can scale from pilot floors to full-property deployment without instability |
| Amusement and leisure hardware | Material fatigue, repetitive load endurance, component service intervals | Whether continuous use during peak seasons increases failure rate or maintenance shutdown time |
| Hybrid hospitality infrastructure packages | Cross-system compatibility, installation sequencing, documentation consistency | Whether multi-vendor packages create hidden coordination and acceptance risks |

The table also explains why benchmarking software alone rarely solves evaluation complexity. Software can organize inputs, but it cannot determine whether a cabin should be tested for condensation risk, whether an IoT gateway should be assessed under occupancy peaks, or whether a leisure system needs tighter fatigue review. Human interpretation and sector knowledge remain essential.
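Software becomes useful once people have decided which metrics matter for each category, because it can then apply that decision consistently. The sketch below assumes hypothetical weight profiles for three of the categories in the table, each summing to 1.0. It also assumes metric scores have already been normalized to a 0–1 scale. The weights are placeholders, not recommended values.

```python
import math
from typing import Dict

# Hypothetical weight profiles per asset category; each profile's weights sum to 1.0.
PROFILES: Dict[str, Dict[str, float]] = {
    "prefab_glamping": {"thermal_envelope": 0.40, "moisture_control": 0.35, "assembly_tolerance": 0.25},
    "hotel_iot": {"throughput": 0.30, "interoperability": 0.40, "outage_recovery": 0.30},
    "leisure_hardware": {"fatigue": 0.50, "load_endurance": 0.30, "service_interval": 0.20},
}


def weighted_score(category: str, scores: Dict[str, float]) -> float:
    """Score an offer (metric scores in 0-1) using its own category's profile only."""
    profile = PROFILES[category]
    if not math.isclose(sum(profile.values()), 1.0):
        raise ValueError(f"weights for {category} do not sum to 1.0")
    missing = [metric for metric in profile if metric not in scores]
    if missing:
        # Refuse to score rather than fall back to a generic one-size-fits-all template.
        raise ValueError(f"{category} profile needs {missing}")
    return sum(weight * scores[metric] for metric, weight in profile.items())


iot_offer = {"throughput": 0.8, "interoperability": 0.5, "outage_recovery": 0.9}
print(f"{weighted_score('hotel_iot', iot_offer):.2f}")  # 0.24 + 0.20 + 0.27 = 0.71
```

Scoring the same IoT offer with the glamping profile raises an error instead of returning a number. This mirrors the point that one template should not be stretched across unlike asset types.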

How channel partners should read benchmarking data differently

Distributors, agents, and regional resellers should add one more layer to the benchmarking process: market transferability. A product that performs acceptably in a supplier test environment may still create problems if local installers lack training, spare parts lead times exceed 30–45 days, or documentation is not adapted to local approvals. For channel partners, benchmarking comparison should therefore include not just equipment performance but deployment support, documentation readiness, and after-sales realism.

This is particularly important when acting as an importer or local commercialization partner. Once product claims enter sales materials, the partner becomes part of the accountability chain. Independent benchmarking analysis can reduce that exposure by providing neutral, structured evidence before market launch.

What procurement teams should check before trusting benchmarking tools or supplier dashboards

Not every benchmarking tool is unsuitable, but no tool should be trusted without inspection. Procurement teams should ask whether the tool reflects actual decision criteria or simply automates comparison formatting. A dashboard with color-coded scores can create confidence too quickly, especially when multiple stakeholders need a short summary for internal approval. Yet a simplified score often hides the assumptions that matter most.

A practical way to test benchmarking software is to review its missing data tolerance. If the platform still generates a strong ranking when fatigue information, integration details, or compliance documents are incomplete, the ranking may be more decorative than analytical. In tourism infrastructure procurement, missing variables can be more important than reported variables because they often indicate future approval or operation risk.
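Two toy scoring functions can demonstrate the missing-data test. This sketch assumes hypothetical 0–1 metric scores, with `None` marking absent data. A naive average quietly drops missing metrics and ranks a cosmetically strong but incomplete offer first. A stricter version declines to score any offer that lacks a critical metric. The metric names and the choice of critical metrics are illustrative assumptions.

```python
from statistics import mean
from typing import Dict, List, Optional, Tuple

Scores = Dict[str, Optional[float]]

# Hypothetical set of metrics without which no ranking should be produced.
CRITICAL = ("fatigue", "integration", "compliance_docs")


def naive_score(scores: Scores) -> float:
    """Average whatever is present; missing metrics silently drop out of the calculation."""
    return mean(v for v in scores.values() if v is not None)


def strict_score(scores: Scores) -> Tuple[Optional[float], List[str]]:
    """Return (score, missing critical metrics); the score is None if any critical metric is absent."""
    missing = [m for m in CRITICAL if scores.get(m) is None]
    if missing:
        return None, missing
    return mean(v for v in scores.values() if v is not None), []


supplier_a = {"finish": 0.95, "app_ui": 0.90, "fatigue": None, "integration": None, "compliance_docs": 0.60}
supplier_b = {"finish": 0.70, "app_ui": 0.60, "fatigue": 0.80, "integration": 0.75, "compliance_docs": 0.80}

print(naive_score(supplier_a) > naive_score(supplier_b))  # True: the incomplete offer "wins"
print(strict_score(supplier_a))  # (None, ['fatigue', 'integration'])
```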

Buyers should also check whether the benchmarking process distinguishes between laboratory values, simulation values, supplier declarations, and field observations. These are not interchangeable data classes. A thermal result derived from controlled testing should not be treated the same way as a sales estimate. The same applies to system throughput figures collected in isolated conditions versus live property traffic. Mixing data classes is one of the easiest ways benchmarking data leads decision-makers wrong.

TVM’s role is useful because it helps procurement teams decode that complexity. Instead of pushing one universal score, the lab-oriented approach connects measured performance to procurement decisions: what should be prequalified, what should be retested, what should be specified contractually, and what should be validated during acceptance.
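Keeping data classes apart is easy to enforce when every value carries its origin. The following sketch assumes a hypothetical `DataPoint` record tagged with one of four evidence classes: laboratory, simulation, supplier declaration, and field observation. It allows comparisons only within the same metric and the same class. As a result, a laboratory throughput figure is never set directly against a supplier's sales estimate.

```python
from dataclasses import dataclass
from enum import Enum


class EvidenceClass(Enum):
    LAB = "laboratory"
    SIMULATION = "simulation"
    DECLARED = "supplier declaration"
    FIELD = "field observation"


@dataclass(frozen=True)
class DataPoint:
    supplier: str
    metric: str
    value: float
    source: EvidenceClass


def difference(a: DataPoint, b: DataPoint) -> float:
    """Return a.value - b.value, but only for like-for-like evidence."""
    if a.metric != b.metric:
        raise ValueError(f"different metrics: {a.metric} vs {b.metric}")
    if a.source is not b.source:
        raise ValueError(f"cannot compare {a.source.value} with {b.source.value}")
    return a.value - b.value


lab_a = DataPoint("Supplier A", "throughput_mbps", 950.0, EvidenceClass.LAB)
lab_b = DataPoint("Supplier B", "throughput_mbps", 900.0, EvidenceClass.LAB)
claim_b = DataPoint("Supplier B", "throughput_mbps", 1200.0, EvidenceClass.DECLARED)

print(difference(lab_a, lab_b))  # 50.0
try:
    difference(lab_a, claim_b)
except ValueError as err:
    print(err)  # cannot compare laboratory with supplier declaration
```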

A 6-point procurement checklist for better benchmarking decisions

- Verify test conditions: ask for environmental range, sample status, and whether the assessed unit matches the quoted configuration.

- Review document completeness: check drawings, material lists, interface descriptions, and compliance-related files before comparing scores.

- Focus on lifecycle impact: give higher weight to metrics that influence 12–36 month operating cost rather than launch-stage appearance.

- Ask for integration boundaries: confirm which systems, protocols, connectors, or site conditions are included or excluded.

- Separate pilot viability from scale viability: a successful sample deployment does not guarantee stable roll-out across 20, 50, or 200 units.

- Define acceptance checkpoints: convert critical benchmarking data into contract terms and commissioning checks.

This checklist is valuable for both direct buyers and business evaluators preparing internal recommendation memos. It also helps channel partners identify which claims can be safely carried into reseller discussions and which require deeper technical validation first.
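The last checklist item, turning benchmarking data into acceptance checkpoints, can be sketched as a tolerance band around each benchmarked value. This Python example assumes a hypothetical `Criterion` record with illustrative tolerances. In practice, the acceptance limits would be whatever the contract specifies.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Criterion:
    metric: str
    benchmark: float        # value established during benchmarking
    higher_is_better: bool
    tolerance: float        # allowed fractional deviation, e.g. 0.10 for 10%

    def limit(self) -> float:
        """Worst value still acceptable at commissioning."""
        if self.higher_is_better:
            return self.benchmark * (1 - self.tolerance)
        return self.benchmark * (1 + self.tolerance)

    def accepts(self, observed: float) -> bool:
        return observed >= self.limit() if self.higher_is_better else observed <= self.limit()


def acceptance_failures(criteria: List[Criterion], observed: Dict[str, float]) -> List[str]:
    """Compare commissioning measurements with contract limits; unmeasured criteria also fail."""
    failures = []
    for c in criteria:
        if c.metric not in observed:
            failures.append(f"{c.metric}: not measured at commissioning")
        elif not c.accepts(observed[c.metric]):
            failures.append(f"{c.metric}: {observed[c.metric]} outside limit {c.limit():.1f}")
    return failures


criteria = [
    Criterion("throughput_mbps", benchmark=900, higher_is_better=True, tolerance=0.10),
    Criterion("latency_ms", benchmark=50, higher_is_better=False, tolerance=0.20),
    Criterion("recovery_s", benchmark=120, higher_is_better=False, tolerance=0.25),
]
for failure in acceptance_failures(criteria, {"throughput_mbps": 850, "latency_ms": 72}):
    print(failure)
```

Treating an unmeasured criterion as a failure keeps commissioning from quietly skipping the checks that benchmarking flagged as critical.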

Common misconceptions that distort benchmarking analysis

One common misconception is that more metrics always mean better benchmarking solutions. In reality, a 40-metric dashboard can be less useful than a disciplined 6-metric review if half the inputs are irrelevant to field operation. Another misconception is that benchmarking comparison should always produce a single winner. In many tenders, the right outcome is conditional selection: one supplier is better for cold-climate lodging, another for dense digital integration, and another for phased distribution channels.

A third misconception is that compliance can be checked after the technical ranking is finished. In tourism projects, carbon-related documentation, material disclosure, and safety records can change supplier viability very late in the process. Treating compliance as a final admin step rather than an early benchmarking dimension often causes avoidable delay.
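The conditional-selection idea can be made explicit: pick a winner per scenario, and send near-ties to human review instead of forcing one overall ranking. The scenario names echo the examples in the first two misconceptions. The scores and the 0.05 review margin are hypothetical.

```python
from typing import Dict

# Hypothetical 0-1 scenario scores for three suppliers.
SCORES: Dict[str, Dict[str, float]] = {
    "cold_climate_lodging": {"Supplier A": 0.86, "Supplier B": 0.71, "Supplier C": 0.64},
    "dense_digital_integration": {"Supplier A": 0.58, "Supplier B": 0.83, "Supplier C": 0.69},
    "phased_distribution": {"Supplier A": 0.62, "Supplier B": 0.66, "Supplier C": 0.81},
}


def conditional_selection(scores: Dict[str, Dict[str, float]], margin: float = 0.05) -> Dict[str, str]:
    """Choose the best supplier per scenario; near-ties within `margin` are flagged for review.

    Assumes at least two suppliers are scored in every scenario.
    """
    selection = {}
    for scenario, by_supplier in scores.items():
        ranked = sorted(by_supplier.items(), key=lambda item: item[1], reverse=True)
        (best, top), (runner_up, second) = ranked[0], ranked[1]
        selection[scenario] = best if top - second > margin else f"review: {best} vs {runner_up}"
    return selection


for scenario, choice in conditional_selection(SCORES).items():
    print(f"{scenario}: {choice}")
```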

How to turn benchmarking reports into stronger procurement, compliance, and project outcomes

A benchmarking report should not end with comparison. It should move into action. For developers, hotel procurement directors, and evaluation teams, the most useful reports are those that translate data into next-step decisions: who to shortlist, what to test further, which specifications to lock, and where to expect approval friction. This is especially important when projects must move from sourcing to installation within a 6–12 week pre-opening schedule.

In practical terms, benchmarking data should support three decisions. First, technical screening: can the solution withstand the intended operating scenario? Second, commercial framing: what risks may alter total cost, maintenance exposure, or replacement timing? Third, compliance planning: what documentation or validation should be prepared before import, installation, or site acceptance? When a benchmarking process supports all three, it becomes a management tool rather than a static report.

TVM is positioned well for this because its benchmarking work connects Chinese manufacturing output with the language global tourism architects and buyers actually need: measured engineering inputs, comparable reporting structures, and scenario-based interpretation. That is valuable for teams who want more than vendor storytelling but do not want to build a private test framework from scratch.

A strong benchmarking solution can also improve negotiation quality. When performance thresholds, integration limits, and documentation gaps are visible early, buyers can discuss corrective actions before contract signing. This often leads to better specification alignment, fewer hidden assumptions, and more realistic delivery commitments.

Frequently asked questions about benchmarking data in B2B tourism sourcing

How should buyers choose between benchmarking software and independent benchmarking analysis?

Use benchmarking software for organizing supplier inputs, version control, and preliminary screening. Use independent benchmarking analysis when project risk is high, when systems must integrate across multiple vendors, or when the asset will face demanding operating conditions. As a rule, the more a decision depends on durability, compliance, and interoperability over 12–60 months, the less safe it is to rely only on automated benchmarking tools.

What should a benchmarking report include before a procurement team trusts it?

At minimum, it should state test boundaries, sample identity, environmental assumptions, the difference between measured and declared values, and the procurement meaning of each critical metric. It should also identify exclusions. If a benchmarking report does not explain what was not tested, readers may misread a partial evaluation as a full-risk assessment.
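A reader can screen a report against these minimum contents before looking at its scores. The sketch below assumes the report has been captured as a hypothetical dictionary of named sections. It treats a blank section as missing. So if an evaluation has no exclusions, the report should say so explicitly rather than leave the field empty.

```python
from typing import Dict, List, Optional

# Minimum contents listed in the answer above, as hypothetical section keys.
REQUIRED_SECTIONS = (
    "test_boundaries",
    "sample_identity",
    "environmental_assumptions",
    "measured_vs_declared",
    "metric_procurement_meaning",
    "exclusions",
)


def missing_sections(report: Dict[str, Optional[str]]) -> List[str]:
    """Return required sections that are absent, None, or blank."""
    return [s for s in REQUIRED_SECTIONS if not (report.get(s) or "").strip()]


report = {
    "test_boundaries": "Wall and roof panels, climate chamber",
    "sample_identity": "Production unit, batch 2026-04",
    "environmental_assumptions": "10-35°C, coastal humidity",
    "measured_vs_declared": "U-values measured; cycle life declared",
    "metric_procurement_meaning": "Thermal results drive OPEX estimate",
    "exclusions": "",
}
print(missing_sections(report))  # ['exclusions']
```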

How long does a practical benchmarking process usually take?

The timeline depends on scope. A document-based screening may take 7–15 days. A more robust benchmarking process involving technical review, sample verification, and scenario interpretation often runs 2–4 weeks. If retesting, multi-vendor normalization, or compliance clarification is needed, the cycle can extend further. Buyers should align this timing with tender and installation milestones rather than treat benchmarking as a last-minute step.
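Once a scope is chosen, aligning benchmarking with milestones is simple date arithmetic. This sketch works backward from a milestone using the duration ranges quoted above: 7–15 days for document screening and 2–4 weeks for a fuller review. It does not model retesting or multi-vendor normalization, and the milestone date is hypothetical.

```python
from datetime import date, timedelta
from typing import Tuple

# Calendar-day ranges from the answer above.
DURATION_DAYS = {
    "document_screening": (7, 15),
    "technical_review": (14, 28),  # 2-4 weeks
}


def start_window(milestone: date, scope: str) -> Tuple[date, date]:
    """Return (safe start, last possible start) for results due by `milestone`.

    Starting on the safe date finishes on time even at the long end of the range;
    starting on the last possible date works only if the review runs at the short end.
    """
    shortest, longest = DURATION_DAYS[scope]
    return milestone - timedelta(days=longest), milestone - timedelta(days=shortest)


safe, last = start_window(date(2026, 7, 31), "technical_review")
print(safe, last)  # 2026-07-03 2026-07-17
```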

Which teams benefit most from better benchmarking comparison?

Information researchers benefit by filtering noise earlier. Procurement teams benefit by improving shortlist quality. Business evaluators benefit by linking technical evidence to financial risk. Distributors and agents benefit by reducing downstream claim exposure. In short, any team responsible for recommending, approving, importing, or commercializing tourism infrastructure gains from a more disciplined benchmarking process.

Why work with TVM when benchmarking data needs to support real decisions

If you are comparing prefab hospitality units, smart hotel systems, or tourism hardware and feel that available benchmarking data is too generic, TVM can help you move from surface comparison to decision-grade evaluation. The objective is not to flood your team with technical jargon. It is to clarify which metrics matter, which gaps need verification, and which options fit your operational scenario.

TVM is especially relevant when your project involves one or more of these conditions: cross-border sourcing, multiple suppliers, sustainability-related documentation, integration-sensitive systems, or tight development schedules. In those cases, a clearer benchmarking report can cost far less than a late correction. It can protect approvals, reduce misaligned orders, and improve confidence across procurement, engineering, and channel discussions.

You can consult TVM on practical issues such as parameter confirmation, benchmarking comparison design, supplier shortlisting logic, expected delivery implications, documentation completeness, sample review priorities, and scenario-based evaluation of tourism infrastructure. If needed, the discussion can also focus on custom benchmarking solutions for glamping structures, hotel IoT environments, or high-use leisure hardware.

When benchmarking data must support procurement instead of decoration, the right next step is not another generic dashboard. It is a clearer testing scope, a more disciplined benchmarking process, and a report that helps your team buy, deploy, and scale with fewer hidden risks. Reach out to discuss your target product category, required specifications, expected project timeline, compliance concerns, sample support needs, and quotation objectives.

Recommended News

![Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and Certifications Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and Certifications]() Jun 08, 2026Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and CertificationsRecycled polyester vs virgin polyester: compare cost, durability, and certifications with a practical sourcing guide for buyers seeking compliance, value, and long-term performance.

Jun 08, 2026Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and CertificationsRecycled polyester vs virgin polyester: compare cost, durability, and certifications with a practical sourcing guide for buyers seeking compliance, value, and long-term performance.![How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and Price How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and Price]() Jun 07, 2026How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and PriceElectrictoothbrushes buying guide: compare brush modes, bristle types, battery life, and price to find the best balance of comfort, performance, and long-term value.

Jun 07, 2026How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and PriceElectrictoothbrushes buying guide: compare brush modes, bristle types, battery life, and price to find the best balance of comfort, performance, and long-term value.![]() Jun 05, 2026Baby Sleeping Bags Buying Guide: TOG Ratings, Sizes, Fabrics, and Safe Sleep Fitbabysleepingbags buying guide: compare TOG ratings, safe sizing, fabrics, and fit tips to choose a breathable, secure sleep bag for better comfort and safer nights.

Jun 05, 2026Baby Sleeping Bags Buying Guide: TOG Ratings, Sizes, Fabrics, and Safe Sleep Fitbabysleepingbags buying guide: compare TOG ratings, safe sizing, fabrics, and fit tips to choose a breathable, secure sleep bag for better comfort and safer nights.![]() Jun 04, 2026Platinum Spark Plugs vs Copper: Which Option Lasts Longer and Fits Your Engine?Platinum spark plugs vs copper: discover which option lasts longer, fits your engine better, and delivers stronger fuel efficiency, lower maintenance, and better long-term value.

Jun 04, 2026Platinum Spark Plugs vs Copper: Which Option Lasts Longer and Fits Your Engine?Platinum spark plugs vs copper: discover which option lasts longer, fits your engine better, and delivers stronger fuel efficiency, lower maintenance, and better long-term value.![Custom Metal Fabrication: Processes, Tolerances, and Quote Drivers Custom Metal Fabrication: Processes, Tolerances, and Quote Drivers]() Jun 03, 2026Custom Metal Fabrication: Processes, Tolerances, and Quote DriversCustom metal fabrication guide: compare processes, tolerances, materials, finishes, and quote drivers to reduce risk, control costs, and build reliable tourism infrastructure.

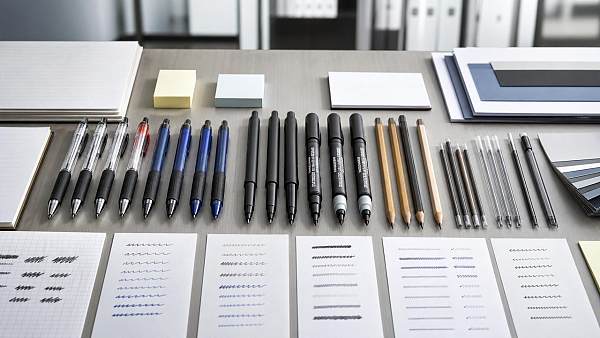

Jun 03, 2026Custom Metal Fabrication: Processes, Tolerances, and Quote DriversCustom metal fabrication guide: compare processes, tolerances, materials, finishes, and quote drivers to reduce risk, control costs, and build reliable tourism infrastructure.![Writing Instruments Buying Guide: Tip Types, Ink Options, and Use Cases Writing Instruments Buying Guide: Tip Types, Ink Options, and Use Cases]() Jun 02, 2026Writing Instruments Buying Guide: Tip Types, Ink Options, and Use CasesWriting instruments buying guide: compare tip types, ink options, durability, and use cases to choose reliable pens, markers, and pencils for every setting.

Jun 02, 2026Writing Instruments Buying Guide: Tip Types, Ink Options, and Use CasesWriting instruments buying guide: compare tip types, ink options, durability, and use cases to choose reliable pens, markers, and pencils for every setting.![Are Last-mile delivery drones ready for urban logistics? Are Last-mile delivery drones ready for urban logistics?]() Jun 01, 2026Are Last-mile delivery drones ready for urban logistics?Last-mile delivery drones are reshaping urban logistics—discover where they deliver real ROI, what risks to test, and how to plan scalable drone operations.

Jun 01, 2026Are Last-mile delivery drones ready for urban logistics?Last-mile delivery drones are reshaping urban logistics—discover where they deliver real ROI, what risks to test, and how to plan scalable drone operations.![Is a makeupbrushesset worth buying for daily use? Is a makeupbrushesset worth buying for daily use?]() May 31, 2026Is a makeupbrushesset worth buying for daily use?makeupbrushesset guide: discover if a daily brush set is worth buying, how to judge quality, hygiene, cost per use, and choose tools that improve every routine.

May 31, 2026Is a makeupbrushesset worth buying for daily use?makeupbrushesset guide: discover if a daily brush set is worth buying, how to judge quality, hygiene, cost per use, and choose tools that improve every routine.![Why Chemical Manufacturing Risks Are Rising in 2026 Why Chemical Manufacturing Risks Are Rising in 2026]() May 30, 2026Why Chemical Manufacturing Risks Are Rising in 2026Chemical manufacturing risks are rising in 2026. Learn how verified data, supplier controls, compliance evidence, and benchmarking can reduce exposure.

May 30, 2026Why Chemical Manufacturing Risks Are Rising in 2026Chemical manufacturing risks are rising in 2026. Learn how verified data, supplier controls, compliance evidence, and benchmarking can reduce exposure.![How Sustainable Furniture for Hotels Cuts Long-Term Costs How Sustainable Furniture for Hotels Cuts Long-Term Costs]() May 30, 2026How Sustainable Furniture for Hotels Cuts Long-Term CostsSustainable furniture for hotels lowers lifecycle costs with durable, repairable, compliant designs that reduce replacements, downtime, and waste.

May 30, 2026How Sustainable Furniture for Hotels Cuts Long-Term CostsSustainable furniture for hotels lowers lifecycle costs with durable, repairable, compliant designs that reduce replacements, downtime, and waste.![Contract Manufacturing Costs Often Hide in Change Orders Contract Manufacturing Costs Often Hide in Change Orders]() May 29, 2026Contract Manufacturing Costs Often Hide in Change OrdersContract manufacturing costs can hide in change orders. Learn how TerraVista Metrics reveals supplier risk, specification drift, and budget exposure before production.

May 29, 2026Contract Manufacturing Costs Often Hide in Change OrdersContract manufacturing costs can hide in change orders. Learn how TerraVista Metrics reveals supplier risk, specification drift, and budget exposure before production.![Why high-end furniture for hotels shapes guest reviews Why high-end furniture for hotels shapes guest reviews]() May 28, 2026Why high-end furniture for hotels shapes guest reviewsHigh-end furniture for hotels shapes comfort, trust, and guest reviews. Discover how durability, ergonomics, and premium design influence bookings, ratings, and brand value.

May 28, 2026Why high-end furniture for hotels shapes guest reviewsHigh-end furniture for hotels shapes comfort, trust, and guest reviews. Discover how durability, ergonomics, and premium design influence bookings, ratings, and brand value.![Why system integration for tourism fails in multi site projects Why system integration for tourism fails in multi site projects]() May 25, 2026Why system integration for tourism fails in multi site projectsSystem integration for tourism often fails in multi-site projects due to weak standards, untested interfaces, and poor coordination. Learn the checklist that reduces downtime and protects guest experience.

May 25, 2026Why system integration for tourism fails in multi site projectsSystem integration for tourism often fails in multi-site projects due to weak standards, untested interfaces, and poor coordination. Learn the checklist that reduces downtime and protects guest experience.![Is sustainable tourism certification worth the effort? Is sustainable tourism certification worth the effort?]() May 24, 2026Is sustainable tourism certification worth the effort?Sustainable tourism certification: is it worth the effort? Discover how it cuts risk, controls lifecycle costs, and builds credible market advantage for tourism businesses.

May 24, 2026Is sustainable tourism certification worth the effort?Sustainable tourism certification: is it worth the effort? Discover how it cuts risk, controls lifecycle costs, and builds credible market advantage for tourism businesses.![When a smart hotel exporter is the safer choice When a smart hotel exporter is the safer choice]() May 23, 2026When a smart hotel exporter is the safer choiceSmart hotel exporter selection is safer when backed by verified data, interoperability insight, and compliance readiness. Learn how to reduce procurement risk and choose with confidence.

May 23, 2026When a smart hotel exporter is the safer choiceSmart hotel exporter selection is safer when backed by verified data, interoperability insight, and compliance readiness. Learn how to reduce procurement risk and choose with confidence.![What guests notice first in smart hotel guest experience What guests notice first in smart hotel guest experience]() May 22, 2026What guests notice first in smart hotel guest experienceSmart hotel guest experience starts in the first 10 minutes. See what guests notice first—from check-in speed to room controls—and how hotels can turn first impressions into trust.

May 22, 2026What guests notice first in smart hotel guest experienceSmart hotel guest experience starts in the first 10 minutes. See what guests notice first—from check-in speed to room controls—and how hotels can turn first impressions into trust.![How smart hotel sustainability affects brand and payback How smart hotel sustainability affects brand and payback]() May 22, 2026How smart hotel sustainability affects brand and paybackSmart hotel sustainability shapes hotel brand value and payback through energy savings, guest comfort, and data-backed performance. See what drives real ROI.

How smart hotel sustainability affects brand and payback (May 22, 2026)
Smart hotel sustainability shapes hotel brand value and payback through energy savings, guest comfort, and data-backed performance. See what drives real ROI.

How smart hotel energy efficiency cuts costs without upgrades (May 22, 2026)
Smart hotel energy efficiency helps hotels cut utility costs fast with occupancy-based controls, smarter scheduling, and clear data insights, all without costly system upgrades.

Are smart hotel benefits worth it for smaller properties? (May 21, 2026)
Smart hotel benefits for smaller properties: learn how smart locks, energy control, and automation can cut costs, improve guest service, and deliver stronger ROI.

Ecoinvent Cost, Licensing, and ROI in 2026 (May 20, 2026)
Ecoinvent cost, licensing, and ROI in 2026 explained for enterprise buyers. Compare pricing, usage rights, compliance value, and procurement impact to make smarter sustainability data decisions.

How environmental sustainability cuts long term operating risk (May 22, 2026)
Environmental sustainability helps cut long-term operating risk by improving efficiency, durability, compliance, and supply chain resilience. Learn how data-led decisions protect asset value.

Sustainable Tourism Development Trends to Watch in 2026 (May 22, 2026)
Sustainable tourism development trends for 2026: explore carbon-led procurement, resilient infrastructure, smart system integration, and lifecycle value to future-proof tourism assets.

Hospitality Management Metrics That Actually Improve Guest Retention (May 20, 2026)
Hospitality management metrics that truly boost guest retention: discover which service, infrastructure, and digital KPIs help evaluators compare properties, reduce churn, and improve long-term value.

Smart warehousing mistakes that slow order fulfillment (May 20, 2026)
Smart warehousing mistakes can quietly delay fulfillment, raise safety risks, and reduce inventory accuracy. Discover a practical checklist to improve speed, control, and warehouse performance.
