TerraVista Metrics (TVM) Industry Portal


Why the Benchmarking Process Breaks Down Mid-Project

Jun 09, 2026

Mid-project benchmarking failures usually do not happen because teams stop caring. They happen because the benchmark itself was never stable enough to support real procurement and evaluation decisions. In tourism infrastructure projects, the breakdown typically starts when benchmarking data is incomplete, testing methods shift, supplier claims are not translated into comparable engineering metrics, or project teams begin making commercial decisions before technical alignment is fully established. For procurement teams, evaluators, distributors, and business decision-makers, the practical question is not simply why the benchmarking process fails, but how to detect failure early enough to protect budget, timelines, and technical fit.

In sectors like smart hospitality systems, prefabricated tourism accommodation, and specialized leisure hardware, benchmarking analysis is supposed to reduce uncertainty. But mid-project, many teams discover that their benchmarking comparison model cannot absorb design changes, supplier substitutions, compliance updates, or field-condition differences. When that happens, benchmarking stops being a decision framework and becomes a source of confusion. The most effective response is to understand exactly where breakdowns occur, what signals indicate risk, and how to rebuild a benchmarking system that remains usable from early screening to final procurement.

The real reason the benchmarking process breaks down mid-project

The most common cause is simple: the project starts with benchmarking that looks organized, but is not decision-ready. Early-stage benchmarking often works as a rough comparison tool. It may help shortlist products, validate supplier narratives, or estimate feasibility. But once the project moves deeper into design coordination, specification review, compliance checks, and commercial negotiation, the benchmark is exposed to real-world pressure.

At that point, weak assumptions begin to fail. The thermal performance data of a prefab hospitality unit may come from ideal lab conditions rather than actual deployment environments. A smart hotel IoT platform may show high data throughput in isolated tests but underperform once integrated with property management systems, guest-facing devices, and energy controls. Amusement or outdoor tourism hardware may pass static material tests but show fatigue issues under repetitive, high-load operating conditions.

The benchmark breaks down because the project becomes more specific, while the original benchmark remains too general. If the benchmarking system was built on supplier brochures, mixed test standards, or loosely defined criteria, it will not survive procurement scrutiny. That is why breakdown mid-project is rarely a single event. It is usually the cumulative result of poor data discipline at the beginning.

What buyers and evaluators worry about most when benchmarking starts to fail

For procurement personnel, business evaluators, and channel partners, the biggest concern is not abstract methodology. It is decision risk. They want to know whether they are comparing the right things, whether the selected product will perform under actual operating conditions, and whether a weak benchmark will lead to expensive mistakes later.

The core concerns usually fall into five areas:

- Comparability risk: Are all suppliers being measured with the same definitions, same test logic, and same performance thresholds?

- Procurement risk: Will the benchmark still support the final buying decision after design changes, revised budgets, or substitution requests?

- Compliance risk: Are carbon, durability, safety, and regional technical requirements embedded in the benchmark from the start?

- Integration risk: Does the benchmark account for system compatibility rather than evaluating each component in isolation?

- Credibility risk: Is the benchmarking data independent, repeatable, and detailed enough to withstand internal review or external challenge?

These concerns matter especially in tourism and hospitality infrastructure because buying errors are not limited to unit price. A poor benchmarking comparison can affect installation complexity, maintenance cost, guest experience, energy performance, replacement cycles, and even brand reputation.

Where benchmarking analysis usually fails first

Mid-project failure often begins in one of several predictable places. Understanding these failure points helps teams diagnose whether the problem lies in the data, the process, or the decision framework itself.

1. The benchmark starts with marketing claims instead of engineering metrics

Many teams begin benchmarking by collecting supplier documents, product sheets, and sales presentations. This is useful for orientation, but dangerous if it becomes the benchmark foundation. Marketing materials often use favorable testing conditions, selective performance highlights, or undefined terms such as “high efficiency,” “smart-ready,” or “sustainable design.”

Without raw engineering metrics, the comparison becomes subjective. One supplier may report insulation performance using one methodology, while another cites a different standard entirely. One hotel technology vendor may promote system intelligence based on software features, while another reports actual network stability and throughput. These cannot be benchmarked accurately unless the data is normalized.
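One way to catch this early is to check, before any scoring, whether every supplier's claim for a given metric cites the same test standard. The sketch below is illustrative only: the supplier names, metric names, standard labels, and values are hypothetical placeholders, not real data.

```python
from collections import defaultdict

# Hypothetical supplier claims: (supplier, metric, test standard cited, value).
# All names, standards, and values are placeholders for illustration.
claims = [
    ("Supplier A", "insulation", "STANDARD-X", 0.28),
    ("Supplier B", "insulation", "STANDARD-Y", 0.25),
    ("Supplier A", "throughput", "STANDARD-Z", 940.0),
    ("Supplier B", "throughput", "STANDARD-Z", 870.0),
]


def find_incomparable_metrics(claims):
    """Return metrics whose claims cite more than one test standard."""
    standards_by_metric = defaultdict(set)
    for _supplier, metric, standard, _value in claims:
        standards_by_metric[metric].add(standard)
    return {
        metric: sorted(standards)
        for metric, standards in standards_by_metric.items()
        if len(standards) > 1
    }


incomparable = find_incomparable_metrics(claims)
print(incomparable)
```

Any metric returned here should be normalized or re-tested under a common method before it enters the comparison.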

2. The benchmarking tools are inconsistent across the project lifecycle

A benchmarking tool that works during supplier pre-screening may not work during final evaluation. Early on, a spreadsheet with broad scoring categories may seem sufficient. Later, the team needs test protocols, weighted technical criteria, scenario-based modeling, life-cycle cost assumptions, and evidence tracing.

If the benchmarking tools do not evolve with project complexity, teams start making decisions outside the benchmark. Once that happens, benchmarking analysis loses authority. Procurement may proceed based on price pressure, engineering may shift based on installation convenience, and management may approve based on incomplete summaries. The benchmark still exists on paper, but no longer drives the project.

3. Technical criteria and commercial criteria are not aligned

This is one of the most damaging breakdowns. Technical teams may prioritize durability, interoperability, carbon compliance, and future maintenance performance. Procurement may focus on lead time, discount structure, warranty terms, and vendor responsiveness. Business stakeholders may want speed, lower CAPEX, or brand compatibility.

None of these priorities are wrong. The problem appears when they are not integrated into a single benchmarking system. If technical scoring and commercial decision-making are separated, the final selection often contradicts the benchmark outcome. Teams then lose confidence in the process and begin bypassing it altogether.
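A single scoring model that holds technical and commercial criteria together could look like the following minimal sketch. The criteria, weights, and 0-10 scores are assumptions chosen for illustration; a real project would agree on its own weights across teams.

```python
# Hypothetical weights agreed by all teams; they must sum to 1.0.
WEIGHTS = {
    "durability": 0.30,        # technical
    "interoperability": 0.25,  # technical
    "lead_time": 0.20,         # commercial
    "warranty": 0.10,          # commercial
    "capex": 0.15,             # commercial
}


def combined_score(scores, weights=WEIGHTS):
    """Weighted average of 0-10 criterion scores; every criterion is required."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


# Hypothetical scores for two suppliers.
supplier_a = {"durability": 8, "interoperability": 6, "lead_time": 5, "warranty": 7, "capex": 4}
supplier_b = {"durability": 5, "interoperability": 7, "lead_time": 9, "warranty": 6, "capex": 9}

score_a = combined_score(supplier_a)
score_b = combined_score(supplier_b)
print(round(score_a, 2), round(score_b, 2))
```

Because the function rejects any supplier with a missing criterion, neither team can score a supplier on its own priorities alone.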

4. Scope changes are not reflected in the benchmark

Tourism projects change. Site conditions shift. Utility assumptions evolve. Guest experience goals are revised. Sustainability targets become stricter. Smart systems need broader integration. Modular unit configurations change due to land, climate, or occupancy requirements.

If the benchmarking comparison model is not updated when scope changes, it quickly becomes obsolete. Teams may still refer to it, but it no longer reflects the actual project. Mid-project breakdown is often the moment when people realize they are benchmarking an earlier version of the project, not the one currently being built.

5. No one owns benchmark governance

Benchmarking often spans engineering, procurement, operations, sustainability, and commercial teams. When ownership is unclear, decisions about data quality, scoring logic, supplier evidence, and benchmark updates are made inconsistently. One team adjusts criteria informally, another uses outdated test data, and a third interprets thresholds differently.

Without governance, even strong benchmarking data can lose value. A benchmark must be managed, version-controlled, and defended. Otherwise, it becomes just another reference document rather than a live decision instrument.

Why tourism infrastructure projects are especially vulnerable

In tourism and hospitality supply chains, benchmarking failure is amplified by the diversity of assets involved. A single project may include structural modules, energy systems, digital guest interfaces, access control, HVAC, lighting, entertainment hardware, and sustainability components from multiple suppliers and countries. Each category has its own standards, testing methods, and operational realities.

This creates a high risk of fragmented benchmarking. For example:

- A glamping unit may benchmark well for design and insulation, but poorly for transport resilience, humidity durability, or field assembly tolerance.

- A hotel AI system may benchmark well for interface features, but not for latency, integration stability, cybersecurity exposure, or multilingual support.

- Outdoor leisure hardware may benchmark well in new-condition performance, but not in repetitive use cycles, maintenance intervals, or environmental wear.

These are not minor technical details. They directly affect project ROI, operating continuity, and user experience. That is why benchmarking in this industry must move beyond surface comparison and into measurable, standardized, decision-grade analysis.

How to tell if your benchmarking system is already breaking down

Many teams only recognize failure after delays, disputes, or rework. In reality, there are early warning signs. If several of these are appearing in your project, the benchmarking process likely needs intervention.

- Suppliers are being compared using different standards or different evidence formats.

- Key performance claims cannot be traced back to test conditions or raw data.

- Internal teams keep creating parallel evaluation sheets outside the main benchmark.

- Procurement decisions are being made before technical discrepancies are resolved.

- The benchmark is not updated when specifications or project scope change.

- Stakeholders disagree on what the benchmark is supposed to prove.

- Commercially preferred suppliers score weakly, but are still advanced without formal exception logic.

- No one can clearly explain how weighting was assigned across technical, operational, and financial criteria.

When these symptoms appear, the issue is not just process inefficiency. It means the benchmarking analysis no longer provides dependable support for final selection.

What a more defensible benchmarking process looks like

A robust benchmarking system is not just a table of product scores. It is a structured decision framework built to survive project changes and scrutiny. For buyers and evaluators, the goal is not perfection. It is traceability, consistency, and practical relevance.

A more defensible approach usually includes the following elements:

Standardized metrics

Every supplier should be measured against the same definitions, thresholds, and test logic. If equivalency is impossible, the benchmark should clearly state the limitation rather than hiding it.

Evidence hierarchy

Not all evidence carries equal value. Independent lab tests, field-performance records, engineering documentation, and certified compliance data should rank above brochures and sales statements.
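As a rough sketch, the hierarchy can be reduced to two tiers and used to flag claims that rest only on weak evidence. The tier labels, evidence-type identifiers, and sample claims are hypothetical; the source does not rank the stronger evidence types against each other, so this example does not either.

```python
# Evidence types that should outrank brochures and sales statements.
STRONG = {
    "independent_lab_test",
    "field_performance_record",
    "engineering_documentation",
    "certified_compliance_data",
}
WEAK = {"brochure", "sales_statement"}


def weakly_supported(claims):
    """Return the names of claims that have no strong evidence attached."""
    return [
        name
        for name, evidence in claims.items()
        if not (set(evidence) & STRONG)
    ]


# Hypothetical claims mapped to the evidence types supplied for each.
claims = {
    "thermal_performance": ["brochure", "independent_lab_test"],
    "network_stability": ["sales_statement"],
    "fatigue_resistance": ["brochure"],
}

flagged = weakly_supported(claims)
print(flagged)
```

Flagged claims are candidates for a supplier clarification request rather than for scoring.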

Lifecycle relevance

Benchmarking should cover not just purchase-stage performance, but installation, integration, maintenance, energy impact, fatigue behavior, and replacement implications where relevant.

Version control

As the project evolves, benchmark criteria and assumptions must be updated formally. Teams need to know which version supports which decision.
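A minimal way to keep that link explicit is to record the benchmark version each decision relied on, then list decisions made against an older version. The decision names and version numbers below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """A project decision and the benchmark version it was based on."""
    name: str
    benchmark_version: int


def stale_decisions(decisions, current_version):
    """Return names of decisions made against an older benchmark version."""
    return [d.name for d in decisions if d.benchmark_version < current_version]


# Hypothetical decision log; the benchmark is currently at version 3.
log = [
    Decision("shortlist modular unit suppliers", 1),
    Decision("approve IoT platform vendor", 3),
    Decision("accept HVAC substitution", 2),
]

needs_review = stale_decisions(log, current_version=3)
print(needs_review)
```

Decisions on the resulting list are not necessarily wrong, but they should be re-checked against the current criteria.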

Cross-functional alignment

Engineering, procurement, operations, and business leadership should agree on what matters most and how trade-offs are handled. Otherwise, the benchmark will be ignored at the exact moment it matters most.

Exception rules

Sometimes a lower-scoring supplier is still selected for strategic reasons. That is acceptable only if the deviation is documented, justified, and reviewed against project risk.

How benchmarking can better support procurement decisions

For procurement-focused readers, the key lesson is this: benchmarking should not sit beside procurement; it should shape procurement. If benchmarking comparison is disconnected from sourcing strategy, it becomes an academic exercise.

To make benchmarking useful in procurement, teams should ensure that:

- Bid specifications match benchmark criteria.

- Supplier clarification requests are fed back into the benchmark structure.

- Total cost of ownership is considered alongside acquisition price.

- Technical non-conformities are quantified, not described vaguely.

- Substitution approvals require benchmark-equivalent evidence.

- Final award decisions can be explained through benchmark-backed logic.
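The total cost of ownership point in the list above can be made concrete with simple arithmetic. This sketch assumes an undiscounted model with only three cost components, and all figures are hypothetical; a real evaluation would likely add replacement, downtime, and financing costs.

```python
def total_cost_of_ownership(acquisition, installation, annual_operating, years):
    """Undiscounted TCO: upfront costs plus operating cost over the period."""
    return acquisition + installation + annual_operating * years


# Hypothetical offers evaluated over a 10-year period.
low_price_offer = total_cost_of_ownership(
    acquisition=100_000, installation=10_000, annual_operating=12_000, years=10
)
high_price_offer = total_cost_of_ownership(
    acquisition=130_000, installation=8_000, annual_operating=7_000, years=10
)

print(low_price_offer, high_price_offer)
```

In this example the offer with the lower acquisition price is the more expensive one over ten years, which is the kind of reversal an acquisition-price comparison alone would miss.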

This matters for distributors, agents, and resellers as well. If they understand how end clients benchmark products, they can prepare stronger documentation, reduce friction in evaluation, and position their offering more effectively in competitive comparisons.

The value of independent benchmarking data

One reason benchmarking breaks down mid-project is that internal teams are forced to compare supplier-controlled narratives rather than neutral technical evidence. Independent benchmarking data reduces that distortion. It creates a common reference point that is less vulnerable to branding, selective reporting, or inconsistent terminology.

In tourism infrastructure, independent benchmarking is especially valuable when projects involve cross-border sourcing, Chinese manufacturing supply chains, sustainability commitments, and mixed technology stacks. Buyers do not just need promises of quality or innovation. They need proof that performance claims translate into deployable, compliant, and durable outcomes.

This is where data-driven whitepapers, engineering test results, and standardized infrastructure comparisons become strategically useful. They allow project teams to filter options based on measurable performance rather than aesthetic presentation or incomplete vendor storytelling.

Conclusion: benchmarking fails when it is treated as a formality instead of a control system

The benchmarking process breaks down mid-project when the original comparison framework is too weak to support real decisions. In most cases, the failure comes from unstable data, inconsistent benchmarking tools, poor governance, and misalignment between technical evaluation and procurement action. For tourism infrastructure buyers, evaluators, and channel partners, the solution is not more benchmarking language. It is better benchmarking structure.

If your benchmarking system cannot absorb scope changes, verify supplier claims, align departments, and support final sourcing decisions, it will fail when project pressure rises. A strong benchmarking process should help teams compare reliably, document risk clearly, and make procurement decisions with confidence. In a market where durability, compliance, integration, and long-term operating value matter, that level of discipline is not optional. It is what separates attractive proposals from truly defensible choices.

Recommended News

![Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and Certifications Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and Certifications]() Jun 08, 2026Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and CertificationsRecycled polyester vs virgin polyester: compare cost, durability, and certifications with a practical sourcing guide for buyers seeking compliance, value, and long-term performance.

Jun 08, 2026Recycled Polyester vs Virgin Polyester: How to Compare Cost, Durability, and CertificationsRecycled polyester vs virgin polyester: compare cost, durability, and certifications with a practical sourcing guide for buyers seeking compliance, value, and long-term performance.![How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and Price How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and Price]() Jun 07, 2026How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and PriceElectrictoothbrushes buying guide: compare brush modes, bristle types, battery life, and price to find the best balance of comfort, performance, and long-term value.

Jun 07, 2026How to Choose Electric Toothbrushes: Brush Modes, Bristle Types, Battery Life, and PriceElectrictoothbrushes buying guide: compare brush modes, bristle types, battery life, and price to find the best balance of comfort, performance, and long-term value.![]() Jun 05, 2026Baby Sleeping Bags Buying Guide: TOG Ratings, Sizes, Fabrics, and Safe Sleep Fitbabysleepingbags buying guide: compare TOG ratings, safe sizing, fabrics, and fit tips to choose a breathable, secure sleep bag for better comfort and safer nights.

Jun 05, 2026Baby Sleeping Bags Buying Guide: TOG Ratings, Sizes, Fabrics, and Safe Sleep Fitbabysleepingbags buying guide: compare TOG ratings, safe sizing, fabrics, and fit tips to choose a breathable, secure sleep bag for better comfort and safer nights.![]() Jun 04, 2026Platinum Spark Plugs vs Copper: Which Option Lasts Longer and Fits Your Engine?Platinum spark plugs vs copper: discover which option lasts longer, fits your engine better, and delivers stronger fuel efficiency, lower maintenance, and better long-term value.

Jun 04, 2026Platinum Spark Plugs vs Copper: Which Option Lasts Longer and Fits Your Engine?Platinum spark plugs vs copper: discover which option lasts longer, fits your engine better, and delivers stronger fuel efficiency, lower maintenance, and better long-term value.![Custom Metal Fabrication: Processes, Tolerances, and Quote Drivers Custom Metal Fabrication: Processes, Tolerances, and Quote Drivers]() Jun 03, 2026Custom Metal Fabrication: Processes, Tolerances, and Quote DriversCustom metal fabrication guide: compare processes, tolerances, materials, finishes, and quote drivers to reduce risk, control costs, and build reliable tourism infrastructure.

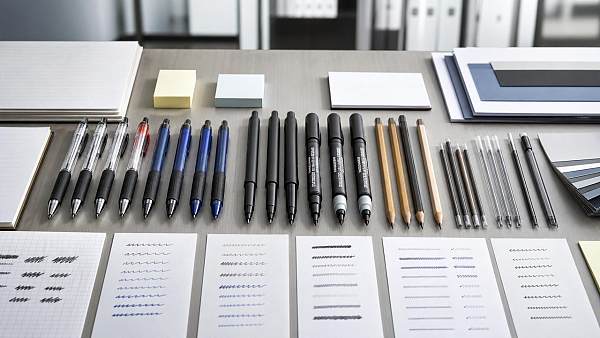

Jun 03, 2026Custom Metal Fabrication: Processes, Tolerances, and Quote DriversCustom metal fabrication guide: compare processes, tolerances, materials, finishes, and quote drivers to reduce risk, control costs, and build reliable tourism infrastructure.![Writing Instruments Buying Guide: Tip Types, Ink Options, and Use Cases Writing Instruments Buying Guide: Tip Types, Ink Options, and Use Cases]() Jun 02, 2026Writing Instruments Buying Guide: Tip Types, Ink Options, and Use CasesWriting instruments buying guide: compare tip types, ink options, durability, and use cases to choose reliable pens, markers, and pencils for every setting.

Jun 02, 2026Writing Instruments Buying Guide: Tip Types, Ink Options, and Use CasesWriting instruments buying guide: compare tip types, ink options, durability, and use cases to choose reliable pens, markers, and pencils for every setting.![Are Last-mile delivery drones ready for urban logistics? Are Last-mile delivery drones ready for urban logistics?]() Jun 01, 2026Are Last-mile delivery drones ready for urban logistics?Last-mile delivery drones are reshaping urban logistics—discover where they deliver real ROI, what risks to test, and how to plan scalable drone operations.

Jun 01, 2026Are Last-mile delivery drones ready for urban logistics?Last-mile delivery drones are reshaping urban logistics—discover where they deliver real ROI, what risks to test, and how to plan scalable drone operations.![Is a makeupbrushesset worth buying for daily use? Is a makeupbrushesset worth buying for daily use?]() May 31, 2026Is a makeupbrushesset worth buying for daily use?makeupbrushesset guide: discover if a daily brush set is worth buying, how to judge quality, hygiene, cost per use, and choose tools that improve every routine.

May 31, 2026Is a makeupbrushesset worth buying for daily use?makeupbrushesset guide: discover if a daily brush set is worth buying, how to judge quality, hygiene, cost per use, and choose tools that improve every routine.![Why Chemical Manufacturing Risks Are Rising in 2026 Why Chemical Manufacturing Risks Are Rising in 2026]() May 30, 2026Why Chemical Manufacturing Risks Are Rising in 2026Chemical manufacturing risks are rising in 2026. Learn how verified data, supplier controls, compliance evidence, and benchmarking can reduce exposure.

May 30, 2026Why Chemical Manufacturing Risks Are Rising in 2026Chemical manufacturing risks are rising in 2026. Learn how verified data, supplier controls, compliance evidence, and benchmarking can reduce exposure.![How Sustainable Furniture for Hotels Cuts Long-Term Costs How Sustainable Furniture for Hotels Cuts Long-Term Costs]() May 30, 2026How Sustainable Furniture for Hotels Cuts Long-Term CostsSustainable furniture for hotels lowers lifecycle costs with durable, repairable, compliant designs that reduce replacements, downtime, and waste.

May 30, 2026How Sustainable Furniture for Hotels Cuts Long-Term CostsSustainable furniture for hotels lowers lifecycle costs with durable, repairable, compliant designs that reduce replacements, downtime, and waste.![Contract Manufacturing Costs Often Hide in Change Orders Contract Manufacturing Costs Often Hide in Change Orders]() May 29, 2026Contract Manufacturing Costs Often Hide in Change OrdersContract manufacturing costs can hide in change orders. Learn how TerraVista Metrics reveals supplier risk, specification drift, and budget exposure before production.

May 29, 2026Contract Manufacturing Costs Often Hide in Change OrdersContract manufacturing costs can hide in change orders. Learn how TerraVista Metrics reveals supplier risk, specification drift, and budget exposure before production.![Why high-end furniture for hotels shapes guest reviews Why high-end furniture for hotels shapes guest reviews]() May 28, 2026Why high-end furniture for hotels shapes guest reviewsHigh-end furniture for hotels shapes comfort, trust, and guest reviews. Discover how durability, ergonomics, and premium design influence bookings, ratings, and brand value.

May 28, 2026Why high-end furniture for hotels shapes guest reviewsHigh-end furniture for hotels shapes comfort, trust, and guest reviews. Discover how durability, ergonomics, and premium design influence bookings, ratings, and brand value.![Why system integration for tourism fails in multi site projects Why system integration for tourism fails in multi site projects]() May 25, 2026Why system integration for tourism fails in multi site projectsSystem integration for tourism often fails in multi-site projects due to weak standards, untested interfaces, and poor coordination. Learn the checklist that reduces downtime and protects guest experience.

May 25, 2026Why system integration for tourism fails in multi site projectsSystem integration for tourism often fails in multi-site projects due to weak standards, untested interfaces, and poor coordination. Learn the checklist that reduces downtime and protects guest experience.![Is sustainable tourism certification worth the effort? Is sustainable tourism certification worth the effort?]() May 24, 2026Is sustainable tourism certification worth the effort?Sustainable tourism certification: is it worth the effort? Discover how it cuts risk, controls lifecycle costs, and builds credible market advantage for tourism businesses.

May 24, 2026Is sustainable tourism certification worth the effort?Sustainable tourism certification: is it worth the effort? Discover how it cuts risk, controls lifecycle costs, and builds credible market advantage for tourism businesses.![When a smart hotel exporter is the safer choice When a smart hotel exporter is the safer choice]() May 23, 2026When a smart hotel exporter is the safer choiceSmart hotel exporter selection is safer when backed by verified data, interoperability insight, and compliance readiness. Learn how to reduce procurement risk and choose with confidence.

May 23, 2026When a smart hotel exporter is the safer choiceSmart hotel exporter selection is safer when backed by verified data, interoperability insight, and compliance readiness. Learn how to reduce procurement risk and choose with confidence.![What guests notice first in smart hotel guest experience What guests notice first in smart hotel guest experience]() May 22, 2026What guests notice first in smart hotel guest experienceSmart hotel guest experience starts in the first 10 minutes. See what guests notice first—from check-in speed to room controls—and how hotels can turn first impressions into trust.

May 22, 2026What guests notice first in smart hotel guest experienceSmart hotel guest experience starts in the first 10 minutes. See what guests notice first—from check-in speed to room controls—and how hotels can turn first impressions into trust.![How smart hotel sustainability affects brand and payback How smart hotel sustainability affects brand and payback]() May 22, 2026How smart hotel sustainability affects brand and paybackSmart hotel sustainability shapes hotel brand value and payback through energy savings, guest comfort, and data-backed performance. See what drives real ROI.

May 22, 2026How smart hotel sustainability affects brand and paybackSmart hotel sustainability shapes hotel brand value and payback through energy savings, guest comfort, and data-backed performance. See what drives real ROI.![How smart hotel energy efficiency cuts costs without upgrades How smart hotel energy efficiency cuts costs without upgrades]() May 22, 2026How smart hotel energy efficiency cuts costs without upgradesSmart hotel energy efficiency helps hotels cut utility costs fast with occupancy-based controls, smarter scheduling, and clear data insights—without costly system upgrades.

May 22, 2026How smart hotel energy efficiency cuts costs without upgradesSmart hotel energy efficiency helps hotels cut utility costs fast with occupancy-based controls, smarter scheduling, and clear data insights—without costly system upgrades.![Are smart hotel benefits worth it for smaller properties? Are smart hotel benefits worth it for smaller properties?]() May 21, 2026Are smart hotel benefits worth it for smaller properties?Smart hotel benefits for smaller properties: learn how smart locks, energy control, and automation can cut costs, improve guest service, and deliver stronger ROI.

May 21, 2026Are smart hotel benefits worth it for smaller properties?Smart hotel benefits for smaller properties: learn how smart locks, energy control, and automation can cut costs, improve guest service, and deliver stronger ROI.![Ecoinvent Cost, Licensing, and ROI in 2026 Ecoinvent Cost, Licensing, and ROI in 2026]() May 20, 2026Ecoinvent Cost, Licensing, and ROI in 2026Ecoinvent cost, licensing, and ROI in 2026 explained for enterprise buyers. Compare pricing, usage rights, compliance value, and procurement impact to make smarter sustainability data decisions.

May 20, 2026Ecoinvent Cost, Licensing, and ROI in 2026Ecoinvent cost, licensing, and ROI in 2026 explained for enterprise buyers. Compare pricing, usage rights, compliance value, and procurement impact to make smarter sustainability data decisions.![How environmental sustainability cuts long term operating risk How environmental sustainability cuts long term operating risk]() May 22, 2026How environmental sustainability cuts long term operating riskEnvironmental sustainability helps cut long-term operating risk by improving efficiency, durability, compliance, and supply chain resilience. Learn how data-led decisions protect asset value.

May 22, 2026How environmental sustainability cuts long term operating riskEnvironmental sustainability helps cut long-term operating risk by improving efficiency, durability, compliance, and supply chain resilience. Learn how data-led decisions protect asset value.![Sustainable Tourism Development Trends to Watch in 2026 Sustainable Tourism Development Trends to Watch in 2026]() May 22, 2026Sustainable Tourism Development Trends to Watch in 2026Sustainable tourism development trends for 2026: explore carbon-led procurement, resilient infrastructure, smart system integration, and lifecycle value to future-proof tourism assets.

May 22, 2026Sustainable Tourism Development Trends to Watch in 2026Sustainable tourism development trends for 2026: explore carbon-led procurement, resilient infrastructure, smart system integration, and lifecycle value to future-proof tourism assets.![Hospitality Management Metrics That Actually Improve Guest Retention Hospitality Management Metrics That Actually Improve Guest Retention]() May 20, 2026Hospitality Management Metrics That Actually Improve Guest RetentionHospitality management metrics that truly boost guest retention: discover which service, infrastructure, and digital KPIs help evaluators compare properties, reduce churn, and improve long-term value.

May 20, 2026Hospitality Management Metrics That Actually Improve Guest RetentionHospitality management metrics that truly boost guest retention: discover which service, infrastructure, and digital KPIs help evaluators compare properties, reduce churn, and improve long-term value.![Smart warehousing mistakes that slow order fulfillment Smart warehousing mistakes that slow order fulfillment]() May 20, 2026Smart warehousing mistakes that slow order fulfillmentSmart warehousing mistakes can quietly delay fulfillment, raise safety risks, and reduce inventory accuracy. Discover a practical checklist to improve speed, control, and warehouse performance.

May 20, 2026Smart warehousing mistakes that slow order fulfillmentSmart warehousing mistakes can quietly delay fulfillment, raise safety risks, and reduce inventory accuracy. Discover a practical checklist to improve speed, control, and warehouse performance.

Quarterly Executive Summaries Delivered Directly.

Join 50,000+ industry leaders who receive our proprietary market analysis and policy outlooks before they hit the public library.